Create a pipeline using the SPL2 Pipeline Builder

The SPL2 Pipeline Builder lets you create and configure pipelines using SPL2 (Search Processing Language) statements. Normally, you use SPL2 search syntax to search through indexed data. In the Splunk Data Stream Processor (DSP), however, you use SPL2 statements to configure the functions in your pipeline that transform incoming streaming data. Unlike SPL2 search syntax, DSP SPL2 supports variable assignments and requires a semicolon to terminate each statement. Use the SPL2 Pipeline Builder if you are already experienced with SPL2. In the SPL2 Pipeline Builder, you can also copy and share pipelines with other users, or get an overview of the configuration of your entire pipeline without clicking through each function on the canvas.

You can access the SPL2 Pipeline Builder in two ways:

- From the Data Stream Processor homepage, click Data Management > Create New Pipeline and then select the SPL2 Pipeline Builder.

- From the Data Stream Processor homepage, click Build Pipeline and select a source. From the Canvas Pipeline Builder, click SPL to toggle to the SPL2 Pipeline Builder.

The pipeline toggle button is currently a beta feature, and repeatedly toggling between the two builders can lead to unexpected results. If you are editing an active pipeline, using the toggle button can lead to data loss.

Add a data source to a pipeline using SPL2

Your pipeline must start with the from function and a data source function. The from function reads data from a designated data source function. The required syntax is:

| from <source_function>;

If you've toggled over to the SPL2 Pipeline Builder from the Canvas Builder, then this step is done for you.

Add functions to a pipeline using SPL2

You can expand on your SPL2 statement to add functions to a pipeline. The pipe ( | ) character is used to separate the syntax of one function from the next function.

The following example reads data from the Read from Splunk Firehose source function and then pipes that data to the Where function. The where function filters for records with the "syslog" sourcetype.

| from read_splunk_firehose() | where source_type="syslog";

You can continue to add functions to your pipeline by continuing to pipe from the last function. The following example adds an Extract Timestamp function to your pipeline to extract a timestamp from the body field to the timestamp field.

| from read_splunk_firehose() | where source_type="syslog" | extract_timestamp field="timestamp" rules=[cisco_timestamp(), iso8601_timestamp(), rfc2822_timestamp(), syslog_timestamp()];

As you add functions to your pipeline, click Start Preview to compile your SPL2 statements and validate each function's configuration. Remember that every SPL2 statement must end with a semicolon ( ; ).
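The piping and termination rules described above can be illustrated outside of DSP with a small Python helper. This is a minimal sketch, not part of the Data Stream Processor: build_statement is a hypothetical name, and the helper only concatenates text. It does not compile or validate SPL2, so you still need Start Preview for that. The sample call reproduces the Where example from this section.

```python
def build_statement(source, *functions):
    """Join a source function and any streaming functions into one SPL2 statement.

    Hypothetical helper for illustration only. It mirrors the syntax rules in
    the text: start with from, separate functions with a pipe, end with a
    semicolon.
    """
    parts = ["| from " + source]
    parts.extend(functions)
    return " | ".join(parts) + ";"


statement = build_statement(
    "read_splunk_firehose()",
    'where source_type="syslog"',
)
print(statement)
```

Each additional function string passed to the helper is appended after another pipe, which matches how the examples in this section grow from one statement to the next.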

Specify a destination to send data to using SPL2

When you are ready to end your pipeline, end your SPL2 statement with a pipe ( | ), the Into function, and a sink function. The into function sends data to a designated data destination (sink function).

In the following example, we are expanding on the previous SPL2 statements by adding a Write to the Splunk platform with Batching sink function. This sink function sends your data to an external Splunk Enterprise environment.

| from read_splunk_firehose() | where source_type="syslog" | extract_timestamp field="timestamp" rules=[cisco_timestamp(), iso8601_timestamp(), rfc2822_timestamp(), syslog_timestamp()] | into splunk_enterprise_indexes("ec8b127d-ac69-4a5c-8b0c-20c971b78b90", cast(map_get(attributes, "index"), "string"), "main", {"async": "true", "hec-enable-ack": "false", "hec-token-validation": "true", "hec-gzip-compression": "true"}, "100B", 5000);

Click Start Preview to compile your SPL2 statements and validate each function's configuration. Remember that every SPL2 statement must end with a semicolon ( ; ).

Branch a pipeline using SPL2

You can also perform different transformations and send your data to multiple destinations by branching your pipeline. To branch your pipeline, declare a variable and assign the functions that you want the branches to share to that variable. Then invoke the declared variable to define each branch, writing each branch as an SPL2 statement that ends with a semicolon ( ; ). Variable names must begin with a dollar sign ($) and can contain only letters, numbers, or underscores.
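The naming rule and the declare-then-invoke pattern can be sketched in Python as a quick check outside of DSP. This is an illustrative sketch under stated assumptions: the helper names are hypothetical, "letters" is taken to mean ASCII letters, at least one character is assumed to follow the dollar sign, and sink_a and sink_b are placeholders rather than real sink functions. The code only formats text and does not compile SPL2.

```python
import re

# Assumption: "letters" means ASCII letters, and at least one character follows "$".
VARIABLE_NAME = re.compile(r"\$[A-Za-z0-9_]+")


def is_valid_variable_name(name):
    """Return True if name follows the naming rule described in the text."""
    return VARIABLE_NAME.fullmatch(name) is not None


def branch_statements(variable, shared, branches):
    """Render one assignment statement plus one statement per branch.

    shared is the text of the functions common to all branches. Each entry
    in branches is a list of function strings for that branch.
    """
    if not is_valid_variable_name(variable):
        raise ValueError("invalid SPL2 variable name: " + variable)
    statements = [variable + " = " + shared + ";"]
    for functions in branches:
        statements.append(" | ".join(["| from " + variable] + functions) + ";")
    return statements


for line in branch_statements(
    "$statement_1",
    '| from read_splunk_firehose() | where source_type="syslog"',
    [["into sink_a()"], ["into sink_b()"]],  # sink_a and sink_b are placeholders
):
    print(line)
```

Keeping the shared functions in a single variable means that a change to the source or filter only has to be made once, and every branch that invokes the variable picks it up.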

The following example adds a branch to the pipeline built in the previous section. It declares the $statement_1 variable and assigns the Read from Splunk Firehose source function and the Where function to it. Each branch then invokes the variable with the from function.

$statement_1 = | from read_splunk_firehose() | where source_type="syslog";

| from $statement_1

| extract_timestamp field="timestamp" rules=[cisco_timestamp(), iso8601_timestamp(), rfc2822_timestamp(), syslog_timestamp()]

| into splunk_enterprise_indexes("ec8b127d-ac69-4a5c-8b0c-20c971b78b90", cast(map_get(attributes, "index"), "string"), "main", {"async": "true", "hec-enable-ack": "false", "hec-token-validation": "true", "hec-gzip-compression": "true"}, "100B", 5000);

| from $statement_1

| to_splunk_json index = cast(map_get(attributes, "index"), "string")

| batch_bytes bytes = to_bytes(json) size="2MB" millis=5000

| into splunk_enterprise("SPLUNK_CONNECTION_ID", "INDEX", bytes);

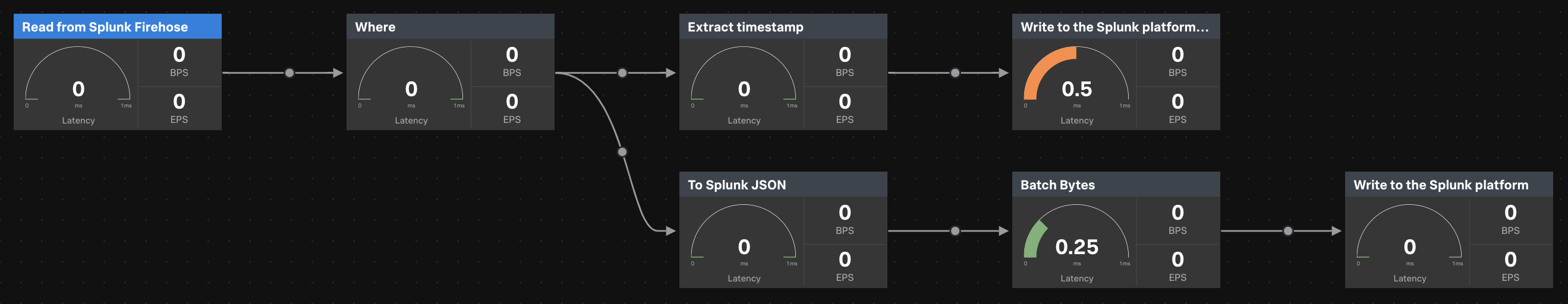

The first branch reads records that have source_type matching syslog from Splunk Firehose and extracts timestamp from the records to a top-level field. See Extract Timestamp for more details. The transformed records are then sent to Splunk Enterprise using the Write to the Splunk platform sink function.

The second branch also reads records that have source_type matching syslog from Splunk Firehose but transforms the data using the To Splunk JSON and Batch Bytes streaming functions. The data is then sent to Splunk Enterprised using a separate Write to the Splunk Platform sink function from the first branch.

Click Start Preview to compile your SPL2 statements and validate the pipeline's configuration. Here's what the pipeline from the example looks like:

Differences and inconsistent behaviors between the Canvas Builder and the SPL2 Pipeline Builder

You can toggle between the Canvas Builder and the SPL2 Pipeline Builder, but the two builders differ in several ways.

Differences

| Canvas Builder | SPL2 Pipeline Builder |
|---|---|
| Accepts SPL2 expressions as input. | Accepts SPL2 statements as input. SPL2 statements support variable assignments and must be terminated by a semicolon. |
| For source and sink functions, optional arguments that are blank are automatically filled in with their default values. | If you want to specify any optional arguments in source or sink functions, you must provide all arguments up until the optional argument that you want to omit, and you must omit all subsequent optional arguments. |
| When using a connector, the connection name can be selected as the connection-id argument from a dropdown in the UI. | When using a connector, the connection-id must be explicitly passed as an argument. You can view the connection-id of your connections by going to the Connections Management page. |
| Source and sink functions have implied from and into functions, respectively. | Source and sink functions require explicit from and into functions. |
| You can stop a preview session at any time in the Canvas Builder by clicking Stop Preview. | Once you click Build, the resulting preview session cannot be stopped. To stop the preview session, switch over to the Canvas Builder. |

Toggling between the SPL2 Pipeline Builder and the Canvas Builder (BETA)

The toggle button is currently a beta feature. Repeated toggles between the Canvas Builder and the SPL2 Pipeline Builder may produce unexpected results. The following table describes specific use cases where unexpected results have been observed.

If you are editing an active pipeline, toggling between the Canvas and the SPL builders can lead to data loss as the pipeline state is unable to be restored. Do not use the pipeline toggle if you are editing an active pipeline.

| Pipeline contains... | Behavior |
|---|---|
| Variable assignments | Variable names are changed and statements may be reordered. |
| Renamed function names | Functions are reverted back to their default names. |
| A source or sink function with optional arguments left blank | Optional arguments are set to their default values when toggling from the Canvas Builder to the SPL2 Pipeline Builder. |
| Comments | Comments are stripped out when toggling between builders. |
| A stats or aggregate with trigger function that uses an evaluation scalar function within the aggregation function | Each function is called separately when toggling from the SPL2 Pipeline Builder to the Canvas Builder. For example, if your stats function contained the if scalar function as part of the aggregation function count(if(status="404", true, null)) AS status_code, the Canvas Builder assumes that you are calling two different aggregation functions: count(if(status="404") and if(status="404") AS status_code, both of which are invalid. |

Additional resources

- See the Function Reference for a list of available functions and examples of how to use those functions in SPL2.

- See the SPL2 Search Manual to learn more about SPL2.

Even though DSP supports SPL2 statements, there are major differences in terminology, syntax, and supported functions. For more information, see SPL2 for DSP.


This documentation applies to the following versions of Splunk® Data Stream Processor: 1.1.0
