GCP prerequisites for Data Manager

A GCP admin completes prerequisites ahead of time so that a Splunk Admin can use Data Manager for onboarding. Alternatively, a GCP admin can complete the entire process. Data Manager contains optional steps to guide you through this choice.

Common Information Model prerequisites

Data Manager supports Common Information Model (CIM) normalization for Google Cloud Platform inputs when the Splunk Add-on for Google Cloud Platform is installed on the part of your Splunk Cloud deployment that performs parsing or search-time functionality for your data. The add-on must be installed, but it does not need to be configured.

Download version 4.1.0 or later of the Splunk Add-on for Google Cloud Platform from Splunkbase.

For more information, see the Splunk Add-on for Google Cloud Platform documentation manual.

For information on the CIM, see the Overview of the Splunk Common Information Model topic in the Common Information Model Add-on manual.

Permissions prerequisites

GCP permissions are required to set up the prerequisites for onboarding GCP logs. If you or your GCP administrator encounters a permission issue, verify that the GCP user has the permissions needed to perform the corresponding action in GCP.

- Navigate to console.cloud.google.com and log in to the Google account where you want to configure your GCP service accounts and set up your GCP credentials.

- Create a role, if you have not already done so, and enable the following permissions, which the service account needs to run the Terraform template:

- dataflow.jobs.cancel

- dataflow.jobs.create

- dataflow.jobs.get

- iam.roles.get

- iam.roles.create

- iam.roles.list

- iam.roles.undelete

- iam.roles.update

- iam.roles.delete

- iam.serviceAccounts.actAs

- logging.logEntries.create

- logging.sinks.create

- logging.sinks.delete

- logging.sinks.get

- pubsub.subscriptions.consume

- pubsub.subscriptions.create

- pubsub.subscriptions.delete

- pubsub.subscriptions.get

- pubsub.subscriptions.update

- pubsub.topics.attachSubscription

- pubsub.topics.create

- pubsub.topics.delete

- pubsub.topics.get

- pubsub.topics.getIamPolicy

- pubsub.topics.setIamPolicy

- resourcemanager.projects.get

- resourcemanager.projects.getIamPolicy

- resourcemanager.projects.setIamPolicy

- storage.buckets.create

- storage.buckets.delete

- storage.buckets.get

- storage.objects.create

- storage.objects.delete

- storage.objects.get

- storage.objects.list

- Grant your service account access to the projects where you want to collect data.

- (Optional) Grant users access to your service account.

- Create a service account for Terraform provisioning.

Ask your GCP admin to create a service account for Data Manager to use to query the status of your deployment. No IAM role needs to be attached to this service account at this point.

When you run the Terraform template after the input is created, an IAM role with the correct permission set is attached to this service account for each project.

- Grant your service account access to the projects where you want to collect data.

- (Optional) Grant users access to your service account.

- Enable APIs in your Google Cloud project.

Ask your GCP admin to enable the following APIs for the GCP project that sends your data to Splunk Cloud:

- Cloud Pub/Sub API

- Compute Engine API

- Dataflow API

- IAM API

- Create a Google Cloud Storage (GCS) bucket to store the Terraform state.

Note: If you have already created a bucket that you can use for Terraform state management, you can skip this step.
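A GCP admin who prefers the command line to the console could prepare several of the steps above along the following lines. This is a minimal sketch, not the procedure Data Manager prescribes: it builds a custom role definition from the permission list in this topic and prints the `gcloud` commands for creating the role, enabling the APIs, and creating the state bucket, without running them. The project ID, role ID, file name, bucket name, and location are hypothetical placeholders, and the `*.googleapis.com` service names are assumed to correspond to the four APIs listed above.

```python
import json
import shlex

# Permissions copied from the role step in this topic.
ROLE_PERMISSIONS = """
dataflow.jobs.cancel dataflow.jobs.create dataflow.jobs.get
iam.roles.get iam.roles.create iam.roles.list iam.roles.undelete
iam.roles.update iam.roles.delete iam.serviceAccounts.actAs
logging.logEntries.create logging.sinks.create logging.sinks.delete
logging.sinks.get
pubsub.subscriptions.consume pubsub.subscriptions.create
pubsub.subscriptions.delete pubsub.subscriptions.get
pubsub.subscriptions.update pubsub.topics.attachSubscription
pubsub.topics.create pubsub.topics.delete pubsub.topics.get
pubsub.topics.getIamPolicy pubsub.topics.setIamPolicy
resourcemanager.projects.get resourcemanager.projects.getIamPolicy
resourcemanager.projects.setIamPolicy
storage.buckets.create storage.buckets.delete storage.buckets.get
storage.objects.create storage.objects.delete storage.objects.get
storage.objects.list
""".split()

# Assumption: service names for Cloud Pub/Sub, Compute Engine, Dataflow, and IAM.
REQUIRED_SERVICES = [
    "pubsub.googleapis.com",
    "compute.googleapis.com",
    "dataflow.googleapis.com",
    "iam.googleapis.com",
]


def role_definition(title):
    """Build a custom role body suitable for `gcloud iam roles create --file`."""
    return {
        "title": title,
        "description": "Permissions needed to run the Data Manager Terraform template",
        "stage": "GA",
        "includedPermissions": sorted(ROLE_PERMISSIONS),
    }


def prerequisite_commands(project_id, role_id, role_file, bucket_name, location):
    """Return the gcloud commands as argument lists; nothing is executed here."""
    return [
        ["gcloud", "iam", "roles", "create", role_id,
         f"--project={project_id}", f"--file={role_file}"],
        ["gcloud", "services", "enable", *REQUIRED_SERVICES,
         f"--project={project_id}"],
        ["gcloud", "storage", "buckets", "create", f"gs://{bucket_name}",
         f"--project={project_id}", f"--location={location}"],
    ]


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    print(json.dumps(role_definition("Data Manager Terraform"), indent=2))
    for command in prerequisite_commands(
        project_id="my-project",
        role_id="dataManagerTerraform",
        role_file="role.json",
        bucket_name="my-terraform-state",
        location="us-central1",
    ):
        print(shlex.join(command))
```

Save the printed JSON to the file named in the first command before running it. Review each command against your organization's policies first, and skip the bucket command if a bucket for Terraform state already exists.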

Roles prerequisites

The following default GCP roles carry the permissions described above. Ensure that at least one of them is bound to the service account or user that enables the GCP service APIs:

Roles

- Service Config Editor

- Editor

- Owner

- Storage Admin

GCP data source prerequisites

Some GCP data sources only need to be selected during onboarding, but others need to be configured ahead of time.

Configure Data Access Logs

If you select Data Access Logs as a data source, see https://cloud.google.com/logging/docs/audit/configure-data-access on Google Cloud.

Configure Access Transparency Logs

If you select Access Transparency Logs as a data source, see https://cloud.google.com/cloud-provider-access-management/access-transparency/docs/enable on Google Cloud.

Onboarding best practices

When you create a data input for folders or an organization in your GCP deployment, verify that no child folder or project in the same deployment is already configured in this input or in any other input. Overlapping inputs can result in data duplication.
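The overlap check described above can be illustrated with a small script. This is only a sketch under stated assumptions: Data Manager does not provide this function, the resource names are hypothetical, and the `parent_of` mapping stands in for a hierarchy that you would export from your own GCP organization. Given every scope selected across your inputs, it reports each pair in which one selected scope is an ancestor of another.

```python
def ancestors(node, parent_of):
    """Yield each ancestor of node, nearest first, guarding against cycles."""
    seen = set()
    current = parent_of.get(node)
    while current is not None and current not in seen:
        seen.add(current)
        yield current
        current = parent_of.get(current)


def find_overlaps(scopes, parent_of):
    """Return (ancestor, descendant) pairs where both scopes are selected.

    Exact repeats of the same scope are collapsed by the set and are
    not reported; check for those separately if needed.
    """
    selected = set(scopes)
    overlaps = []
    for scope in sorted(selected):
        for ancestor in ancestors(scope, parent_of):
            if ancestor in selected:
                overlaps.append((ancestor, scope))
    return overlaps


if __name__ == "__main__":
    # Hypothetical hierarchy: child resource -> parent resource.
    parent_of = {
        "folders/100": "organizations/1",
        "folders/200": "folders/100",
        "projects/app": "folders/200",
        "projects/other": "organizations/1",
    }
    # Scopes selected across all inputs (hypothetical).
    selected = ["folders/100", "projects/app", "projects/other"]
    for ancestor, descendant in find_overlaps(selected, parent_of):
        print(f"{descendant} is already covered by {ancestor}")
```

In this example, `projects/app` sits under `folders/100`, so an input for the folder and an input for the project would both collect the same logs.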

Stages of onboarding

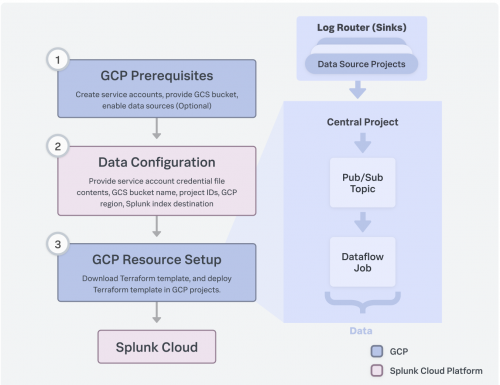

Data Manager walks you through various stages of onboarding your GCP accounts.

The onboarding steps are described in detail within Data Manager. The details are not duplicated here.

Onboard a GCP account

Onboarding a GCP account consists of the following stages:

- Configure the GCP prerequisites in the data account.

- Configure the data account, regions, and data sources.

- Create a data ingestion Terraform stack.


This documentation applies to the following versions of Data Manager: 1.7.0
