Example 1: Blue Coat proxy logs

This example shows how to create an add-on for Blue Coat web proxy logs from hosts running SGOS 5.3.3.8. (Earlier versions of Blue Coat require additional work to extract some of the required fields.)

Choose a folder name for the add-on

Name this add-on Splunk_TA-bluecoat. Create the add-on folder at $SPLUNK_HOME/etc/apps/Splunk_TA-bluecoat.

Step 1: Capture and index the data

To get started, set up a data input to get Blue Coat web proxy data into the Splunk App for Enterprise Security. Blue Coat submits logs via syslog over UDP port 514, so use a network-based data input. Because a network-based input cannot determine the source type from the content of the logs, the source type must be manually defined as "bluecoat".

Manually define the source type as "bluecoat".
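A network input for this step might look like the following sketch. It assumes the input is defined in an inputs.conf file (the source does not say where the input is configured, so the file location is an assumption) and that the proxy sends syslog to UDP port 514 on the Splunk host as described above.

```ini
# inputs.conf (file location is an assumption; the source does not specify it)

# Listen for Blue Coat syslog traffic on UDP port 514.
[udp://514]
# A network input cannot infer the source type from the content,
# so assign it explicitly.
sourcetype = bluecoat
# Optional: set the host field from the sender's IP address.
connection_host = ip
```

If other devices also send syslog to the same port, they would receive the "bluecoat" source type as well, so a dedicated port or host for the proxy may be preferable.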

Define a source type for the data

This add-on handles only one type of log, so we need only a single source type, which we will name "bluecoat". We define the source type in default/props.conf of Splunk_TA-bluecoat:

    [source::....bluecoat]
    sourcetype = bluecoat

    [bluecoat]

Handle timestamp recognition

Looking at the events, we see that Splunk successfully parses the date and time so there is no need to customize the timestamp recognition.

Configure line breaking

Each log message ends with a newline, so we need to disable line merging to prevent multiple messages from being combined into one event. Disable line merging by setting SHOULD_LINEMERGE to false in props.conf:

    [source::....bluecoat]
    sourcetype = bluecoat

    [bluecoat]
    SHOULD_LINEMERGE = false

Step 2: Identify relevant IT security events

Next, identify which Enterprise Security dashboards should display the Blue Coat web proxy data.

Understand your data and Enterprise Security dashboards

The data from the Blue Coat web proxy should go into the Proxy Center and the Proxy Search dashboards.

Step 3: Create field extractions and aliases

Now create the field extractions that will populate the fields according to the Common Information Model. Before we begin, restart Splunk so that it will recognize the add-on and source type defined in the previous steps.

A review of the Common Information Model and the Dashboard Requirements Matrix shows that the Blue Coat add-on needs to define the following fields to work with the Proxy Center and Proxy Search dashboards:

| Domain | Sub-Domain | Field Name | Data Type |
|---|---|---|---|
| Network Protection | Proxy | action | string |
| Network Protection | Proxy | status | int |
| Network Protection | Proxy | src | variable |
| Network Protection | Proxy | dest | variable |
| Network Protection | Proxy | http_content_type | string |
| Network Protection | Proxy | http_referer | string |
| Network Protection | Proxy | http_user_agent | string |
| Network Protection | Proxy | http_method | string |
| Network Protection | Proxy | user | string |
| Network Protection | Proxy | url | string |
| Network Protection | Proxy | vendor | string |
| Network Protection | Proxy | product | string |

Create extractions

Blue Coat data consists of fields separated by spaces. To parse this data, we can use automatic key/value pair extraction with a delimiter and name each field according to its position in the event.

Start by analyzing the data and identifying the available fields. We see that the data contains a lot of duplicate or similar fields that describe activity between different devices. For clarity, create the following temporary naming convention to help characterize the fields:

| Prefix | Description |
|---|---|
| s | Relates to the proxy server |
| c | Relates to the client requesting access through the proxy server |
| cs | Relates to the activity between the client and the proxy server |
| sc | Relates to the activity between the proxy server and the client |
| rs | Relates to the activity between the remote server and the proxy server |

Identify the existing fields and give them temporary names, listed here in the order in which they occur.

| Position | Blue Coat Field |
|---|---|
| 1 | date |
| 2 | time |
| 3 | c-ip |
| 4 | sc-bytes |
| 5 | time-taken |
| 6 | s-action |
| 7 | sc-status |
| 8 | rs-status |
| 9 | rs-bytes |
| 10 | cs-bytes |
| 11 | cs-auth-type |
| 12 | cs-username |
| 13 | sc-filter-result |
| 14 | cs-method |
| 15 | cs-host |
| 16 | cs-version |
| 17 | sr-bytes |
| 18 | cs-uri |
| 19 | cs(Referer) |
| 20 | rs(Content-Type) |
| 21 | cs(User-Agent) |
| 22 | cs(Cookie) |

Once we know what the fields are and what they contain, we can map the relevant fields to the fields required in the Common Information Model (CIM).

| Blue Coat Field | Field in CIM |
|---|---|
| date | date |
| time | time |
| c-ip | src |
| sc-bytes | bytes_in |
| time-taken | duration |
| s-action | action |
| sc-status | status |
| rs-status | N/A |
| rs-bytes | N/A |
| cs-bytes | bytes_out |
| cs-auth-type | N/A |
| cs-username | user |
| sc-filter-result | N/A |
| cs-method | http_method |
| cs-host | dest |
| cs-version | app_version |
| sr-bytes | sr_bytes |
| cs-uri | url |
| cs(Referer) | http_referer |
| rs(Content-Type) | http_content_type |
| cs(User-Agent) | http_user_agent |
| cs(Cookie) | http_cookie |

Next, create the field extractions. Since the Blue Coat data is space-delimited, we start by setting the delimiter to the single space character. Then we define the field names in the order in which they occur. Add the following to default/transforms.conf in the Splunk_TA-bluecoat folder:

    [auto_kv_for_bluecoat]
    DELIMS = " "
    FIELDS = date,time,src,bytes_in,duration,action,status,rs_status,rs_bytes,bytes_out,cs_auth_type,user,sc_filter_result,http_method,dest,app_version,sr_bytes,url,http_referer,http_content_type,http_user_agent,http_cookie

Where:

- DELIMS defines the delimiter between fields (in this case, a space).
- FIELDS defines the field names in the order in which they occur.

Add a reference to the name of the transform to default/props.conf in the Splunk_TA-bluecoat folder to enable it:

    [bluecoat]
    SHOULD_LINEMERGE = false
    REPORT-0auto_kv_for_bluecoat = auto_kv_for_bluecoat

Now define the product and vendor. Since these fields are not included in the data, they will be populated using a lookup.

    ## props.conf
    REPORT-vendor_for_bluecoat = vendor_static_bluecoat
    REPORT-product_for_bluecoat = product_static_proxy

    ## transforms.conf
    [vendor_static_bluecoat]
    REGEX = (.)
    FORMAT = vendor::"Bluecoat"

    [product_static_proxy]
    REGEX = (.)
    FORMAT = product::"Proxy"

    ## bluecoat_vendor_info.csv
    sourcetype,vendor,product
    bluecoat,Bluecoat,Proxy

Note: Do not under any circumstances assign static strings to event fields as this will prevent these fields from being searched in Splunk. Instead, fields that do not exist should be mapped with a lookup. See "Static strings and event fields" in this document for more information.
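A lookup-based definition in the spirit of this note might look like the following sketch. The lookup stanza name and the LOOKUP class name are hypothetical, and the sketch assumes that bluecoat_vendor_info.csv is placed in the add-on's lookups directory; the source does not show this configuration.

```ini
## transforms.conf
# Lookup definition that points at the CSV file shown above.
# The stanza name is hypothetical.
[bluecoat_vendor_info_lookup]
filename = bluecoat_vendor_info.csv

## props.conf
[bluecoat]
# Match each event's sourcetype against the CSV and
# add the vendor and product fields from the matching row.
LOOKUP-vendor_info_for_bluecoat = bluecoat_vendor_info_lookup sourcetype OUTPUT vendor,product
```

With a definition like this, vendor and product are supplied at search time from the CSV row whose sourcetype column matches the event, rather than from a static string attached to the event.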

Now we enable the transforms in default/props.conf using REPORT lines to call out the transforms.conf sections that will enable proper field extractions:

    [bluecoat]
    SHOULD_LINEMERGE = false
    REPORT-0auto_kv_for_bluecoat = auto_kv_for_bluecoat
    REPORT-product_for_bluecoat = product_static_proxy
    REPORT-vendor_for_bluecoat = vendor_static_bluecoat

Generally, it is a good idea to add a field extraction that will process data that is uploaded as a file into Splunk directly. To do this, add a property to default/props.conf that indicates that any files ending with the extension bluecoat should be processed as Blue Coat data:

    [source::....bluecoat]
    sourcetype = bluecoat

    [bluecoat]
    SHOULD_LINEMERGE = false
    REPORT-0auto_kv_for_bluecoat = auto_kv_for_bluecoat
    REPORT-product_for_bluecoat = product_static_proxy
    REPORT-vendor_for_bluecoat = vendor_static_bluecoat

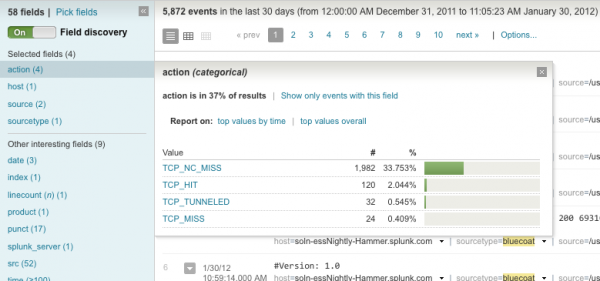

Verify field extractions

Now that the field extractions have been created, we need to verify that they are working correctly. First, restart Splunk so that the changes are applied. Next, search for the source type in the Search dashboard:

sourcetype="bluecoat"

When the results are displayed, select Pick Fields and choose the fields that ought to be populated. Once the fields are selected, click a field name to display a list of the values that appear in that field.

Step 4: Create tags

With the field extractions in place, we now need to add the tags.

Identify necessary tags

The Common Information Model requires that proxy data be tagged with "web" and "proxy":

| Domain | Sub-Domain | Macro | Tags |
|---|---|---|---|
| Network Protection | Proxy | proxy | web proxy |

Create tag properties

Now that we know what tags to create, we can create the tags.

First, we need to create an event type to which we can assign the tags. To do so, we create a stanza in default/eventtypes.conf that assigns an event type of bluecoat to data with the source type of bluecoat:

    [bluecoat]
    search = sourcetype=bluecoat

Next, we assign the tags in default/tags.conf in our add-on:

    [eventtype=bluecoat]
    web = enabled
    proxy = enabled

Verify the tags

Now that the tags have been created, we can verify that they are being applied. We search for the source type in the search view:

sourcetype="bluecoat"

Use the field picker to display the field "tag::eventtype" at the bottom of each event. Review the entries and look for the tag statements at the bottom of the log message.

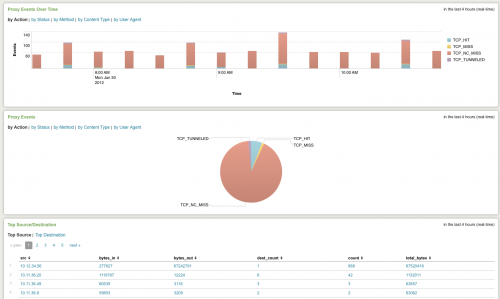

Check Enterprise Security dashboards

Now that the tags and field extractions are complete, the data should show up in the Splunk App for Enterprise Security. The extracted fields and the defined tags fit into the Proxy Center dashboard, so the Blue Coat data ought to be visible on this dashboard. However, the data will not be available immediately, because Enterprise Security uses summary indexing. It may take up to an hour after Splunk has been restarted for the data to appear. After an hour or so, the dashboard begins populating with Blue Coat data.

Step 5: Document and package the add-on

Packaging the add-on makes it easy to deploy to other Splunk installs in your organization, or to post to Splunkbase for use in other organizations. To package an add-on, you only need to provide some simple documentation and compress the files.

Document add-on

Now that the add-on is complete, we create a README file under the root add-on directory:

    ===Blue Coat ProxySG add-on===

    Author: John Doe
    Version/Date: 1.4 October 2012

    Supported product(s):
    This add-on supports Blue Coat ProxySG 5.3.3.8

    Source type(s): This add-on will process data that is source-typed as "bluecoat".

    Input requirements: N/A

    ===Using this add-on===

    Configuration: Manual

    To use this add-on, manually configure the data input with the corresponding source type and it will optimize the data automatically.

Package the add-on

Package up the Blue Coat add-on by converting it into a zip archive named Splunk_TA-bluecoat.zip. To share the add-on, go to Splunkbase, click upload an app and follow the instructions for the upload.


This documentation applies to the following versions of Splunk® Enterprise Security: 3.0, 3.0.1
