Common Information Model

The Common Information Model (CIM) provides a standard method of parsing, categorizing, and normalizing data. Imported data must conform to the CIM in order for it to be properly reported and correlated.

The Splunk Common Information Model (CIM) is important for bringing custom data sources into the Splunk App for Enterprise Security. The CIM identifies which fields must be present in the data for the dashboards to work and which tags must be assigned to the data for reporting and correlation to work correctly. See the Data Source Integration Manual in the Enterprise Security documentation and the CIM topics in the core Splunk documentation for more specific information about how the CIM is used when bringing data sources into Enterprise Security through add-ons.

Common Information Model overview

Why the Common Information Model (CIM) is important

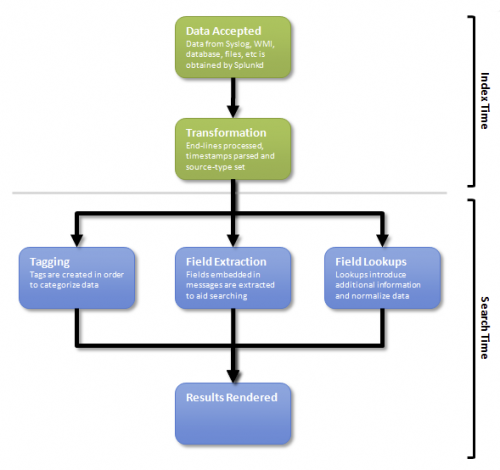

By default, Splunk provides powerful search capabilities for generic IT data. However, more advanced reporting and correlation require that the data be normalized, categorized, and parsed.

Parsing

Unlike text-based search, the robust reporting capabilities of the Splunk App for Enterprise Security rely heavily on field extraction. Parsing occurs at index time, during the transformation phase, and at search time, when field extraction is performed. Refer to "Understand and use the Common Information Model" and the fields list in the core Splunk product documentation for information on parsing additional data.

Categorizing

The Splunk App for Enterprise Security uses Splunk's event type and event type tagging facilities to categorize security data. The Splunk App for Enterprise Security searches are built using event types and tags to query for matching data. Refer to "Understand and use the Common Information Model" in the core Splunk product documentation.

Adding data sources that conform to the CIM

Follow the steps below to add new data sources that conform to the CIM:

- Set up data imports

- Set source types

- Set up field extractions

- Set up data normalization

- Set up tagging

Set up data imports

Creating a new data input

Data inputs can be added to Splunk using the Settings menu. Navigate to Settings > Apps to add or configure an add-on.

New data sources can also be configured manually by editing local/inputs.conf under the respective add-on (for example, $SPLUNK_HOME$/etc/apps/TA-nessus/local/inputs.conf).
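As a minimal sketch of such a manual edit, the stanza below monitors a directory of log files. The monitored path, the index, and the source type name are assumptions chosen for illustration and are not taken from any particular add-on.

```ini
# local/inputs.conf under the add-on
# The path, index, and source type below are hypothetical.
[monitor:///var/log/nessus/scans]
sourcetype = nessus
index = main
disabled = false
```

Setting the source type on the input is only appropriate when every event from that input shares one source type.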

Considerations when adding new inputs

When importing data make sure to consider:

- If events are multi-line then configure line-merging.

- If the data is encoded in an unusual character set then specify the encoding.

- If Splunk cannot interpret the timestamps correctly then configure timestamp extraction.
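The three considerations above map onto settings in props.conf. The fragment below is a sketch only: the source type name, the event-start pattern, the character set, and the timestamp layout are assumed values that must be adapted to the actual data.

```ini
# local/props.conf under the add-on
# [acme:app] is a hypothetical source type.
[acme:app]
# Multi-line events: merge lines, starting a new event at each leading date
SHOULD_LINEMERGE = true
BREAK_ONLY_BEFORE = ^\d{4}-\d{2}-\d{2}
# Unusual character set: name the encoding explicitly
CHARSET = ISO-8859-7
# Timestamp extraction: where the timestamp starts and how it is laid out
TIME_PREFIX = ^
TIME_FORMAT = %Y-%m-%d %H:%M:%S
MAX_TIMESTAMP_LOOKAHEAD = 19
```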

See the Splunk Knowledge Manager Manual for more information on how to set up Splunk to accept new data or to learn about "What Splunk can index" and the types of data Splunk can import.

Set source types

Why source types are important

Correctly source typing data is important because the source type dictates how the data is processed in later steps. Failing to correctly set the source type will prevent fields from being extracted and the data from being properly normalized.

How to define source types

Source types are defined in the props.conf file (for example, $SPLUNK_HOME$/etc/apps/TA-nessus/local/props.conf) and must be referenced by the transforms.conf file (for example, $SPLUNK_HOME$/etc/apps/TA-nessus/local/transforms.conf). At index time, Splunk applies the appropriate transforms that match the given data in order to determine the source type.

- If the data is only used for Enterprise Security and a single source type can be used, click Settings > Inputs > select the input type > select the input > set the source type.

- If the data is to be used by multiple apps, follow the instructions below:

  - Edit transforms.conf to add sections that will match the data and set the source type. Add as many statements as necessary to match the various derivations of the data.

  - Edit props.conf to create the statements that will invoke the transforms for a given source. Note that a single transforms statement in props.conf can reference multiple statements from transforms.conf. Splunk will stop on the first statement that matches, so the statements should be ordered from most specific to least specific.
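The two edits described above might look like the following sketch. The stanza names, regular expressions, source path, and source type names are all hypothetical. The two patterns are deliberately non-overlapping, so the result does not depend on the order in which they are listed.

```ini
# transforms.conf: one stanza per variation of the data (names are hypothetical)
[set_sourcetype_acme_firewall]
REGEX = ACME-FW
DEST_KEY = MetaData:Sourcetype
FORMAT = sourcetype::acme:firewall

[set_sourcetype_acme_vpn]
REGEX = ACME-VPN
DEST_KEY = MetaData:Sourcetype
FORMAT = sourcetype::acme:vpn

# props.conf: invoke both transforms for a given source
[source::/var/log/acme/*.log]
TRANSFORMS-set_sourcetype = set_sourcetype_acme_firewall, set_sourcetype_acme_vpn
```

When patterns do overlap, list them from most specific to least specific, as noted above.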

Extracting fields

Why field extractions are important

Field extractions are important for data normalization and advanced reporting. Normalization requires that the fields be properly extracted so that the name or value can be looked up and replaced with a standardized value. Additionally, advanced reporting requires that the fields be normalized for display in charts, tables, and graphs.

How to configure field extractions

Below are the steps necessary to configure field extractions:

- Analyze the logs to import and determine how the events are formatted (do they contain name/value pairs, are they separated by commas, and so on).

- Create regular expressions that can extract the data (these regular expressions will be used in the next step).

- Create field transformations (using the regular expression created in the previous step) to extract the data. Field transformations can be created using the field transformation editor in Enterprise Security (Settings > Fields > Field transformations > Add New) or by directly editing the transforms.conf under the respective application (for example, $SPLUNK_HOME$/etc/apps/TA-nessus/local/transforms.conf).

- Create the field extraction in order to apply the transformation to the data. Field extractions can be created using the extraction editor in Enterprise Security (Settings > Fields > Field extractions > Add New) or by creating REPORT statements in props.conf.
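For the file-based route, a field transformation and the REPORT statement that applies it could be sketched as follows. The stanza name, source type, and regular expression are assumptions; they presume events that contain text such as `src=10.0.0.1 dst=10.0.0.2`.

```ini
# transforms.conf: the field transformation (hypothetical name and pattern)
[acme_src_dest]
REGEX = src=(\S+)\s+dst=(\S+)
FORMAT = src::$1 dest::$2

# props.conf: the field extraction that applies the transformation
[acme:firewall]
REPORT-acme_src_dest = acme_src_dest
```

Here the vendor's `dst` value is written to a field named `dest`, so the name is normalized at extraction time rather than in a later lookup.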

Normalization

Events from different products and vendors are formatted in different ways, even if the events are semantically equivalent. The Splunk App for Enterprise Security's reports and correlation searches are designed to present a unified view of security across heterogeneous vendor data formats.

Traditional approaches normalize the data into a common schema at the time of data collection. Splunk instead relies on search-time mappings to a common set of field names and tags, which can be defined at any time after the data has been captured, indexed, and made available for ad hoc search. By normalizing with late-binding searches instead of index-time parsing, the Splunk App for Enterprise Security improves flexibility and reduces the risk of data loss.

Refer to the Enterprise Security Data Source Integration Manual for more information about add-ons.

How lookups are used to normalize IT data

Lookups are used to normalize event data by replacing field names or values with standardized names and values. Lookups can replace field values (such as replacing "severity=med" with "severity=medium") or field names (such as replacing "sev=high" with "severity=high"). The objective of normalization is to use the same names and values between equivalent events from different sources or vendors.

For example, NetScreen firewalls indicate a dropped packet with an action of Drop while a Cisco firewall may indicate the same event with an action of Deny. Additional firewall vendors may use "dropped", "block", "blocked", and so on.

The CIM requires that the action field for firewalls simply be either allowed or blocked. Normalizing this field greatly simplifies the ability to report on all firewall data (as opposed to reporting on it separately by vendor).

How to use lookups

Follow the steps below to configure lookups:

- Review the "Use default fields" information in the Knowledge Manager Manual and determine what fields are necessary for the type of data you want to import.

- Identify the locations of equivalent fields in the data to import. Make sure that the fields you are going to use for the lookup are properly extracted. For example, it may be necessary to extract the field "action=Deny" as "orig_action=Deny" and then perform a list lookup to create a new field "action" with the normalized value (such as "action=blocked").

- Create the CSV lookup file that maps the original value to the value necessary per the Common Information Model. Upload the lookup through Home > App Configuration > Lists and Lookups in Enterprise Security or by uploading to the relevant application directory directly on the file system (for example, $SPLUNK_HOME$/etc/apps/TA-acme/lookups/actions.csv).

- Create the lookup table definition using the configuration panel in Enterprise Security (Home > App Configuration > Lists and Lookups > Add New) or by editing transforms.conf.

- Create lookup statements using the Enterprise Security configuration panel (Home > App Configuration > Lists and Lookups > Add New) or by editing props.conf. Below is the structure of the lookup statement:
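The fragment below paraphrases the general form of a LOOKUP statement from the props.conf specification and then sketches a worked case for the firewall action example used earlier. The lookup name, source type, and CSV rows are hypothetical; only the original values Drop, Deny, dropped, and block come from the text above.

```ini
# General form (paraphrased from the props.conf specification):
# LOOKUP-<class> = <lookup_definition> <match_field> OUTPUT|OUTPUTNEW <output_field>

# lookups/actions.csv (hypothetical contents):
#   orig_action,action
#   Drop,blocked
#   Deny,blocked
#   dropped,blocked
#   block,blocked

# transforms.conf: the lookup table definition
[acme_action_lookup]
filename = actions.csv

# props.conf: the lookup statement for a hypothetical source type
[acme:firewall]
LOOKUP-acme_action = acme_action_lookup orig_action OUTPUTNEW action
```

This assumes the vendor's original value was extracted as `orig_action`, as described in the steps above, so that the normalized value can be written to `action`.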

Note: OUTPUTNEW causes the lookup to be applied only if none of the output fields already exist; use OUTPUT to overwrite existing values. Additional details about the LOOKUP operation can be found in the Splunk "props conf example" documentation.

Refer to the "About lookups" topic in the core Splunk product documentation for more information.

Tags

Why tagging is important

Tagging categorizes events so you can search for all related events regardless of source. For example, web applications, VPN servers, and email servers all have authentication events, yet the authentication events for each type of device are considerably different. Tagging all of the authentication-related events appropriately makes them much easier to find and allows correlation searches to use the events automatically.

How to create tags

Tags are defined using tags.conf and eventtypes.conf (for example, $SPLUNK_HOME$/etc/apps/TA-nessus/default/tags.conf and $SPLUNK_HOME$/etc/apps/TA-nessus/default/eventtypes.conf respectively). The event types are used to identify the type of event and the tags in tags.conf key off of the event type to assign the tag.

To create a new tag, follow the steps below:

- Review the macro and tag reference in Enterprise Security (Resources > Common Information Model > Macro and Tag Reference) to determine what tags are necessary per the Common Information Model.

- Create the appropriate event types using the Event types manager in Enterprise Security (Resources > Common Information Model > Eventtype and Tag Reference) or by editing eventtypes.conf directly.

- Create the appropriate tags in the Settings menu (Settings > Tags > List by tag name > Add New) or by editing tags.conf directly.
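When editing the files directly, an event type and the tag keyed off it could be sketched as follows. The event type name, the search string, and the source type are assumptions; consult the tag reference for the tags a given kind of data actually requires.

```ini
# eventtypes.conf: identify the type of event (hypothetical name and search)
[acme_authentication]
search = sourcetype=acme:vpn (action=success OR action=failure)

# tags.conf: assign the tag to events matching that event type
[eventtype=acme_authentication]
authentication = enabled
```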


This documentation applies to the following versions of Splunk® Enterprise Security: 3.1, 3.1.1, 3.2, 3.2.1, 3.2.2, 3.3.0, 3.3.1, 3.3.2, 3.3.3
