Using the fit and apply commands

The Splunk Machine Learning Toolkit (MLTK) includes several custom machine learning search commands. These ML-SPL commands implement classic machine learning and statistical learning tasks. You can use these custom search commands on any Splunk platform instance where MLTK is installed.

The fit and apply commands train a machine learning model based on the selected algorithm and apply that model to search results. At a high level, the fit and apply commands operate as follows:

- The fit command is used to produce a machine learning model based on the behavior of a set of events.

- The fit command applies the machine learning model to the current set of search results in the search pipeline.

- The apply command is used to apply the machine learning model that was learned using the fit command.

- The apply command repeats a selection of the fit command steps.

The fit and apply commands work on relative searches with relative time ranges, but will not complete on real-time searches.

Prerequisite

Before training your model, your data may require preprocessing. To learn about data preprocessing options, see Preparing your data for machine learning and Preprocessing your data using MLTK Assistants.

Steps of the fit command

The goal in the following example of fit in action is to predict the value of field_A based on the available data in the dataset. A prediction output is just one example of a machine learning outcome using the fit and apply commands.

The Splunk Machine Learning Toolkit performs the following steps when running the fit command:

- Search results pull into memory.

- Transform search results using data preparation actions:

- Discard any fields that are null throughout all the events.

- Discard non-numeric fields with more than (>) 100 distinct values.

- Discard events with any null fields.

- Convert non-numeric fields into dummy variables using one-hot encoding.

- Convert the prepared data into a numeric matrix and run the specified algorithm to create a model.

- Apply the model to the prepared data and produce new columns that display the prediction.

- The learned model is encoded and saved as a knowledge object.

The fit command modifies the model. The command is considered risky because running it can cause performance issues. As a result, this command triggers SPL safeguards. To learn more, see Search commands for machine learning safeguards.

Search results pull into memory

When you run a search, the fit command pulls the search results into memory, creates a copy of the search results, and parses the search results into Pandas DataFrame format. The originally ingested data is not changed.

Transform search results using data preparation actions

The data must be properly prepared to be suitable for machine learning and for running through the selected algorithm. The following actions all take place on the search results copy.

Discard any fields that are null throughout all the events

The fit command discards fields that contain no values.

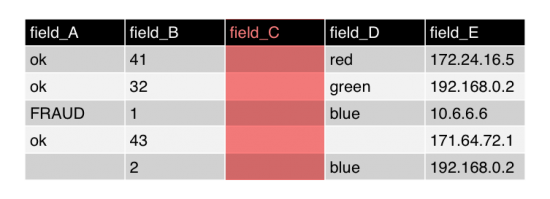

The following example shows a simplified visual representation of the search results. In this example, the fit command looks for incidents of fraud within the dataset. The column labeled field_C is highlighted for removal because there are no values in this field.

If you do not want null fields to be removed from the search results you must change your search. For example, to replace the null values with 0 in the results for field_C, use the SPL fillnull command. You must specify the fillnull command before the fit command, as shown in the following search example:

... | fillnull field_C | fit LogisticRegression field_A from field_*
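The following is a minimal sketch of this step in plain Python, not MLTK's actual implementation. It assumes search results are represented as a list of dictionaries in which a null field holds `None`. The function name `drop_all_null_fields` is hypothetical.

```python
def drop_all_null_fields(events):
    """Return copies of the events without fields that are null in every event."""
    # Collect every field name that appears in any event.
    all_fields = {name for event in events for name in event}
    # A field is kept if at least one event holds a non-null value for it.
    kept = {
        name for name in all_fields
        if any(event.get(name) is not None for event in events)
    }
    # Build new dictionaries so the original events are left unchanged.
    return [{k: v for k, v in event.items() if k in kept} for event in events]


events = [
    {"field_A": 1, "field_B": 20.5, "field_C": None},
    {"field_A": 0, "field_B": 31.0, "field_C": None},
]
prepared = drop_all_null_fields(events)
```

In this sketch, field_C is removed from the copy because it has no values, while the original list of events keeps it.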

Discard non-numeric fields with more than (>) 100 distinct values

The fit command discards non-numeric fields if the fields have more than 100 distinct values. In machine learning, many algorithms do not perform well with high-cardinality fields, because every unique, non-numeric entry in a field becomes an independent feature. A high-cardinality field can lead to an explosion in feature space very quickly.

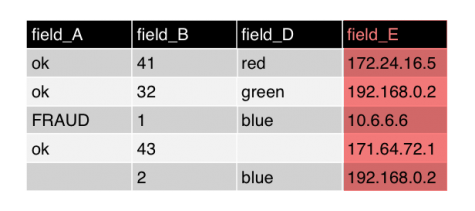

In MLTK, IP addresses are interpreted as non-numeric or string values. In this example, none of the non-numeric fields has more than 100 distinct values, so no action is taken. Had the search results included more than 100 distinct Internet Protocol (IP) addresses in field_E, that field would qualify as high-cardinality and be discarded.
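The cardinality check can be sketched in plain Python as follows. This is an illustration under stated assumptions, not MLTK's code: events are dictionaries, the helper names are hypothetical, and the `max_distinct` parameter stands in for the default limit of 100.

```python
def is_numeric(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def drop_high_cardinality_fields(events, max_distinct=100):
    """Drop non-numeric fields that have more than max_distinct distinct values."""
    fields = {name for event in events for name in event}
    discarded = set()
    for name in fields:
        values = [e[name] for e in events if e.get(name) is not None]
        has_non_numeric = any(not is_numeric(v) for v in values)
        if has_non_numeric and len(set(values)) > max_distinct:
            discarded.add(name)
    return [{k: v for k, v in e.items() if k not in discarded} for e in events]


# 150 events, each with a distinct IP address string in field_E.
events = [{"field_B": i, "field_E": f"10.0.0.{i}"} for i in range(150)]
prepared = drop_high_cardinality_fields(events)
```

Here field_E is discarded because it holds 150 distinct strings, while field_B is kept even though it also has 150 distinct values, because those values are numeric.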

An alternative to discarding fields is to use the values to generate a usable feature set. For example, by using SPL commands such as streamstats or eventstats, you can calculate the number of times an IP address occurs in your search results. You must generate these calculations in your search before the fit command. In this scenario the high-cardinality field is removed by the fit command, but the field that contains the generated calculations remains.
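As a rough illustration of the idea, the following plain-Python sketch derives a numeric occurrence count from a high-cardinality field, similar in spirit to counting by field with eventstats. The function name and the `field_E_count` field name are hypothetical.

```python
from collections import Counter


def add_occurrence_count(events, field, count_field):
    """Add a numeric field holding how often each value of `field` occurs."""
    counts = Counter(e[field] for e in events if e.get(field) is not None)
    # Events where the field is missing or null receive a count of 0.
    return [{**e, count_field: counts.get(e.get(field), 0)} for e in events]


events = [
    {"field_E": "10.0.0.1"},
    {"field_E": "10.0.0.2"},
    {"field_E": "10.0.0.1"},
]
with_counts = add_occurrence_count(events, "field_E", "field_E_count")
```

The generated count is numeric, so it would survive the cardinality check even if the original string field were discarded.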

The limit for distinct values is set to 100 by default. You can change the limit by changing the max_distinct_cat_values attribute in your local copy of the mlspl.conf file. For details on updating the mlspl.conf file attribute, see Configure the fit and apply commands.

Only users with admin permissions can make changes to the mlspl.conf file. To learn more about changing .conf files, see How to edit a configuration file in the Splunk Admin Manual.

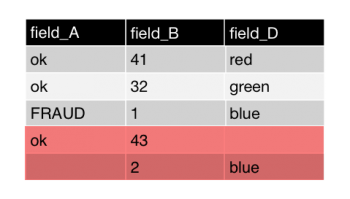

Discard events with any null fields

To train a model, the machine learning algorithm requires that every field in a search result has a value. Any null value means the entire event will not contribute towards the learned model. The fit command has already discarded any fields that are null throughout all of the events, and now the command drops every event (row) that has one or more null fields.

Rather than dropping every row with one or more null fields, you can specify that any search results with null values be included in the learned model. Choose to replace null values if you want the algorithm to learn from an example with a null value and to return an empty collection. Or choose to replace null values if you want the algorithm to learn from an example with a null value and to throw an exception.

To include the results with null values in the model, you must replace the null values before using the fit command in your search. You can replace null values by using SPL commands such as fillnull, filldown, or eval.

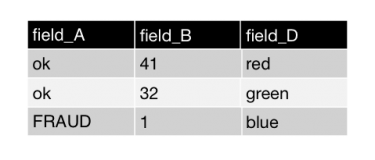

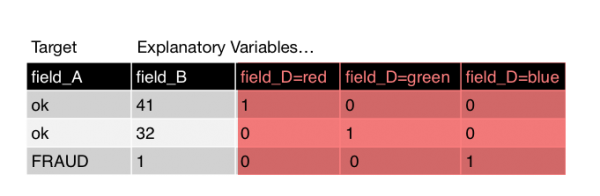
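The difference between dropping events and replacing null values first can be sketched in plain Python. This is an illustration only. It assumes every event carries the same set of fields and that nulls are stored as `None`, and both function names are hypothetical.

```python
def drop_events_with_nulls(events):
    """Keep only the events in which every field has a value."""
    return [e for e in events if all(v is not None for v in e.values())]


def fill_nulls(events, value=0):
    """Replace null values with a constant so that no event is dropped."""
    return [{k: (value if v is None else v) for k, v in e.items()} for e in events]


events = [
    {"field_A": 1, "field_B": 20.5},
    {"field_A": 0, "field_B": None},
]
dropped = drop_events_with_nulls(events)  # the second event is removed
filled = fill_nulls(events)               # both events remain
```

Filling nulls before the drop step keeps every event available for training, at the cost of treating the replacement value as if it were observed data.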

Convert non-numeric fields into dummy variables using one-hot encoding

The fit command converts fields that contain strings or characters into numbers. Many algorithms perform better with numeric data than with categorical data. The fit command converts non-numeric fields to binary indicator variables (1 or 0) using one-hot encoding.

One-hot encoding encodes categorical values as binary values (1 or 0). In this example the strings and characters in field_D get converted to three fields: field_D=red, field_D=green, field_D=blue. The following example shows the results of one-hot encoding. The values for these new fields are either 1 or 0. The value of 1 appears where the color name appeared previously.
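One-hot encoding of a single field can be sketched in plain Python as follows. This is a simplified illustration rather than MLTK's implementation. The function name is hypothetical, and the `field=value` column naming follows the example described above.

```python
def one_hot_encode(events, field):
    """Replace a categorical field with one 0/1 indicator field per category."""
    categories = sorted({e[field] for e in events if e.get(field) is not None})
    encoded = []
    for e in events:
        # Copy every field except the one being encoded.
        row = {k: v for k, v in e.items() if k != field}
        for category in categories:
            row[f"{field}={category}"] = 1 if e.get(field) == category else 0
        encoded.append(row)
    return encoded


events = [
    {"field_B": 1.0, "field_D": "red"},
    {"field_B": 2.0, "field_D": "green"},
    {"field_B": 3.0, "field_D": "blue"},
]
encoded = one_hot_encode(events, "field_D")
```

Each event ends up with exactly one indicator set to 1, in the column that matches its original color.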

If you want more than 100 values per field, you can use one-hot encoding with SPL commands before using the fit command. In the following example, SPL is used to code search results without limiting values to 100 values per field:

| eval {field_D}=1 | fillnull value=0

Convert the prepared data into a numeric matrix and run the specified algorithm to create a model

The data is now in a clean, numeric matrix that's ready to be processed by the selected algorithm and trained to become the machine learning model. A temporary model is created in memory.

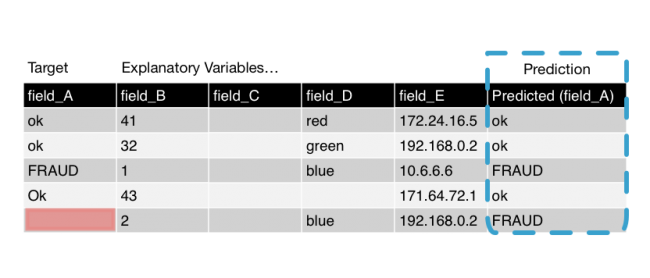
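As an illustration of what a numeric matrix means here, the following plain-Python sketch separates the target field from the feature fields and lays the features out in a fixed column order. It assumes the events have already been through the preparation steps, so every field is numeric and present in every event. The function name `to_matrix` is hypothetical.

```python
def to_matrix(events, target):
    """Split prepared events into a feature matrix X and a target vector y."""
    # Fix the column order once so that every row lines up with the same features.
    feature_names = sorted({k for e in events for k in e if k != target})
    X = [[float(e[name]) for name in feature_names] for e in events]
    y = [float(e[target]) for e in events]
    return feature_names, X, y


events = [
    {"field_A": 1, "field_B": 20.5, "field_D=red": 1, "field_D=blue": 0},
    {"field_A": 0, "field_B": 31.0, "field_D=red": 0, "field_D=blue": 1},
]
feature_names, X, y = to_matrix(events, "field_A")
```

Keeping the list of feature names alongside the matrix matters later, because the same column order has to be reproduced when the model is applied to new data.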

Apply the model to the prepared data and produce new columns that display the prediction

The fit command applies the temporary model to the prepared data. In this example, the model is applied to each search result to predict values, including the search results with null values. The fit command appends one or more columns to the results. The appended search results are then returned to the search pipeline.

The following image shows the original search results with the appended column. The name of the appended column is Predicted (field_A). This field contains predicted values for all of the results. In this example, although there is an empty field in our target column, a predicted result still generates. This works because the predicted value is generated from all the other available fields, not from the target field value.

The learned model is encoded and saved as a knowledge object

When the chosen algorithm supports saved models, and the into clause is included in the fit command, the learned model is saved as a knowledge object.

When the temporary model file is saved, it becomes a permanent model file. These permanent model files are sometimes referred to as learned models or encoded lookups. The learned model is saved on disk. The model follows all of the Splunk knowledge object rules, including permissions and bundle replication.

If the algorithm does not support saved models, or the into clause is not included, the temporary model is deleted.

Steps of the apply command

The goal in the following example of apply in action is to predict the value of field_A based on the available data in the dataset. A prediction output is just one example of a machine learning outcome using the fit and apply commands.

The apply command goes through a series of steps to prepare new data in the same way that the training data was prepared during fit. The apply command generally runs on a small slice of data that is different from the data used to train the model with the fit command. The apply command generates new insight columns.

Coefficients created through the fit command and the resulting model artifact are already computed and saved, making the apply command fast to run. You can think of apply like a streaming command that's applied to data.
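To illustrate why applying precomputed coefficients is fast, the following plain-Python sketch scores events one at a time against a stored logistic model. The model dictionary layout, the coefficient values, and the output column name are assumptions made for illustration and do not reflect how MLTK stores or names anything.

```python
import math


def apply_model(events, model):
    """Yield each event with an added prediction column, one event at a time."""
    column = f"predicted({model['target']})"
    for e in events:
        # A weighted sum of the features plus the intercept; no training happens here.
        score = model["intercept"] + sum(
            coef * float(e.get(name, 0))
            for name, coef in model["coefficients"].items()
        )
        probability = 1.0 / (1.0 + math.exp(-score))
        yield {**e, column: 1 if probability >= 0.5 else 0}


# Hypothetical coefficients standing in for a previously learned model.
model = {"target": "field_A", "intercept": -1.0, "coefficients": {"field_B": 0.5}}
results = list(apply_model([{"field_B": 4.0}, {"field_B": 1.0}], model))
```

Each event is scored independently with a handful of multiplications, which is why this kind of operation behaves much like a streaming step.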

The Machine Learning Toolkit performs the following steps when running the apply command:

- Load the learned model.

- Transform search results using data preparation actions:

- Discard any fields that are null throughout all the events.

- Discard non-numeric fields with more than (>) 100 distinct values.

- Convert non-numeric fields into dummy variables using one-hot encoding.

- Discard dummy variables that are not present in the learned model.

- Replace missing dummy variables with zeros.

- Convert the prepared data into a numeric matrix.

- Apply the model to the prepared data and produce new columns that display the prediction.

Load the learned model

The learned model specified by the search command is loaded in memory. Normal knowledge object permission parameters apply. The following examples show the apply command loading the learned model:

...| apply temp_model

...| apply user_behavior_clusters

Transform search results using data preparation actions

The data must be properly prepared to be suitable for machine learning and for running through the selected algorithm. The following actions all take place on the search results copy.

Discard any fields that are null throughout all the events

The apply command discards fields that contain no values.

Discard non-numeric fields with more than (>) 100 distinct values

The apply command discards non-numeric fields if the fields have more than 100 distinct values.

The limit for distinct values is set to 100 by default. You can change the limit by changing the max_distinct_cat_values attribute in your local copy of the mlspl.conf file. For details on updating the mlspl.conf attributes, see Configure algorithm performance costs.

Only users with admin permissions can make changes to the mlspl.conf file. To learn more about changing .conf files, see How to edit a configuration file in the Splunk Admin Manual.

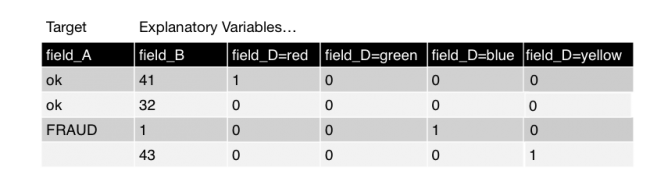

Convert non-numeric fields into dummy variables using one-hot encoding

The apply command converts fields that contain strings or characters into numbers. Many algorithms perform better with numeric data than with categorical data. The apply command converts non-numeric fields to binary indicator variables (1 or 0) using one-hot encoding.

When converting categorical variables, a new value might come up in the data. In this example, there is a new value for the color yellow. This new data requires the one-hot encoding step, which converts column D into a binary value (1 or 0). In the graphic below, there is a new column for field_D=yellow.

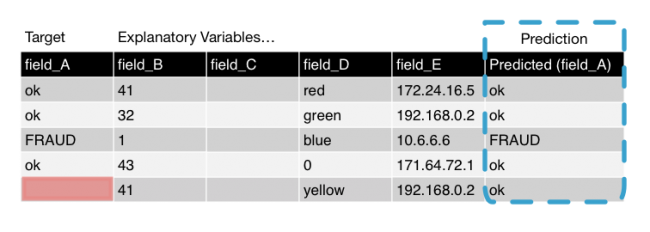

Discard dummy variables that are not present in the learned model

The apply command removes data that is not part of the learned (saved) model. The data for the color yellow did not appear during the fit process. As such, the column created in the convert non-numeric fields step is discarded.
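A minimal plain-Python sketch of this step follows. It assumes the learned model records the list of columns it was trained on. The `model_columns` list and the function name are hypothetical.

```python
def drop_unknown_columns(events, model_columns):
    """Remove columns that the learned model did not see during fit."""
    known = set(model_columns)
    return [{k: v for k, v in e.items() if k in known} for e in events]


# Columns assumed to have been recorded when the model was trained.
model_columns = ["field_B", "field_D=red", "field_D=green", "field_D=blue"]
events = [{"field_B": 2.0, "field_D=yellow": 1, "field_D=red": 0}]
aligned = drop_unknown_columns(events, model_columns)
```

The field_D=yellow column is removed because the model has no coefficient or rule that could make use of it.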

Replace missing dummy variables with zeros

Any results with missing dummy variables are automatically filled with the value of 0 at this step. Replacing missing dummy variables with 0 is a standard machine learning practice that is required for the algorithm to be applied.
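The zero-filling step can be sketched in plain Python as follows. As before, the `model_columns` list and the function name are hypothetical, and the sketch only illustrates the idea.

```python
def add_missing_columns(events, model_columns):
    """Add any column the model expects but the event lacks, filled with 0."""
    defaults = {name: 0 for name in model_columns}
    # Values already present in the event take precedence over the defaults.
    return [{**defaults, **e} for e in events]


# Columns assumed to have been recorded when the model was trained.
model_columns = ["field_B", "field_D=red", "field_D=green", "field_D=blue"]
events = [{"field_B": 2.0, "field_D=red": 1}]
completed = add_missing_columns(events, model_columns)
```

After this step every event has a value for every column the model expects, so the data can be laid out as a matrix with the same shape used during training.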

Convert the prepared data into a numeric matrix

The data is now in a clean, numeric matrix. The model file is applied to this matrix and the results are calculated.

Apply the model to the prepared data and produce new columns that display the prediction

The apply command applies the model to the prepared data, appends the result columns to the search results, and returns the results to the search pipeline. In this example, although there is an empty field in our target column, a predicted result still generates. This works because the predicted value is generated from all the other available fields, not from the target field value.

See also

See the following resources for additional information:

- To learn about the other ML-SPL commands, see Search commands for machine learning.

- To learn about limiting access to ML-SPL commands, see Search commands for machine learning permissions.

- To learn about the available algorithms in MLTK, see Algorithms in the Splunk Machine Learning Toolkit.


This documentation applies to the following versions of Splunk® Machine Learning Toolkit: 4.4.0, 4.4.1, 4.4.2, 4.5.0, 5.0.0, 5.1.0, 5.2.0, 5.2.1, 5.2.2, 5.3.0, 5.3.1, 5.3.3, 5.4.0, 5.4.1, 5.4.2
