Detect Numeric Outliers Experiment Assistant workflow

The Detect Numeric Outliers Experiment Assistant determines values that appear to be extraordinarily higher or lower than the rest of the data. Identified outliers are indicative of interesting, unusual, and possibly dangerous events.

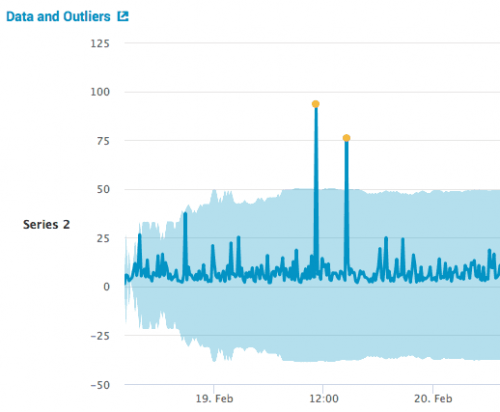

In the following visualization, the yellow dots indicate outliers.

Available algorithms

The Detect Numeric Outliers Assistant is compatible with the following distribution statistics:

- Standard deviation

- Median absolute deviation

- Interquartile range
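As a rough illustration of how the three statistics listed above can each define an outlier envelope, here is a minimal Python sketch. It is not the Assistant's implementation: the function names `outlier_bounds` and `find_outliers` are hypothetical, and the exact formulas (sample standard deviation, unscaled median absolute deviation, Python's default quartile interpolation) are assumptions made for the example. The `multiplier` argument stands in for a threshold multiplier that widens or narrows the envelope.

```python
import statistics


def outlier_bounds(values, method, multiplier):
    """Return the (lower, upper) bounds of an outlier envelope.

    Hypothetical helper for illustration only; the formulas are assumptions,
    not the exact calculations used by the toolkit.
    """
    if method == "stdev":
        # Envelope centered on the mean, sized by the sample standard deviation.
        center = statistics.mean(values)
        spread = statistics.stdev(values)
        return center - multiplier * spread, center + multiplier * spread
    if method == "mad":
        # Envelope centered on the median, sized by the median absolute deviation.
        center = statistics.median(values)
        spread = statistics.median([abs(v - center) for v in values])
        return center - multiplier * spread, center + multiplier * spread
    if method == "iqr":
        # Envelope extends from the first and third quartiles by a multiple of the IQR.
        q1, _, q3 = statistics.quantiles(values, n=4)
        spread = q3 - q1
        return q1 - multiplier * spread, q3 + multiplier * spread
    raise ValueError(f"unknown method: {method}")


def find_outliers(values, method, multiplier):
    """Return the values that fall outside the envelope."""
    lower, upper = outlier_bounds(values, method, multiplier)
    return [v for v in values if v < lower or v > upper]


readings = [10, 12, 11, 13, 12, 11, 10, 12, 13, 50]
for method, multiplier in [("stdev", 2.0), ("mad", 3.0), ("iqr", 1.5)]:
    bounds = outlier_bounds(readings, method, multiplier)
    print(method, bounds, find_outliers(readings, method, multiplier))
```

With these sample readings, the standard deviation method flags 50 at a multiplier of 2 but not at a multiplier of 3, because the extreme value itself inflates both the mean and the standard deviation. The median-based and quartile-based envelopes are much less affected by that single value.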

Create an Experiment to detect numeric outliers

This Assistant is restricted to one numeric data field.

Input the data and select the parameters you want to investigate. When a situation violates the expectations for a parameter, it results in an outlier.

Assistant workflow

Follow these steps to create a Detect Numeric Outliers Experiment.

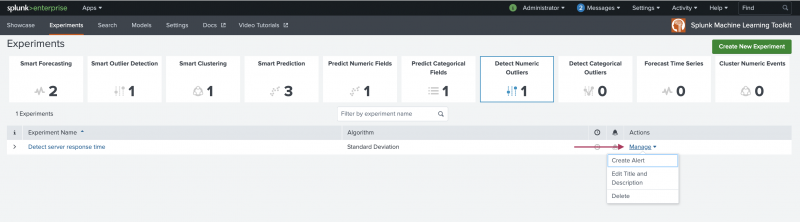

- From the MLTK navigation bar, click Experiments.

- If this is the first Experiment in MLTK, you will land on a display screen of all six Assistants. Select the Detect Numeric Outliers block.

- If you have at least one Experiment in MLTK, you will land on a list view of Experiments. Click the Create New Experiment button.

- Fill in an Experiment Title, and add a description. Both the title and description can be edited later if needed.

- Click Create.

- Run a search, and be sure to select a date range.

- In Field to analyze, select a numeric field.

  The list populates every time you run a search.

- In Threshold method, select a method.

  In picking a method, consider both the distribution of the data and how the method impacts outlier detection. Use the following table to guide your decision.

  | Method | Application |
  | --- | --- |
  | Standard Deviation | This method is appropriate if your data exhibits a normal distribution. Because the standard deviation method centers on the mean, it is more impacted by outliers. |
  | Median Absolute Deviation | This method applies a stricter interpretation of outliers than standard deviation because the measurement centers on the median and uses Median Absolute Deviation (MAD) instead of standard deviation. |
  | Interquartile Range | This method is appropriate when your data exhibits an asymmetric distribution. Instead of centering the measurement on a mean or median, it uses quartiles to determine whether a value is an outlier. |

- Specify a value for the Threshold multiplier.

  The larger the number, the larger the outlier envelope, and therefore the fewer the outliers.

- (Optional) In the Sliding window field, specify the number of values to use to compute each slice of the outlier envelope.

  A sliding window is useful if the distribution of your data changes frequently. If you do not specify a sliding window, the Assistant uses the whole dataset, which results in an outlier envelope of uniform size.

- Select Include current point to include the current point in the calculations before assessing whether it is an outlier.

- (Optional) In Fields to split by, select up to 5 fields.

  In the visualizations, the data points are grouped by field or, if more than one split by field is specified, by the combination of the values of the fields. It is better to split by a categorical field than a numeric field. For example, if you detect outliers in grocery store purchases and analyze the quantity field, you could split by store_ID to group the quantity data points by store.

- (Optional) Add notes to this Experiment. Use this free form block of text to track the selections made in the Experiment parameter fields. Refer back to notes to review which parameter combinations yield the best results.

- Click Detect Outliers. The Experiment is now in a Draft state.

Draft versions allow you to alter settings without committing or overwriting a saved Experiment. An Experiment is not stored to Splunk until it is saved.

The following table explains the differences between a draft and saved Experiment:

| Action | Draft Experiment | Saved Experiment |
| --- | --- | --- |
| Create new record in Experiment history | Yes | No |
| Run Experiment search jobs | Yes | No |
| (As applicable) Save and update Experiment model | No | Yes |
| (As applicable) Update all Experiment alerts | No | Yes |
| (As applicable) Update Experiment scheduled trainings | No | Yes |
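To make the Sliding window and Include current point options from the steps above more concrete, the following Python sketch computes a separate envelope for each data point. It is only an illustration under stated assumptions: the function name `sliding_envelope` is hypothetical, the window is assumed to trail the current point, and the envelope is assumed to be the mean plus or minus a multiple of the sample standard deviation. The Assistant's own calculation may differ.

```python
import statistics


def sliding_envelope(values, window, multiplier=2.0, include_current=True):
    """Return a list of (value, lower, upper, is_outlier) tuples.

    Hypothetical sketch: each envelope slice is computed from a trailing
    window of `window` values. Points with fewer than two values available
    get no envelope and are never flagged.
    """
    results = []
    for i, value in enumerate(values):
        # Decide whether the window ends at the current point or just before it.
        end = i + 1 if include_current else i
        start = max(0, end - window)
        chunk = values[start:end]
        if len(chunk) < 2:
            results.append((value, None, None, False))
            continue
        center = statistics.mean(chunk)
        spread = statistics.stdev(chunk)
        lower = center - multiplier * spread
        upper = center + multiplier * spread
        results.append((value, lower, upper, value < lower or value > upper))
    return results


series = [10, 11, 10, 11, 10, 30, 10, 11]

excluded = sliding_envelope(series, window=4, include_current=False)
included = sliding_envelope(series, window=4, include_current=True)

print([i for i, row in enumerate(excluded) if row[3]])  # indexes flagged when the current point is excluded
print([i for i, row in enumerate(included) if row[3]])  # indexes flagged when the current point is included
```

In this sample series, the value 30 is flagged only when the current point is excluded from the window. When it is included, the spike widens its own envelope enough to fall inside it, which shows why this setting can change the number of outliers reported.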

Interpret and validate results

After you detect outliers, review your results in the tables and visualizations. Results commonly have a few outliers. You can use the following methods to better evaluate the Experiment results:

| Charts and Results | Applications |
| --- | --- |
| Data and Outliers | Outliers, represented by yellow dots, are data points that fall outside of the light blue envelope. A chart to the right of the graph reports the total number of outliers. Hover over a yellow dot to see the value and quantity of an outlier. To learn more about the nature of the outlier, click it to drill down to the search query to see the base of the data point. |
| Split by fields | If you selected a field to split by, then the Data and Outliers chart displays up to 10 values that you can add or remove from the chart. The chart groups the data points based on split by field values. For example, if there are 3 split by field values, the chart is broken out into 3 separate charts, one for each split value. The number of outliers for each split by field value is displayed to the right of the chart. |
| Outlier Count Over Time chart | This chart plots the outliers over time, and only appears if you use time series data. If you specify more than one split, the chart shows the outlier count for each field value. To see which values are too high or too low, check the box for Split outliers above and below threshold. If you split by field, each field contains a value for outliers above and below the threshold. |
| Data Distribution histogram | This histogram shows the distribution, and displays the number of data points within the threshold (the light blue area) and the number of data points outside the threshold. |
| Data and Outliers table | This table shows each outlier and its corresponding value, as well as the lists of values for any split by field. |
| Outlier Split Value Distribution | If you specified one or more split by fields, this table displays the number of outliers for each split value or combination of split values. |
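The per-split counts described above, including the separation of outliers above and below the threshold, can be approximated outside the Assistant with a short Python sketch. The function name `outlier_counts_by_split` is hypothetical, the sample rows are made up for illustration, and the sketch assumes that each split value gets its own envelope based on the mean and sample standard deviation. The field names `quantity` and `store_ID` follow the grocery store example used earlier in this topic.

```python
import statistics
from collections import defaultdict


def outlier_counts_by_split(rows, value_field, split_field, multiplier=2.0):
    """Count outliers above and below the envelope for each split value.

    Hypothetical sketch: assumes one envelope per split value, computed as
    mean +/- multiplier * sample standard deviation of that group.
    """
    groups = defaultdict(list)
    for row in rows:
        groups[row[split_field]].append(row[value_field])

    counts = {}
    for key, values in groups.items():
        center = statistics.mean(values)
        # A single-value group has no spread, so nothing can be flagged.
        spread = statistics.stdev(values) if len(values) > 1 else 0.0
        lower = center - multiplier * spread
        upper = center + multiplier * spread
        counts[key] = {
            "above": sum(1 for v in values if v > upper),
            "below": sum(1 for v in values if v < lower),
        }
    return counts


# Made-up sample data: purchase quantities for two stores.
rows = (
    [{"store_ID": "A", "quantity": q} for q in [5, 6, 5, 6, 5, 6, 5, 40]]
    + [{"store_ID": "B", "quantity": q} for q in [20, 21, 20, 21, 20, 21, 20, 1]]
)

print(outlier_counts_by_split(rows, "quantity", "store_ID"))
```

Grouping before computing the envelope matters here: a quantity of 20 is ordinary for store B but would look unusually high next to store A's typical purchases.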

Save the Experiment

Once you are getting valuable results from your Experiment, save it. Saving your Experiment results in the following actions:

- Assistant settings are saved as an Experiment knowledge object.

- The Draft version saves to the Experiment Listings page.

- Any affiliated scheduled trainings and alerts update to synchronize with the search SPL and trigger conditions.

You can load a saved Experiment by clicking the Experiment name.

Deploy the Experiment

Saved Detect Numeric Outliers Experiments include options to manage, but not to publish.

In Experiments built using the Detect Numeric Outliers Assistant, a model is not persisted, so you do not see an option to publish. However, you can achieve the same results as publishing the Experiment by following the steps under Outside the Experiment framework.

Within the Experiment framework

To manage your Experiment, perform the following steps:

- From the MLTK navigation bar, choose Experiments. A list of your saved Experiments populates.

- Click the Manage button available under the Actions column.

The toolkit supports the following Experiment management options:

- Create and manage Experiment-level alerts. Choose from both Splunk platform standard trigger conditions, as well as from Machine Learning Conditions related to the Experiment.

- Edit the title and description of the Experiment.

- Delete an Experiment.

Updating a saved Experiment can affect affiliated alerts. Re-validate your alerts once you complete the changes. For more information about alerts, see Getting started with alerts in the Splunk Enterprise Alerting Manual.

Experiments are always stored under the user's namespace, so changing sharing settings and permissions on Experiments is not supported.

Outside the Experiment framework

- Click Open in Search to generate a New Search tab for this same dataset. This new search opens in a new browser tab, away from the Assistant.

  This search query uses all data, not just the training set. You can adjust the SPL directly and see the results immediately. You can also save the query as a Report, Dashboard Panel, or Alert.

- Click Show SPL to open a new modal window or overlay showing the search query that you used to detect outliers. Copy the SPL to use in other aspects of your Splunk instance.

Learn more

To learn about implementing analytics and data science projects using Splunk's statistics, machine learning, and built-in custom visualization capabilities, see the Splunk Education course Splunk 8.0 for Analytics and Data Science.


This documentation applies to the following versions of Splunk® Machine Learning Toolkit: 5.3.3, 5.4.0, 5.4.1, 5.4.2, 5.5.0, 5.6.0, 5.6.1
