Enrich data with lookups using Ingest Processor

You can enrich your data by adding relevant information using a lookup. A lookup is a knowledge object that matches the field-value combinations in your event data with field-value combinations in a lookup table, and then adds the relevant information from the lookup table to your events. By creating and applying a pipeline that uses a lookup, you can configure Ingest Processor to add more information to the received data before sending that data to a destination.

For example, assume that the following events represent purchases from the fictitious Buttercup Games store:

| date | ip_address | product_id |
|---|---|---|
| 2023-11-22 | 107.3.146.207 | WC-SH-G04 |
| 2023-11-22 | 128.241.220.82 | DC-SG-G02 |
| 2023-11-23 | 194.215.205.19 | FS-SG-G03 |

These events contain the date when the purchase happened, the IP address of the customer who made the purchase, and the product ID of the item that was purchased. If you have a lookup table that maps the product IDs to product names, you can use it in an Ingest Processor pipeline to add the corresponding product names to these events.
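As a rough illustration of what this kind of enrichment does conceptually, the following Python sketch adds a product name to each event by matching on the product ID. It is not how Ingest Processor is implemented. The `PRODUCT_NAMES` table and the `enrich` function are hypothetical names used only for this sketch, and the product names are taken from the example results shown later on this page.

```python
# Hypothetical in-memory lookup table that maps product IDs to product names.
PRODUCT_NAMES = {
    "WC-SH-G04": "World of Cheese",
    "DC-SG-G02": "Dream Crusher",
    "FS-SG-G03": "Final Sequel",
}


def enrich(event, table):
    """Return a copy of the event with product_name added when the ID matches."""
    enriched = dict(event)
    name = table.get(event.get("product_id"))
    if name is not None:
        enriched["product_name"] = name
    return enriched


events = [
    {"date": "2023-11-22", "ip_address": "107.3.146.207", "product_id": "WC-SH-G04"},
    {"date": "2023-11-23", "ip_address": "194.215.205.19", "product_id": "FS-SG-G03"},
]

# Each enriched event keeps its original fields and gains product_name.
enriched_events = [enrich(event, PRODUCT_NAMES) for event in events]
```

The original events are left unchanged, and events whose product ID is not in the table pass through without a product_name field.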

For more information about lookups, see About lookups in the Splunk Cloud Platform Knowledge Manager Manual.

Limitations

Be aware of the following differences in lookup support between the Ingest Processor solution and the Splunk platform:

- Ingest Processor supports CSV and KV Store lookups only. Geospatial and external lookups are not supported.

- The maximum file size for an uploaded lookups dataset in Ingest Processor is 200 MB.

- Ingest Processor parses all lookup data as strings. To match a field from your events with a field from a lookup table, the event field must be a string field.

  For example, an event field like http_status=200 is an integer field and cannot be matched with lookup fields. However, http_status="200" is a string field that can be matched with lookup fields. For more information about data types in SPL2, see Built-in data types in the SPL2 Search Manual.

- After updating lookup information in Splunk Cloud Platform, you must manually refresh the scpbridge connection to update the lookup datasets in the Ingest Processor tenant. For more information, see Update lookup datasets in this topic.
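The string-only matching limitation described above can be pictured with a short Python sketch. This is only an analogy, assuming a simple equality comparison. The `lookup_rows` table, its `status` and `status_description` fields, and the `find_match` function are hypothetical and do not come from Ingest Processor.

```python
# Hypothetical lookup table. Every value is a string, mirroring how
# Ingest Processor parses all lookup data as strings.
lookup_rows = [
    {"status": "200", "status_description": "OK"},
    {"status": "404", "status_description": "Not Found"},
]


def find_match(event_value, rows, field="status"):
    """Return the first row whose field equals the event value, or None."""
    for row in rows:
        if row[field] == event_value:
            return row
    return None


# An integer event value never equals the string stored in the table.
integer_result = find_match(200, lookup_rows)

# Converting the value to a string first allows the match to succeed.
string_result = find_match(str(200), lookup_rows)
```

In this sketch, `integer_result` is `None` while `string_result` is the row for "200", which is the same reason an integer event field must be converted to a string field before it can be matched.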

Prerequisites

Before starting to configure lookups for Ingest Processor, you must have a lookup table stored in one of the following ways:

- In a CSV file. Make sure that the file meets the restrictions described in About the CSV files in the Splunk Cloud Platform Knowledge Manager Manual.

- In a KV Store collection on the Splunk Cloud Platform deployment that is pair-connected with your Ingest Processor tenant. Make sure that the KV Store collection meets the configuration requirements described in Special KV Store collection configuration for federated search in the Splunk Cloud Platform Knowledge Manager Manual.

The pair-connected Splunk Cloud Platform deployment is the deployment that was connected to the Ingest Processor service during the first-time set up process for the Ingest Processor solution. For more information, see First-time setup instructions for the Ingest Processor solution.

The instructions on this page refer to an example scenario using a lookup dataset named prices.csv. If you'd like to follow along with these example configurations, then complete these steps to get the prices.csv file:

- Download the Prices.csv.zip file.

- Uncompress the Prices.csv.zip file. There is only one file in the ZIP file, prices.csv.
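Before uploading a CSV file as a lookup table, it can help to check it against the 200 MB limit mentioned in the Limitations section and to confirm the column names. The following Python sketch is one possible local check, not a Splunk tool. The `check_lookup_file` function is hypothetical, and the sketch assumes that 1 MB means 1024 × 1024 bytes, which this page does not specify.

```python
import csv
import os

# Assumption: 1 MB = 1024 * 1024 bytes. The page only states "200 MB".
MAX_LOOKUP_BYTES = 200 * 1024 * 1024


def check_lookup_file(path):
    """Report whether a CSV file fits the size limit and list its header columns."""
    size_bytes = os.path.getsize(path)
    with open(path, newline="", encoding="utf-8") as csv_file:
        # The first row of a CSV lookup file holds the field names.
        header = next(csv.reader(csv_file), [])
    return {
        "size_bytes": size_bytes,
        "size_ok": size_bytes <= MAX_LOOKUP_BYTES,
        "columns": header,
    }
```

For example, calling `check_lookup_file("prices.csv")` on the extracted file returns its size and its column names, which you can compare with the field names that you plan to match in the pipeline.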

After meeting these prerequisites, perform the following steps to configure Ingest Processor to enrich incoming event data using lookups:

- Create a lookup in the pair-connected Splunk Cloud Platform deployment.

- Confirm the availability of the lookup dataset.

- Create a pipeline.

- Configure your pipeline to enrich event data using a lookup.

- Save and apply your pipeline.

Create a lookup in the pair-connected Splunk Cloud Platform deployment

Start by creating your lookup in the Splunk Cloud Platform deployment that is pair-connected with your Ingest Processor tenant. Doing this makes your lookup available as a lookup dataset in the tenant, which you can then import and use in Ingest Processor pipelines.

The Ingest Processor solution supports CSV lookups and KV Store lookups. To follow along with the example scenario described on this page, create a CSV lookup using the prices.csv file.

Creating CSV lookups for Ingest Processor

For detailed instructions on creating a CSV lookup, see Define a CSV lookup in Splunk Web in the Splunk Cloud Platform Knowledge Manager Manual. When creating a CSV lookup for use in Ingest Processor, do the following:

- In Splunk Cloud Platform, upload the .csv or .csv.gz file containing your lookup table.

- (Optional) If you don't want other users to be able to see all of the contents of your lookup table, you can create a restricted view of the table by creating a lookup definition.

- Update the permissions associated with the CSV file or the lookup definition. The file or definition must be available to all apps, your Splunk Cloud Platform user account, and the service account used in the scpbridge connection that connects the Splunk Cloud Platform deployment with the Ingest Processor tenant.

  - Set the Object should appear in option to All apps (system).

  - Make sure that Read permission for the file or definition is available to a role that is associated with your Splunk Cloud Platform user account.

  - Make sure that Read permission is also available to the role used by the service account. If you used the role name suggested in Create a role for the service account during the initial setup of the Ingest Processor solution, then this role is typically named scp_user.

- Make sure that a role that is associated with your user account and the role used by the service account both have Read permission for the Destination app that is associated with the CSV file or lookup definition.

  - Select Apps, then select Manage Apps.

  - Find the app that your CSV file or lookup definition is associated with, and then select Permissions.

  - Select Read permission for the necessary roles, and then select Save.

Creating KV Store lookups for Ingest Processor

For detailed instructions on creating a KV Store lookup, see Define a KV Store lookup in Splunk Web in the Splunk Cloud Platform Knowledge Manager Manual. When creating a KV Store lookup for use in Ingest Processor, do the following:

- Create a lookup definition for your KV Store collection.

- Update the permissions associated with the lookup definition. The definition must be available to all apps, your Splunk Cloud Platform user account, and the service account used in the scpbridge connection that connects the Splunk Cloud Platform deployment with the Ingest Processor tenant.

  - Set the Object should appear in option to All apps (system).

  - Make sure that Read permission for this definition is available to a role that is associated with your Splunk Cloud Platform user account.

  - Make sure that Read permission is also available to the role used by the service account. If you used the role name suggested in Create a role for the service account during the initial setup of the Ingest Processor solution, then this role is typically named scp_user.

- Make sure that a role that is associated with your user account and the role used by the service account both have Read permission for the Destination app that is associated with the lookup definition.

  - Select Apps, then select Manage Apps.

  - Find the app that your lookup definition is associated with, and then select Permissions.

  - Select Read permission for the necessary roles, and then select Save.

Confirm the availability of the lookup dataset

After creating your lookup in the pair-connected Splunk Cloud Platform deployment, confirm that the lookup is available as a dataset in your Ingest Processor tenant.

- Log in to your Ingest Processor tenant.

- Refresh the scpbridge connection by doing the following:

  - Select the Settings icon and then select System connections.

  - On the scpbridge connection, select the Refresh icon.

- Navigate to the Datasets page and find your lookup dataset. The dataset has the same name as the CSV file or the lookup definition. If the Datasets page does not show your lookup dataset, then there might be a permissions error preventing the scpbridge connection from accessing the dataset. Verify that the role used by the service account for the scpbridge connection has read permission for your lookup table or definition. See Create a lookup in the pair-connected Splunk Cloud Platform deployment on this page for more information.

- Select your lookup dataset, and then select the Open in Search icon.

- In the Search page, select the Run icon.

  If the search results pane displays information from your lookup dataset, then the lookup dataset is available in your tenant and ready to be used in pipelines. If the search results pane displays an error or 0 results, then there might be a permissions error preventing you from fully accessing the lookup dataset. In Splunk Cloud Platform, verify that your user account has read permission for your lookup table or definition. See Create a lookup in the pair-connected Splunk Cloud Platform deployment on this page for more information.

You now have a lookup dataset that you can use to enrich events in an Ingest Processor pipeline. Next, start creating the pipeline.

Create a pipeline

- Navigate to the Pipelines page, then select New pipeline, and then Ingest Processor pipeline.

- On the Get started page, select Blank pipeline, and then Next.

- On the Define your pipeline's partition page, specify a subset of the data received by the Ingest Processor for this pipeline to process. If you want to use the sample data given in step 4 so that you can follow along with the example configurations described in later sections of this page, skip this step. Otherwise, to define a partition, complete these steps:

  - Select the plus icon next to Partition, or select the option that matches how you would like to create your partition in the Suggestions section.

  - In the Field field, specify the event field that you want the partitioning condition to be based on.

  - To specify whether the pipeline includes or excludes the data that meets the criteria, select Keep or Remove.

  - In the Operator field, select an operator for the partitioning condition.

  - In the Value field, enter the value that your partition should filter by to create the subset.

  - Select Apply.

    You can create more conditions for a partition in a pipeline by selecting the plus icon.

  - Once you have defined your partition, select Next.

- (Optional) On the Add sample data page, enter or upload sample data for generating previews that show how your pipeline processes data.

  The sample data must be in the same format as the actual data that you want to process. See Getting sample data for previewing data transformations for more information.

  If you want to follow the configuration examples in the next section, then do the following:

  - Select Paste or upload sample data.

  - Select CSV and then enter the following sample events, which represent three fictitious purchases made from a store website:

        date,ip_address,product_id
        2023-11-22,107.3.146.207,WC-SH-G04
        2023-11-22,128.241.220.82,DC-SG-G02
        2023-11-23,194.215.205.19,FS-SG-G03

  - Select Next to confirm your sample data.

- On the Select a metrics destination page, select the name of the destination that you want to send metrics to.

- (Optional) If you selected Splunk Metrics store as your metrics destination, specify the name of the target metrics index where you want to send your metrics.

- On the Select destination dataset page, select the name of the destination that you want to send data to. Then, do one of the following:

  - If you selected a Splunk platform destination, select Next.

  - If you selected another type of destination, select Done and skip the next step.

- (Optional) If you're sending data to a Splunk platform destination, you can specify a target index:

  - In the Index name field, select the name of the index that you want to send your data to.

  - (Optional) In some cases, incoming data already specifies a target index. If you want your Index name selection to override previous target index settings, then select the Overwrite previously specified target index check box.

  - Select Done.

  Be aware that the destination index is determined by a precedence order of configurations. See How does Ingest Processor know which index to send data to? for more information.

You now have a simple pipeline that receives data and sends that data to a destination. In the next section, you'll configure this pipeline to enrich your data using information from your lookup dataset.

Configure your pipeline to enrich event data using a lookup

Add an Enrich events with lookup action to your pipeline. Configure this action to specify how the pipeline matches field-value combinations in the incoming event data with field-value combinations in a lookup dataset and adds information from that dataset to the events.

- (Optional) Select the Preview Pipeline icon to generate a preview that shows what the sample data looks like when it passes through the pipeline.

  If you used the sample events described in the previous section, then the preview results panel displays the following:

  | date | ip_address | product_id |
  |---|---|---|
  | 2023-11-22 | 107.3.146.207 | WC-SH-G04 |
  | 2023-11-22 | 128.241.220.82 | DC-SG-G02 |
  | 2023-11-23 | 194.215.205.19 | FS-SG-G03 |

- Select the plus icon in the Actions section, and then select Enrich events with lookup.

- Open the Lookup dataset menu, select the lookup dataset that you want to use, and then select Select lookup dataset. For example, if you created a lookup dataset using the prices.csv file, then select prices.csv.

  The Enrich events with lookups dialog box loads the information from your selected lookup dataset.

- In the Match fields area, define one or more pairs of lookup fields and event fields that you want to match. When these matched fields contain identical values, the pipeline adds data from the lookup dataset to the event.

  For example, the product_id field from the sample data and the productId field from the prices.csv lookup dataset both contain product ID values used by the fictitious Buttercup Games store. If you match these fields, then whenever the pipeline receives an event that has WC-SH-G04 as a product_id value, the pipeline updates that event to include data from the lookup dataset row that has WC-SH-G04 as a productId value. To match these fields, configure the following settings in the Match fields area:

  | Option name | Enter or select the following |
  |---|---|
  | Lookup field | productId |
  | Event field | product_id |

- In the Output fields area, select the fields from the lookup dataset that you want to add to your events. To add all the fields from the dataset, select Output all fields. For example, to add product names from the prices.csv lookup dataset to the sample events, select product_name.

- (Optional) Specify what action to take when an incoming event already contains the selected output fields:

  - To replace the data in the event with the data from the lookup dataset, select Overwrite existing values in events. This setting is selected by default.

  - To leave the existing data in the event unchanged, and only fill in empty or missing fields using data from the lookup dataset, deselect Overwrite existing values in events.

  For example, suppose that the pipeline receives an event that erroneously has WC-SH-G04 as a product_id value and Pony Run as a product_name value. According to the prices.csv dataset, the WC-SH-G04 product ID corresponds to the World of Cheese product name. When Overwrite existing values in events is selected, the pipeline changes the product_name value in the event from Pony Run to World of Cheese. When that option is not selected, the pipeline does not change the product_name value in the event.

- To confirm the configuration of your lookups, select Apply.

The pipeline editor adds an import statement and a lookup command to your pipeline.

- The import command imports the lookup dataset into the pipeline so that the pipeline can use it. See Importing datasets into Ingest Processor pipelines for more information.

- The lookup command matches fields from the lookup dataset with fields from the incoming events, and then enriches the events by adding the specified output fields to the event. See lookup command overview in the SPL2 Search Manual for more information.

For example, consider the following pipeline:

    import 'prices.csv' from /envs.splunk.buttercup.lookups
    $pipeline = | from $source | lookup 'prices.csv' productId AS product_id OUTPUT product_name | into $destination;

This pipeline does the following:

- Imports the prices.csv lookup dataset.

- Matches the product_id field in incoming events with the productId field in the prices.csv dataset.

- Adds the corresponding product_name values from the prices.csv dataset into incoming events, overwriting any product_name values that might already exist in the events.

If you preview your pipeline again, the preview results panel displays the following:

| date | ip_address | product_id | product_name |
|---|---|---|---|
| 2023-11-22 | 107.3.146.207 | WC-SH-G04 | World of Cheese |
| 2023-11-22 | 128.241.220.82 | DC-SG-G02 | Dream Crusher |
| 2023-11-23 | 194.215.205.19 | FS-SG-G03 | Final Sequel |
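To make the effect of the Overwrite existing values in events setting more concrete, the following Python sketch mimics the matching and output behavior described in this section. It is a simplified model, not the SPL2 lookup command itself. The `apply_lookup` function and its parameters are hypothetical, and the sketch assumes that only the first matching row is used.

```python
def apply_lookup(event, rows, lookup_field, event_field, output_fields, overwrite=True):
    """Return a copy of the event enriched with output fields from the first matching row."""
    enriched = dict(event)
    for row in rows:
        if row.get(lookup_field) == event.get(event_field):
            for field in output_fields:
                # With overwrite off, only empty or missing fields are filled in.
                if overwrite or not enriched.get(field):
                    enriched[field] = row[field]
            break  # Assumption: only the first matching row is applied.
    return enriched


# A single row modeled on the prices.csv example from this page.
prices = [{"productId": "WC-SH-G04", "product_name": "World of Cheese"}]

# An event that erroneously carries the wrong product name.
event = {"product_id": "WC-SH-G04", "product_name": "Pony Run"}

overwritten = apply_lookup(event, prices, "productId", "product_id", ["product_name"], overwrite=True)
preserved = apply_lookup(event, prices, "productId", "product_id", ["product_name"], overwrite=False)
```

Here `overwritten["product_name"]` becomes World of Cheese, while `preserved["product_name"]` stays Pony Run, matching the two outcomes described for the Overwrite existing values in events option.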

You now have a pipeline that enriches the incoming data with additional information from a lookup dataset. In the next section, you'll save this pipeline and apply it to Ingest Processor.

Save and apply your pipeline

Be aware that when you apply a pipeline that uses a lookup or add a lookup to an applied pipeline, it can take some time for Ingest Processor to download and start using your lookup table. For example, a 200 MB lookup table takes approximately 10 minutes to download.

This download time does not disrupt data processing when you're adding a lookup to a pipeline that is already applied to Ingest Processor. However, when you initially apply a lookup pipeline to Ingest Processor, that pipeline does not start receiving or processing data until after the download is complete.

- To save your pipeline, do the following:

- Select Save pipeline.

- In the Name field, enter a name for your pipeline.

- (Optional) In the Description field, enter a description for your pipeline.

- Select Save.

- In the Apply pipeline prompt, select Yes, apply.

It can take a few minutes for the Ingest Processor service to finish applying your pipeline to an Ingest Processor. If you uploaded a new lookups dataset to the Ingest Processor to use in your pipeline, the Ingest Processor downloads the dataset before applying the pipeline. During this time, the Ingest Processor enters the pending state. To confirm that the process completed successfully, navigate to the Pipelines page. Then, verify that the Applied column for the pipeline contains a The pipeline is applied icon.

The Ingest Processor can now enrich the event data that it receives by adding information from the lookup dataset. For information on how to confirm that your data is being processed and routed as expected, see Verify your Ingest Processor and pipeline configurations.

Update lookup datasets

The information in the lookup datasets comes from the pair-connected Splunk Cloud Platform deployment. To update a lookup dataset that your Ingest Processor is using to enrich data, start by updating the lookup table or definition in Splunk Cloud Platform. Then, synchronize that updated information between Splunk Cloud Platform and the Ingest Processor.

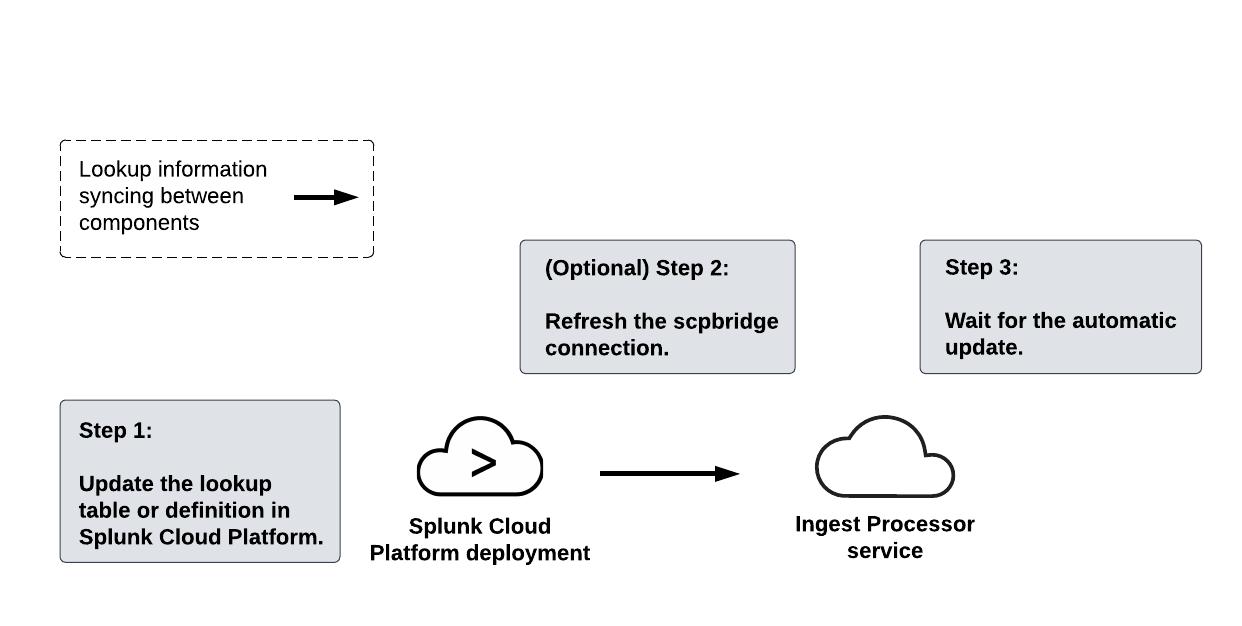

The following diagram summarizes the steps for updating a lookup dataset:

To update a lookup dataset, complete these steps:

- In the pair-connected Splunk Cloud Platform deployment, update the information in the lookup table or definition corresponding to the lookup dataset.

- Send this updated information from the Splunk Cloud Platform deployment to the Ingest Processor service by refreshing the scpbridge connection:

  - Select the Settings icon and then select System connections.

  - On the scpbridge connection, select the Refresh icon.

- Wait for the Ingest Processor service to send the updated information to all the applied pipelines that are configured to use the lookup dataset.

The Ingest Processor service automatically updates the lookup datasets used by applied pipelines every 4 hours. Additionally, if the lookup configuration in the pipeline is modified, then the Ingest Processor will download the updated lookup dataset.

Performance benchmarks for lookups in Ingest Processor

These are benchmarks for expected performance but are not guaranteed.

When you apply a pipeline that uses a lookup or add a lookup to an applied pipeline, it can take some time for Ingest Processor to download and start using the lookup table. It takes approximately 10 minutes to download a 200 MB lookup table.

This download time does not disrupt data processing when you're adding a lookup to a pipeline that is already applied to Ingest Processor. However, when you initially apply a lookup pipeline to Ingest Processor, that pipeline does not start receiving or processing data until after the download is complete.

This benchmark was determined based on performance testing with these configurations:

- A pipeline that uses the rex command to extract 1 event, and then uses the lookup command to match 1 existing field and add 1 new field.

- A lookup table that contains 6 columns and 3 million rows. The file size of this table is 200 MB.

This table was used in performance testing specifically. Ingest Processor has also been tested using lookup tables that contain up to 20 columns.
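For rough planning, the benchmark of approximately 10 minutes for a 200 MB lookup table can be turned into a back-of-the-envelope estimate for smaller tables. The following Python sketch assumes that download time scales linearly with file size, which is an assumption made for this sketch and is not stated or guaranteed by the benchmark. The `estimated_download_minutes` function is hypothetical.

```python
# Values taken from the benchmark described in this section.
BENCHMARK_SIZE_MB = 200
BENCHMARK_MINUTES = 10


def estimated_download_minutes(size_mb):
    """Estimate download time in minutes, assuming linear scaling with file size."""
    if size_mb < 0 or size_mb > BENCHMARK_SIZE_MB:
        # 200 MB is the maximum supported lookup dataset size.
        raise ValueError("size_mb must be between 0 and 200")
    return size_mb * BENCHMARK_MINUTES / BENCHMARK_SIZE_MB


# Under the linear-scaling assumption, a 50 MB table takes about 2.5 minutes.
fifty_mb_estimate = estimated_download_minutes(50)
```

Treat any value from this estimate as a loose approximation, because actual download times depend on conditions that the benchmark does not describe.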


This documentation applies to the following versions of Splunk Cloud Platform™: 9.1.2308, 9.1.2312, 9.2.2403, 9.2.2406, 9.3.2408, 9.3.2411 (latest FedRAMP release)
