Configure CollectD to send data to the Splunk Add-on for Linux

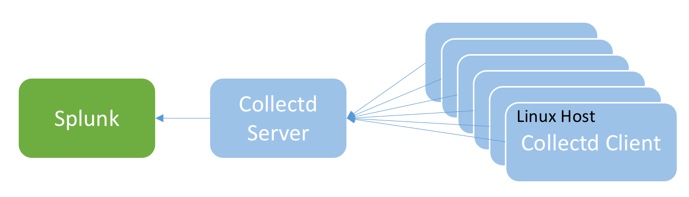

The Splunk Add-on for Linux depends on data sent from CollectD to the Splunk HTTP Event Collector (HEC) or a TCP input. CollectD is a daemon which includes a rich set of plugins for gathering system and application performance metrics. The following picture illustrates how CollectD gathers data from the Linux host (as CollectD client) and sends data to Splunk (as CollectD server).

You can customize your CollectD deployment based on your needs and environment. You can configure the CollectD client and CollectD server on the same Linux host, or you can configure several CollectD clients to send data to a single CollectD server.

Download and install CollectD

Prerequisites

Review the hardware and software requirements for the Splunk Add-on for Linux. See Hardware and software requirements.

Steps

- Go to https://collectd.org/download.shtml to download CollectD.

- Follow the instructions from https://collectd.org/wiki/index.php/First_steps to install CollectD.

Configure CollectD for Linux

You must configure CollectD to collect data and send the data to Splunk. The default location for collectd.conf is /etc/collectd.conf or /etc/collectd/collectd.conf.

See the CollectD manpage to learn more about collectd.conf.

Configure CollectD client to collect data from Linux

| Data Collected | Plugin in CollectD | Configuration Suggestion |
|---|---|---|
| CPU metrics | Plugin cpu | Enable the plugin by deleting the hash symbol (#) in front of the plugin. For example, change `#LoadPlugin cpu` to `LoadPlugin cpu`.<br>`<Plugin cpu>`<br>`# ReportByCpu true`<br>`# ReportByState true`<br>`ValuesPercentage true`<br>`</Plugin>` |
| Memory metrics | Plugin memory | `<Plugin memory>`<br>`ValuesAbsolute true`<br>`ValuesPercentage true`<br>`</Plugin>` |
| Swap metrics | Plugin swap | `<Plugin swap>`<br>`ReportByDevice true`<br>`# ReportBytes true`<br>`# ValuesAbsolute true`<br>`ValuesPercentage true`<br>`</Plugin>` |
| VMEM metrics | Plugin vmem | `<Plugin vmem>`<br>`Verbose false`<br>`</Plugin>` |
| Mountpoint usage/FS usage | Plugin df | `<Plugin df>`<br>`# Device "/dev/hda1"`<br>`# Device "192.168.0.2:/mnt/nfs"`<br>`# MountPoint "/home"`<br>`# FSType "ext3"`<br>`ReportByDevice true`<br>`# ReportInodes false`<br>`# ValuesAbsolute true`<br>`ValuesPercentage true`<br>`</Plugin>` |
| Network interface traffic | Plugin interface | None. Use the default configuration. |
| Disk utilization | Plugin disk | |
| System load | Plugin load | `<Plugin load>`<br>`ReportRelative true`<br>`</Plugin>` |
| Process information | Plugin processes | `<Plugin processes>`<br>`ProcessMatch "all" "(.*)"`<br>`</Plugin>` |
| Network protocols information | Plugin protocols | None. Use the default configuration. |
| IRQ metrics | Plugin irq | |
| TCP connections information | Plugin tcpconns | |
| Thermal information | Plugin thermal | |
| System uptime statistics | Plugin uptime | |
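
As a rough illustration of the enabling step described in the table, the plugin-loading section of collectd.conf might look like the following once the hash symbol is removed from each line. This is a sketch, not a complete configuration: which lines ship commented out in your stock collectd.conf is an assumption here and varies by distribution and CollectD version.

```conf
# Plugins listed in the table above, enabled by removing the leading "#".
# Assumption: your packaged collectd.conf already contains these lines.
LoadPlugin cpu
LoadPlugin memory
LoadPlugin swap
LoadPlugin vmem
LoadPlugin df
LoadPlugin interface
LoadPlugin disk
LoadPlugin load
LoadPlugin processes
LoadPlugin protocols
LoadPlugin irq
LoadPlugin tcpconns
LoadPlugin thermal
LoadPlugin uptime
```

Restart the CollectD service after editing collectd.conf so that the newly enabled plugins are loaded.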

Configure the CollectD client to send data to the CollectD server

If you configure the CollectD client and the CollectD server on the same machine, you can skip this step.

See Plugin network in the CollectD manpage for information on how to configure the network plugin. See Networking introduction on the CollectD Wiki for a detailed walkthrough.
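
For orientation, a minimal sketch of the network plugin on both sides might look like the following. The IP address 192.0.2.10 is a hypothetical placeholder for your CollectD server, and 25826 is the network plugin's default port. Treat this as a starting point under those assumptions rather than a recommended production setup; it does not configure signing or encryption.

```conf
# On each CollectD client: forward collected values to the CollectD server.
# 192.0.2.10 is a hypothetical server address; replace it with your own.
LoadPlugin network
<Plugin network>
  Server "192.0.2.10" "25826"
</Plugin>

# On the CollectD server: listen for values sent by the clients.
LoadPlugin network
<Plugin network>
  Listen "0.0.0.0" "25826"
</Plugin>
```

The two fragments belong in the collectd.conf files of different hosts. The server then forwards the received values to Splunk through one of the write plugins.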

Configure the CollectD server to send data to Splunk

You can use either Plugin write_http or Plugin write_graphite to submit values to Splunk. Plugin write_http sends data over HTTP and encodes the metrics as JSON. Plugin write_graphite sends data in Graphite format over TCP.

Configure plugin write_http

If you want to send Linux performance metrics data to Splunk in JSON format via HTTP, configure URL, Header and Format as follows:

| Field name | Description | Syntax | Example |
|---|---|---|---|
| URL | URL to which the values are submitted. The values for Splunk Server IP, Port Number, and Token Value come from your Splunk HTTP Event Collector (HEC) configuration. | `URL "https://<Splunk Server IP>:<Port Number>/services/collector/raw?channel=<Token Value>"` | `URL "https://10.66.104.127:8088/services/collector/raw?channel=693E90D4-91A5-49A3-99B1-CFE8828A0711"` |
| Header | An HTTP header to add to the request. | `Header "Authorization: Splunk <Token Value>"` | `Header "Authorization: Splunk 693E90D4-91A5-49A3-99B1-CFE8828A0711"` |
| Format | The data format. | `Format "JSON"` | `Format "JSON"` |

Example

    LoadPlugin write_http
    <Plugin write_http>
      <Node "node-http-1">
        URL "https://10.66.104.127:8088/services/collector/raw?channel=693E90D4-91A5-49A3-99B1-CFE8828A0711"
        Header "Authorization: Splunk 693E90D4-91A5-49A3-99B1-CFE8828A0711"
        Format "JSON"
        Metrics true
        StoreRates true
      </Node>
    </Plugin>

Configure plugin write_graphite

If you want to send Linux performance metrics data to Splunk in Graphite format, configure plugin write_graphite as follows:

- Set AlwaysAppendDS to true.
- Set SeparateInstances to false.
- Make sure the values for Host and Port are the same as the values you define for the TCP inputs. See Configure TCP inputs for the Splunk Add-on for Linux.

Do not use dots (.) in any part of the metric name, including the prefix, EscapeCharacter, hostname, and postfix. If the metric name contains dots, Splunk cannot recognize the key-value pairs in the data.

Example

    LoadPlugin write_graphite
    <Plugin write_graphite>
      <Node "node-graphite-1">
        Host "10.66.108.127"
        Port "2104"
        Protocol "tcp"
        EscapeCharacter "_"
        AlwaysAppendDS true
        SeparateInstances false
      </Node>
    </Plugin>

This documentation applies to the following versions of Splunk® Supported Add-ons: released
