Predict Numeric Fields Classic Assistant workflow

Classic Assistants enable machine learning through a guided user interface. The Predict Numeric Fields Classic Assistant uses regression algorithms to predict numeric values. Such models are useful for determining to what extent certain peripheral factors contribute to a particular metric result. After the Classic Assistant computes the regression model, you can use these peripheral values to make a prediction on the metric result.

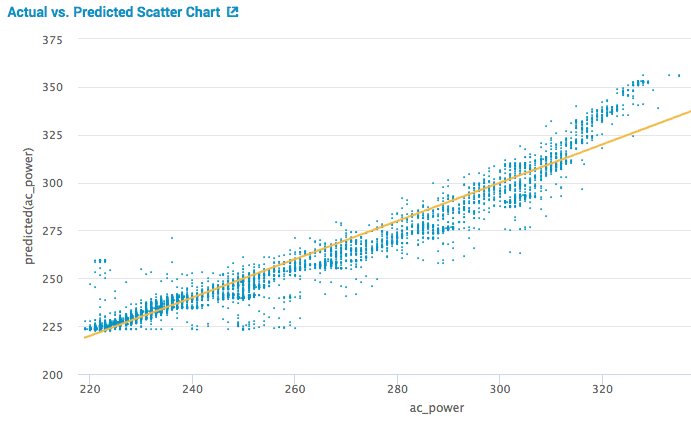

The following visualization illustrates a scatter plot of the actual versus predicted results. This visualization is from the Showcase example for Server Power Consumption.

Algorithms

The Predict Numeric Fields Classic Assistant uses regression algorithms to predict numeric values. For details on the supported algorithms, see Algorithms in the Machine Learning Toolkit.

Create a model to predict a numeric field

Before you begin

- The Predict Numeric Fields Assistant offers the option to preprocess your data. For more information on Assistant-based preprocessing algorithms, see Preprocessing machine data using Assistants.

- By default, the MLTK selects the Linear Regression algorithm. Use this default if you aren't sure which algorithm is best for you. For further details on any algorithm, see Algorithms in the Machine Learning Toolkit.

Workflow

Follow these steps for the Predict Numeric Fields Classic Assistant.

- From the MLTK navigation bar, select Classic > Assistants > Predict Numeric Fields.

- Run a search, and be sure to select a date range.

- (Optional) Click + Add a step to add preprocessing steps.

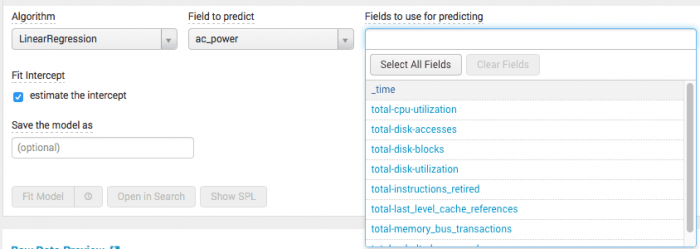

- Select an algorithm from the Algorithm drop-down menu.

- Select a target field from the Field to predict drop-down menu.

When you select Field to predict, the Fields to use for predicting drop-down populates with available fields to include in your model.

- Select a combination of fields from the Fields to use for predicting drop-down menu. In the server power showcase example, the drop-down menu lists all the fields that can be used to predict ac_power with the linear regression algorithm.

- Split your data into training and testing data. The default split is 50/50, and the data is divided randomly into two groups.

The algorithm selected determines the fields available to build your model. Hover over any field name to get more information about that field.

- Type the name of the model in the Save the model as field.

You must specify a name for the model in order to fit a model on a schedule or schedule an alert.

- Click Fit Model.
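As a conceptual illustration of the split-and-fit steps above, the following Python sketch shuffles rows into two equal halves, fits a one-variable least-squares line on the training half, and predicts on the testing half. It is not the MLTK implementation. The function names, the `cpu_utilization` field, and the data values are assumptions made up for the example; only `ac_power` comes from the showcase.

```python
import random


def split_rows(rows, train_fraction=0.5, seed=0):
    """Shuffle rows and split them into (training, testing) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


def fit_line(rows):
    """Least-squares fit of y = slope * x + intercept for (x, y) pairs."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in rows)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical data: (cpu_utilization, ac_power) pairs that lie on an exact line.
rows = [(float(x), 2.0 * x + 50.0) for x in range(20)]

train, test = split_rows(rows)           # random 50/50 split
slope, intercept = fit_line(train)       # fit on the training half only
# (actual, predicted) pairs for the held-out testing half
predictions = [(y, slope * x + intercept) for x, y in test]
```

Because the testing half is never used for fitting, comparing actual and predicted values on it gives an honest view of how the model behaves on data it has not seen.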

Interpret and validate results

After you fit the model, review the prediction results and visualizations to see how well the model predicted the numeric field. You can use the following methods to evaluate your predictions:

| Charts and Results | Applications |
|---|---|
| Actual vs. Predicted Scatter Chart | This visualization plots the predicted values against the actual values for the predicted field. The closer the points are to the line, the better the model. Hover over the blue dots to see the actual values. |
| Residuals Histogram | This visualization shows the distribution of the differences between the actual values and the predicted values. Hover over the blue bars to see the residual error and the sample count for each bar. For a well-fitting model, the residuals typically form a bell curve clustered tightly around zero. |
| R² Statistic | This statistic shows how well the model explains the variability of the result. 100% (a value of 1) means the model fits perfectly. The closer the value is to 1, the better the result. |
| Root Mean Squared Error | This statistic shows the variability of the result, which is approximately the standard deviation of the residuals. The formula takes the difference between each actual and predicted value, squares it, averages the squares, and then takes the square root. This value can be arbitrarily large and indicates how far the predictions are from the actual values on average. These values only make sense within one dataset and shouldn't be compared across datasets. |
| Fit Model Parameters Summary | This summary displays the coefficients associated with each variable in the regression model. A relatively high coefficient value shows a high association of that variable with the result. A negative value shows a negative correlation. |
| Actual vs. Predicted Overlay | This overlay shows the actual values against the predicted values in sequence. |
| Residuals | The residuals show the difference between predicted and actual values in sequence. |
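To make the R² and Root Mean Squared Error descriptions concrete, the following Python sketch computes both from a short list of actual and predicted values. It uses the standard textbook definitions and is not taken from the MLTK source. The function names and sample numbers are illustrative assumptions.

```python
import math


def residuals(actual, predicted):
    """Difference between each actual value and its prediction."""
    return [a - p for a, p in zip(actual, predicted)]


def rmse(actual, predicted):
    """Square the residuals, average them, then take the square root."""
    res = residuals(actual, predicted)
    return math.sqrt(sum(r * r for r in res) / len(res))


def r_squared(actual, predicted):
    """1 minus (residual sum of squares / total sum of squares)."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum(r * r for r in residuals(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


# Hypothetical values for the predicted field.
actual = [100.0, 120.0, 140.0, 160.0]
predicted = [102.0, 118.0, 141.0, 159.0]

print(residuals(actual, predicted))            # [-2.0, 2.0, -1.0, 1.0]
print(round(rmse(actual, predicted), 2))       # 1.58
print(round(r_squared(actual, predicted), 3))  # 0.995
```

In this example the squared residuals sum to 10 against a total sum of squares of 2000, so R² is 0.995. The RMSE of about 1.58 is in the same units as the predicted field, which is why it is only meaningful within one dataset.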

Refine the model

After you validate the model, refine the model and run the fit command again.

Consider trying the following:

- Reduce the number of fields selected in the Fields to use for predicting drop-down menu. Having too many fields can distract the model from the fields that matter.

- Bring in new data sources to enrich your modeling space.

- Build features on the raw data using SPL commands such as streamstats and eventstats, so that you model behaviors of the data instead of raw data points.

- Check your fields. Are you using categorical values correctly? For example, are you using DayOfWeek as a number (0 to 6) instead of "Monday", "Tuesday", and so on? Make sure you have the right type of value.

- Bring in context via lookups, such as holidays and external anomalies.

- Increase the number of fields (from additional data, feature building as above, and so on) selected in the Fields to use for predicting drop-down menu.
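Two of the suggestions above, treating DayOfWeek as a category and building behavioral features, can be sketched in Python as follows. This is an illustration of the ideas only, not SPL and not how the MLTK handles fields internally. The helper names `one_hot_day` and `trailing_mean` are hypothetical, and the trailing mean is only roughly comparable to a windowed average computed with streamstats.

```python
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]


def one_hot_day(day_name):
    """Encode a day name as independent 0/1 fields instead of one number.

    A single 0-to-6 number implies that Sunday is "six times" Monday,
    which a regression would treat as a real numeric relationship.
    """
    return {f"is_{day}": int(day == day_name) for day in DAYS}


def trailing_mean(values, window):
    """Mean of each value and up to window - 1 values before it."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


print(one_hot_day("Tuesday")["is_Tuesday"])   # 1
print(one_hot_day("Tuesday")["is_Monday"])    # 0
print(trailing_mean([10, 20, 30, 40], 2))     # [10.0, 15.0, 25.0, 35.0]
```

The trailing mean turns a raw series into a smoothed "recent behavior" feature, which is the kind of derived field the suggestion about modeling behaviors refers to.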

Deploy the model

After you validate and refine the model, you can deploy it.

Within the Classic Assistant framework

- Click the Schedule Training button to the right of Fit Model to schedule model training.

This opens a modal window with fields to fill out, including Report title, time range, and trigger actions. You can set up a regular interval to fit the model.

Outside the Classic Assistant framework

- Click Open in Search to generate a New Search tab for this same dataset. This new search opens in a new browser tab, away from the Classic Assistant.

This search query uses all data, not just the training set. You can adjust the SPL directly and see results immediately. You can also save the query as a Report, Dashboard Panel, or Alert.

- Click Show SPL to open a modal window showing the search query you used to fit the model. Copy the SPL to use in other aspects of your Splunk instance.

- Click Schedule Alert to set up a triggered alert when the predicted value meets a threshold you specify.

Once you navigate away from the Classic Assistant page, you cannot return to it through the Classic or Models tabs. Classic Assistants are great for generating SPL, but may not be ideal for longer-term projects.

For more information about alerts, see Getting started with alerts in the Splunk Enterprise Alerting Manual.


This documentation applies to the following versions of Splunk® Machine Learning Toolkit: 4.4.0, 4.4.1, 4.4.2, 4.5.0, 5.0.0, 5.1.0, 5.2.0, 5.2.1, 5.2.2, 5.3.0, 5.3.1
