How workload management works

This page provides an overview of workload management concepts and features.

To use workload management, you create groups of CPU and memory resources called workload pools. You then create workload rules that automatically place your searches in specific workload pools. You can also create workload rules to monitor search runtime and perform automated remediation actions.

For example, you can create a workload rule that places searches from the security team in a high-priority workload pool, and create another rule to move those searches to a standard pool if the search runtime exceeds 2 minutes.

Workload rules are evaluated on the search head, and searches are placed in pools based on the defined workload rules. Searches run in the same search pool on the search head and on all indexers. If a search pool exists on a search head but does not exist on an indexer, the search runs in the default search pool on the indexer.
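The fallback behavior on indexers can be pictured with a minimal sketch. The function and pool names below are hypothetical and only model the rule described above; they are not part of any Splunk API.

```python
def resolve_indexer_pool(requested_pool, indexer_pools, default_pool):
    """Return the pool a search runs in on an indexer.

    requested_pool: pool chosen by workload rules on the search head.
    indexer_pools:  set of search pool names defined on the indexer.
    default_pool:   the indexer's default search pool.
    """
    if requested_pool in indexer_pools:
        # Same pool exists on the indexer, so the search stays in it.
        return requested_pool
    # Pool is missing on the indexer, so fall back to the default search pool.
    return default_pool


# Hypothetical pool names, for illustration only.
indexer_pools = {"standard_pool", "high_priority_pool"}
print(resolve_indexer_pool("high_priority_pool", indexer_pools, "standard_pool"))
print(resolve_indexer_pool("security_pool", indexer_pools, "standard_pool"))
```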

You can monitor your workload management configuration, and track CPU and memory usage on a per-pool basis, using the workload management dashboards in the Monitoring Console. For more information, see Monitor workload management.

Resource allocation in workload management

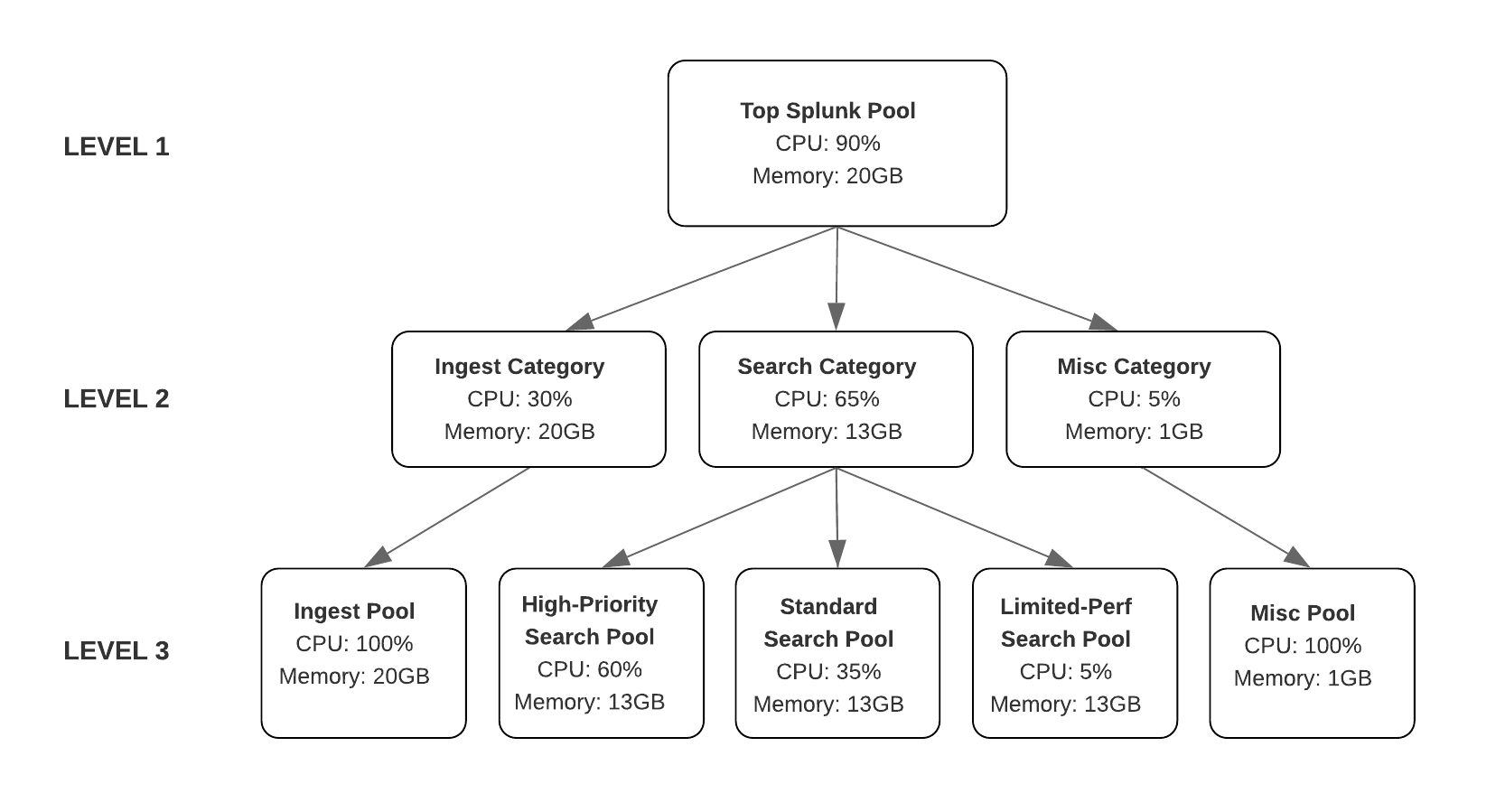

CPU and memory resources are allocated in workload management in a resource pool hierarchy that includes 3 levels:

| Level | Description |

|---|---|

| Level 1: Top Splunk pool | The total amount of system CPU and memory resources allocated to workload management in Splunk Enterprise is based on the cpu and memory resource allocation that you define in Linux cgroups. See cgroups. |

| Level 2: Workload categories | The amount of CPU and memory resources allocated to separate workload categories (search, ingest, and misc). See workload categories. |

| Level 3: Workload pools | The amount of CPU and memory resources allocated to individual workload pools under their respective categories. See workload pools. |

The following diagram illustrates the workload management resource pool hierarchy:

CPU resource allocation

Workload management allocates CPU resources to workload categories based on the CPU weight. The CPU weight determines the relative resource allocation as a percentage of the total CPU weight assigned to all workloads.

For example, if there are three workload pools, pool_A, pool_B, and pool_C, with weights of 10, 20, and 70, these pools receive 10%, 20%, and 70% of the allocation, respectively. If you increase the CPU weight for pool_A to 30 without changing the CPU weights for pool_B and pool_C, the relative resource allocation for pool_A increases from 10% to 30 / (30+20+70) = 25%.
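The weight arithmetic in this example can be reproduced with a short sketch. The `cpu_shares` helper is hypothetical and only illustrates the ratio calculation; it does not read or write any Splunk configuration.

```python
def cpu_shares(weights):
    """Convert CPU weights into percentage shares of the total weight."""
    total = sum(weights.values())
    return {pool: 100 * weight / total for pool, weight in weights.items()}


# Original weights from the example: 10, 20, and 70.
before = cpu_shares({"pool_A": 10, "pool_B": 20, "pool_C": 70})
print(before["pool_A"])  # 10.0

# Raise pool_A to 30 and leave the other weights unchanged.
after = cpu_shares({"pool_A": 30, "pool_B": 20, "pool_C": 70})
print(after["pool_A"])  # 25.0
```

Because the shares are relative, raising the weight of pool_A also lowers the shares of pool_B and pool_C, even though their weights did not change.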

CPU is a compressible resource: workload management can throttle the CPU share of a workload pool based on its assigned weight, and the share can be updated dynamically. If you increase a workload pool's weight, the pool receives a larger CPU share. If you decrease the weight, the pool is throttled to a smaller CPU share.

The CPU allocation of an individual workload pool is a soft limit. If a pool needs more than its allocated CPU percentage, it can borrow CPU resources from other pools in the category, provided that those pools have unused CPU resources.

Memory resource allocation

The amount of memory allocated to a workload pool is defined as the maximum percentage of total available memory across all workload pools. When you allocate memory to an individual workload pool, workload management calculates the amount of memory available to the pool as an absolute percentage of the total memory allocated to the category.

Memory is an incompressible resource: it cannot be throttled for a workload pool without causing a failure, so the resource share cannot be updated dynamically. A workload pool can use memory only up to the limit for which it has been configured. When a workload pool exceeds its memory limit, the Linux OS kills the search process based on the OOM score.

If an individual workload pool exceeds its allocated memory limit, it cannot borrow memory from other workload pools, even if the other workload pools are not fully utilizing the allocated memory.
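As a rough illustration of the hard memory limit, the following sketch computes a pool's limit as a percentage of its category's memory and checks usage against it. The function names and the numbers are assumptions made for this example, not Splunk settings.

```python
def pool_memory_limit_mb(category_memory_mb, pool_memory_percent):
    """Memory available to a pool, as a percentage of its category's memory."""
    return category_memory_mb * pool_memory_percent / 100


def exceeds_memory_limit(usage_mb, limit_mb):
    """A pool over its limit cannot borrow memory from other pools."""
    return usage_mb > limit_mb


# Assumed values: the search category has 16000 MB, and the pool is set to 25%.
limit = pool_memory_limit_mb(16000, 25)
print(limit)  # 4000.0

# Usage above the limit is a violation even if other pools are idle.
print(exceeds_memory_limit(4500, limit))  # True
```

Unlike the CPU case, there is no borrowing step in this model: once usage passes the limit, the only outcome is that a process in the pool becomes a candidate for termination.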

Search and ingest process isolation

Workload management provides separate search and ingest categories to ensure resource isolation between search processes and ingest processes. Search and ingest process isolation makes it possible to allocate resources to any number of search pools without affecting the resource allocation to the ingest category. See workload categories.

Concepts

The following concepts are useful to understand before you configure and use workload management.

workload categories

There are three predefined workload categories that run predefined workloads. You can change the default resource allocation of each category.

- Search: All searches, including scheduled searches, ad hoc searches, accelerated reports, accelerated data models, and summary indexing run in this category. You can create multiple workload pools under this category.

- Ingest: Indexing and Splunk core processes, including splunkd, process runner, KV store, app server, and introspection run in this category.

- Misc: Scripted inputs and modular inputs run in this category.

For detailed information on how to allocate CPU and memory resources to workload categories, see Configure workload categories.

workload pools

A workload pool is a specified amount of CPU and memory resources that you can define and allocate to workloads in Splunk Enterprise. Each workload pool belongs to a workload category and contains a subset of the total CPU and memory available in its parent category. You must define a workload pool for each category. You can define more than one pool for the Search category.

For detailed information on how to create and use workload pools, see Create workload pools.

workload rules

A workload rule contains a user-defined condition based on a set of predicates. For example, role=security AND search_type=adhoc. A rule is triggered when the user-defined condition is met. Workload rules can automatically place a search in a workload pool or monitor and perform remediation actions on running searches.
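The following sketch shows one way to think about rule evaluation. It models a condition as a set of predicates joined by AND and assumes that the first matching rule wins; the class, the field names, the pool names, and the evaluation order are all illustrative assumptions rather than the product's implementation.

```python
from dataclasses import dataclass


@dataclass
class WorkloadRule:
    name: str
    predicates: dict  # every key/value pair must match (logical AND)
    pool: str         # pool to place the search in when the rule triggers


def matches(rule, search):
    """True when the search satisfies every predicate in the rule."""
    return all(search.get(key) == value for key, value in rule.predicates.items())


def place_search(search, rules, default_pool):
    """Return the pool of the first matching rule (assumed ordering)."""
    for rule in rules:
        if matches(rule, search):
            return rule.pool
    return default_pool


def monitor(current_pool, runtime_s, threshold_s, fallback_pool):
    """Remediation sketch: move a long-running search to another pool."""
    return fallback_pool if runtime_s > threshold_s else current_pool


rules = [WorkloadRule("security_adhoc",
                      {"role": "security", "search_type": "adhoc"},
                      "high_priority_pool")]
search = {"role": "security", "search_type": "adhoc"}
pool = place_search(search, rules, "standard_pool")
print(pool)                                       # high_priority_pool
print(monitor(pool, 150, 120, "standard_pool"))   # standard_pool
```

The `monitor` call mirrors the earlier example in which a search moves to a standard pool after its runtime exceeds 2 minutes (120 seconds).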

For detailed information on how to define and use workload rules, see Configure workload rules.

admission rules

Admission rules filter out searches automatically based on a condition that you define. If a search meets the specified condition, the search is not executed. Admission rules can prevent the execution of poorly written searches, such as those using wildcards or the "all time" time range, which can consume an excessive amount of resources and interfere with search workloads.
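Conceptually, an admission rule acts as a gate in front of search execution. The sketch below models each rule as a function that returns True when a search should be filtered out; the field names `time_range` and `index` are hypothetical and stand in for whatever condition you define.

```python
def is_admitted(search, admission_rules):
    """A search runs only if no admission rule matches it."""
    return not any(rule(search) for rule in admission_rules)


def uses_all_time(search):
    """Matches searches that use the "all time" time range."""
    return search.get("time_range") == "all time"


def uses_wildcard_index(search):
    """Matches searches that use a wildcard for the index."""
    return search.get("index") == "*"


admission_rules = [uses_all_time, uses_wildcard_index]

print(is_admitted({"index": "web", "time_range": "last 24 hours"}, admission_rules))  # True
print(is_admitted({"index": "web", "time_range": "all time"}, admission_rules))       # False
```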

For instructions on how to create admission rules, see Configure admission rules to prefilter searches.

cgroups

cgroups (control groups) is a Linux kernel feature that lets you allocate a specified amount of system resources to a group of processes. Workload pools in Splunk Enterprise are an abstraction of the underlying functionality of Linux cgroups.

Before you can configure workload management in Splunk Enterprise, you must set up cgroups on your Linux operating system. For more information, see Set up Linux for workload management.

Workload management supports Linux cgroups v1 only. If your Linux system has been upgraded to a version that runs cgroups v2 by default, you must revert your system to cgroups v1 to use workload management in Splunk Enterprise.
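One quick way to see which cgroups version a host uses is to inspect the cgroup mount point. The sketch below is a heuristic, not a Splunk tool: it assumes the conventional mount point `/sys/fs/cgroup`, treats the presence of a `cgroup.controllers` file at the root as a sign of the cgroups v2 unified hierarchy, and treats per-controller directories such as `cpu` or `memory` as a sign of cgroups v1.

```python
from pathlib import Path


def detect_cgroup_version(cgroup_root="/sys/fs/cgroup"):
    """Heuristically detect the cgroups version mounted at cgroup_root.

    Returns 2 for the v2 unified hierarchy, 1 for v1, or None if unknown.
    """
    root = Path(cgroup_root)
    # The v2 unified hierarchy exposes cgroup.controllers at its root.
    if (root / "cgroup.controllers").is_file():
        return 2
    # v1 mounts each controller in its own directory under the root.
    if (root / "cpu").exists() or (root / "memory").exists():
        return 1
    return None


if __name__ == "__main__":
    version = detect_cgroup_version()
    if version is None:
        print("Could not determine the cgroups version")
    else:
        print(f"cgroups v{version} detected")
```

Non-standard mount points or hybrid setups can make this check inconclusive, so confirm the result against your distribution's documentation.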

systemd

systemd is a system startup and service manager for Linux operating systems that organizes processes under cgroups. systemd uses instructions for a daemon specified in a unit configuration file. You can configure this unit file to run splunkd as a systemd service.

For information on how to configure systemd for workload management, see Configure Linux systemd for workload management.


This documentation applies to the following versions of Splunk® Enterprise: 8.1.0, 8.1.1, 8.1.2, 8.1.3, 8.1.4, 8.1.5, 8.1.6, 8.1.7, 8.1.8, 8.1.9, 8.1.10, 8.1.11, 8.1.12, 8.1.13, 8.1.14, 8.2.0, 8.2.1, 8.2.2, 8.2.3, 8.2.4, 8.2.5, 8.2.6, 8.2.7, 8.2.8, 8.2.9, 8.2.10, 8.2.11, 8.2.12, 9.0.0, 9.0.1, 9.0.2, 9.0.3, 9.0.4, 9.0.5, 9.0.6, 9.0.7, 9.0.8, 9.0.9, 9.0.10, 9.1.0, 9.1.1, 9.1.2, 9.1.3, 9.1.4, 9.1.5, 9.1.6, 9.1.7, 9.1.8, 9.1.9, 9.2.0, 9.2.1, 9.2.2, 9.2.3, 9.2.4, 9.2.5, 9.2.6, 9.3.0, 9.3.1, 9.3.2, 9.3.3, 9.3.4, 9.4.0, 9.4.1, 9.4.2
