Cluster Numeric Events Experiment Assistant workflow

The Cluster Numeric Events Assistant partitions events with multiple numeric fields into groups of events based on the values of those fields.

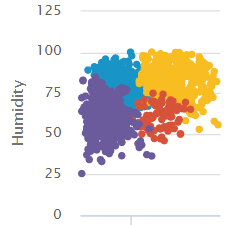

The following visualization illustrates a clustering of humidity data results. This visualization is from the showcase example for Power Plant Operating Regimes.

Available algorithms

The Cluster Numeric Events Experiment Assistant uses the following algorithms:

- Birch

- DBSCAN

- K-means

- Spectral Clustering

Create an Experiment to cluster numeric events

The Cluster Numeric Events Assistant partitions events with multiple numeric fields into groups of events based on the values of those fields. Because the groupings aren't known in advance, the learning is unsupervised.
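To make "partitioning events based on the values of their fields" concrete, here is a minimal from-scratch K-means sketch in Python. It is an illustration only, not MLTK's implementation. It assumes each event has already been reduced to a tuple of numeric field values, uses Euclidean distance, and seeds the centroids with the first `k` events so the result is deterministic. The function name `kmeans` and the sample events are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def _closest(point: Sequence[float], centroids: List[Point]) -> int:
    """Return the index of the centroid nearest to point (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(point, centroids[i]))


def kmeans(events: List[Point], k: int, max_iter: int = 100) -> Tuple[List[int], List[Point]]:
    """Hypothetical minimal K-means: returns (cluster label per event, centroids).

    Centroids are seeded with the first k events for determinism; production
    implementations typically use smarter seeding such as k-means++.
    """
    if not 0 < k <= len(events):
        raise ValueError("k must be between 1 and the number of events")
    centroids: List[Point] = [tuple(e) for e in events[:k]]
    labels: List[int] = []
    for _ in range(max_iter):
        # Assignment step: each event joins the cluster of its nearest centroid.
        new_labels = [_closest(e, centroids) for e in events]
        if new_labels == labels:
            break  # assignments stopped changing, so the clustering has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its member events.
        for c in range(k):
            members = [e for e, lab in zip(events, labels) if lab == c]
            if members:  # keep the previous centroid if a cluster is empty
                centroids[c] = tuple(sum(vals) / len(members) for vals in zip(*members))
    return labels, centroids


# Hypothetical events with two numeric fields, e.g. (temperature, humidity).
events = [(20.0, 30.0), (21.0, 31.0), (80.0, 90.0), (79.0, 88.0), (20.5, 29.5)]
labels, centroids = kmeans(events, k=2)
print(labels)  # → [0, 0, 1, 1, 0]
```

The number of clusters `k` is supplied up front, which mirrors how the Assistant asks you to specify the number of clusters for K-means.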

To cluster numeric events, input your data, optionally perform preprocessing, and then select the clustering algorithm and any other parameters it requires.

Before you begin

- The Cluster Numeric Events Assistant offers the option to preprocess your data. For more information on Assistant-based preprocessing algorithms, see Preprocessing machine data using Assistants.

- By default, MLTK selects the K-means algorithm. Use this default algorithm if you aren't sure which algorithm is best for you. For further details on any algorithm, see Algorithms in the Machine Learning Toolkit.
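Distance-based clustering algorithms such as K-means compare raw field values, so a field measured on a large scale can dominate the distance calculation. Standardizing each field to zero mean and unit variance is one common preprocessing step that addresses this. The sketch below shows the idea in plain Python; it is an illustration under that assumption, not the Assistant's preprocessing code, and `standardize` and the sample rows are hypothetical.

```python
import statistics
from typing import List, Sequence


def standardize(rows: List[Sequence[float]]) -> List[List[float]]:
    """Hypothetical helper: rescale each column to zero mean and unit (population) variance."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # pstdev is the population standard deviation; a constant column gets 1.0
    # so that the division below never divides by zero.
    stdevs = [statistics.pstdev(col) or 1.0 for col in columns]
    return [[(v - m) / s for v, m, s in zip(row, means, stdevs)] for row in rows]


# Hypothetical events: (power output in MW, humidity in %).
rows = [(400.0, 60.0), (450.0, 70.0), (500.0, 80.0)]
scaled = standardize(rows)
print(scaled)
```

After scaling, both fields span the same range (about -1.22 to 1.22 here), so neither one outweighs the other when distances are computed.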

Assistant workflow

Follow these steps to create a Cluster Numeric Events Experiment.

- From the MLTK navigation bar, click Experiments.

- If this is your first Experiment in MLTK, a screen displaying all six Assistants appears. Select the Cluster Numeric Events block.

- If you have at least one Experiment in MLTK, a list of your Experiments appears. Click the Create New Experiment button.

- Enter an Experiment title and add a description. You can edit both the title and the description later if needed.

- Click Create.

- Run a search, and be sure to select a date range.

- (Optional) Click + Add a step to add preprocessing steps.

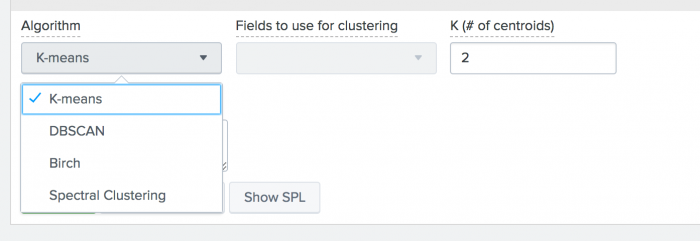

- Select an algorithm from the Algorithm drop-down menu. K-means is selected by default, but another algorithm might better fit your Experiment.

- Specify the Fields to use for clustering. Use the following table to guide your next steps:

| If | Then |
| --- | --- |
| Data has been preprocessed | Choose from the preprocessed fields. |
| Algorithm is K-means, Birch, or Spectral Clustering | Specify the number of clusters to use. |
| Algorithm is DBSCAN | Specify a value between 0 and 1 for eps (the size of the neighborhood). Smaller values result in more clusters. |

- (Optional) Add notes to this Experiment. Use this free-form text block to track the selections you make in the Experiment parameter fields, and refer back to your notes to review which parameter combinations yield the best results.

- Click Cluster. The Experiment is now in a Draft state.

Draft versions let you alter settings without committing changes to, or overwriting, a saved Experiment. An Experiment is not stored in Splunk until you save it.

The following table explains the differences between a draft and a saved Experiment:

| Action | Draft Experiment | Saved Experiment |
| --- | --- | --- |
| Create new record in Experiment history | Yes | No |
| Run Experiment search jobs | Yes | No |
| (As applicable) Save and update Experiment model | No | Yes |
| (As applicable) Update all Experiment alerts | No | Yes |
| (As applicable) Update Experiment scheduled trainings | No | Yes |

You cannot save a model if you use the DBSCAN or Spectral Clustering algorithm.
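The DBSCAN row in the field table above describes eps as the size of the neighborhood. The following from-scratch Python sketch shows how that radius drives cluster formation. It is not MLTK's implementation, and `dbscan`, `NOISE`, and `min_samples` are assumptions for illustration: `min_samples` is DBSCAN's density threshold, which this section does not mention. In this toy data, shrinking eps splits one cluster into two, consistent with the guidance above; in general, a smaller eps can also label more points as noise.

```python
import math
from typing import List, Optional, Sequence

NOISE = -1  # label for points that belong to no cluster


def dbscan(points: List[Sequence[float]], eps: float, min_samples: int = 2) -> List[int]:
    """Hypothetical minimal DBSCAN returning one cluster label per point.

    A point is a core point if at least min_samples points (itself included)
    lie within distance eps of it; clusters grow outward from core points.
    """
    labels: List[Optional[int]] = [None] * len(points)

    def neighbors(i: int) -> List[int]:
        return [j for j in range(len(points)) if math.dist(points[i], points[j]) <= eps]

    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = NOISE  # may later be relabeled as a border point
            continue
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: reachable, but not a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_samples:
                queue.extend(j_neighbors)  # j is a core point, so keep expanding
        cluster += 1
    return [NOISE if lab is None else lab for lab in labels]


# Hypothetical one-field events: two tight groups plus one distant outlier.
points = [(0.0,), (0.1,), (0.2,), (0.5,), (0.6,), (0.7,), (5.0,)]
print(dbscan(points, eps=0.15))  # → [0, 0, 0, 1, 1, 1, -1]
print(dbscan(points, eps=0.35))  # → [0, 0, 0, 0, 0, 0, -1]
```

Unlike K-means, DBSCAN does not take a number of clusters; the count falls out of eps and the data density.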

Interpret and validate results

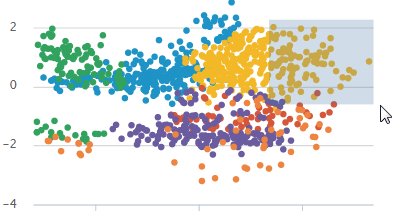

After the numeric events are clustered, review the cluster visualization. The fields included in the visualization are listed on screen. You can add or remove fields, and then click Visualize to update the visualization.

You can drag a selection rectangle around some of the points in a plot to see the corresponding points on the other plots.

The visualization displays a maximum of 1000 points, 20 series and 6 fields (1 label and 5 variables).
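If you want to check ahead of time whether a result set fits within these display limits, a small pre-check like the following can help. This is a hypothetical Python sketch, not part of MLTK: it assumes events are dictionaries, and it assumes each distinct value of the label field (for example, the cluster number) forms one series. How MLTK itself selects points when a result set exceeds the limits is not described here.

```python
from typing import Dict, List, Sequence

# Limits stated in this section for the cluster visualization.
MAX_POINTS = 1000
MAX_SERIES = 20
MAX_VARIABLES = 5  # 6 fields in total: 1 label plus 5 variables


def visualization_limit_problems(
    events: Sequence[Dict[str, object]], label_field: str, variable_fields: Sequence[str]
) -> List[str]:
    """Hypothetical pre-check: list which stated limits a result set would exceed."""
    problems: List[str] = []
    if len(events) > MAX_POINTS:
        problems.append(f"{len(events)} points exceeds {MAX_POINTS}")
    # Assumption: one series per distinct label value.
    series = {e.get(label_field) for e in events}
    if len(series) > MAX_SERIES:
        problems.append(f"{len(series)} series exceeds {MAX_SERIES}")
    if len(variable_fields) > MAX_VARIABLES:
        problems.append(f"{len(variable_fields)} variables exceeds {MAX_VARIABLES}")
    return problems


# Hypothetical result set: 1200 events spread across 25 cluster labels.
events = [{"cluster": i % 25, "x": float(i), "y": float(i) / 2} for i in range(1200)]
print(visualization_limit_problems(events, "cluster", ["x", "y"]))
# → ['1200 points exceeds 1000', '25 series exceeds 20']
```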

Save the Experiment

When your Experiment produces valuable results, save it. Saving your Experiment results in the following actions:

- Assistant settings are saved as an Experiment knowledge object.

- The Draft version is saved to the Experiment Listings page.

- Any affiliated scheduled trainings and alerts update to synchronize with the search SPL and trigger conditions.

You can load a saved Experiment by clicking the Experiment name.

Deploy the Experiment

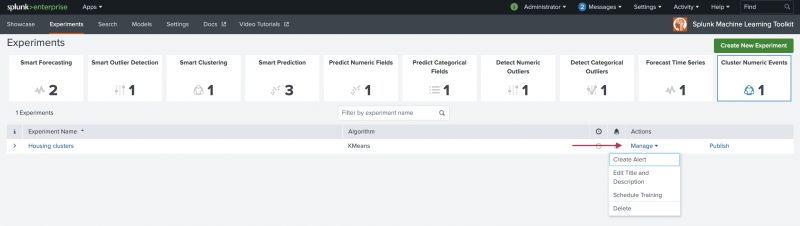

Saved Cluster Numeric Events Experiments include options to manage and publish them.

The options to create alerts or to schedule training are not available if you use the DBSCAN or Spectral Clustering algorithms.

Within the Experiment framework

From within the framework, you can both manage and publish your Experiments. To manage your Experiment, perform the following steps:

- From the MLTK navigation bar, choose Experiments. A list of your saved Experiments populates.

- Click the Manage button available under the Actions column.

The toolkit supports the following Experiment management options:

- Create and manage Experiment-level alerts. Choose from both standard Splunk platform trigger conditions and Machine Learning Conditions related to the Experiment.

- Edit the title and description of the Experiment.

- Schedule a training job for an Experiment.

- Delete an Experiment.

Updating a saved Experiment can affect affiliated alerts. Re-validate your alerts once you complete the changes. For more information about alerts, see Getting started with alerts in the Splunk Enterprise Alerting Manual.

You can publish your Experiment through the following steps:

- From the MLTK navigation bar, choose Experiments. A list of your saved Experiments populates.

- Click the Publish button available under the Actions column.

When you publish an Experiment model, the main model and any associated preprocessing models are copied as lookup files into the user's namespace within the selected destination app. Published models can be used to create alerts or schedule model trainings.

The Publish link appears only if you created the Experiment using the K-means or Birch algorithm and ran the Cluster action.

- Give the model a title. The title must start with a letter or underscore and can contain only letters, numbers, and underscores.

- Select the destination app.

- Click Save.

- A message indicates whether the model was published or, if it was not, why the action was not completed.
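The model-title rule in the steps above can be expressed as a regular expression. The following Python sketch is a hypothetical pre-check you could run before publishing; it is not MLTK's own validation code, and it assumes "letters" means ASCII letters. It uses explicit character classes rather than `\w`, because `\w` in Python also matches non-ASCII letters and digits.

```python
import re

# Rule stated for published model titles: start with a letter or underscore,
# followed only by letters, numbers, and underscores (ASCII assumed).
MODEL_TITLE = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def is_valid_model_title(title: str) -> bool:
    """Hypothetical helper: True if title satisfies the naming rule."""
    # fullmatch requires the whole string to match, so no anchors are needed.
    return MODEL_TITLE.fullmatch(title) is not None


for candidate in ["humidity_clusters", "_kmeans2", "2clusters", "power-plant"]:
    print(candidate, is_valid_model_title(candidate))
# → humidity_clusters True
# → _kmeans2 True
# → 2clusters False
# → power-plant False
```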

Experiments are always stored under the user's namespace, so changing sharing settings and permissions on Experiments is not supported.

Outside the Experiment framework

- Click Open in Search to generate a New Search tab for this same dataset. This new search opens in a new browser tab, away from the Assistant.

This search query uses all data, not just the training set. You can adjust the SPL directly and see results immediately. You can also save the query as a Report, Dashboard Panel, or Alert.

- Click Show SPL to open a modal window showing the search query you used to fit the model. Copy the SPL to use in other parts of your Splunk instance.

Learn more

To learn about implementing analytics and data science projects using Splunk's statistics, machine learning, and built-in custom visualization capabilities, see the Splunk Education course Splunk 8.0 for Analytics and Data Science.


This documentation applies to the following versions of Splunk® Machine Learning Toolkit: 5.3.3, 5.4.0, 5.4.1, 5.4.2, 5.5.0
