How data gets from the Splunk platform to Splunk UBA

Data is ingested into Splunk UBA from the Splunk platform in the following ways:

- Splunk UBA performs time-based searches against the Splunk platform to pull data in to Splunk UBA. See Time-based search.

- Splunk UBA performs real-time indexed queries against the Splunk platform to pull data in to Splunk UBA. See Real-time search.

- The Splunk platform pushes data to Splunk UBA using Kafka ingestion. See Direct to Kafka.

Time-based search

Splunk UBA performs micro-batched queries in 1-minute intervals against the Splunk platform to pull in events. This is the default method for getting data into Splunk UBA.

Using time-based search enables Splunk UBA to provide monitoring services for the status of your data ingestion. To monitor the status of your data ingestion:

- See the Splunk Data Source Lag indicator in View modules health.

- See the error messages and descriptions in Data Sources (DS).

- Monitor your Splunk UBA instance directly from Splunk Enterprise with the Splunk UBA Monitoring app. See About the Splunk UBA Monitoring app.

To configure the properties of the queries:

- In the /etc/caspida/local/conf/uba-site.properties file, add or edit the properties in the following table.

- Run the following command to synchronize the cluster in distributed deployments:

/opt/caspida/bin/Caspida sync-cluster /etc/caspida/local/conf

- Run the following commands to stop and restart Caspida:

/opt/caspida/bin/Caspida stop
/opt/caspida/bin/Caspida start
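The three steps above can be scripted. The following is a minimal Bash sketch, not an official Splunk UBA tool. `set_uba_property`, `run`, `apply_changes`, and the `UBA_SITE` and `DRY_RUN` variables are hypothetical names. The sketch assumes the properties file uses plain `key=value` lines, and by default it only prints the Caspida commands instead of running them.

```bash
#!/usr/bin/env bash
# Hypothetical helper script (not part of Splunk UBA): edit uba-site.properties,
# then sync the cluster and restart Caspida. Assumes plain key=value lines.
set -euo pipefail

UBA_SITE="${UBA_SITE:-/etc/caspida/local/conf/uba-site.properties}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the Caspida commands; 0 = run them

# Replace the line for KEY if it is present, otherwise append KEY=VALUE.
set_uba_property() {
  local file="$1" key="$2" value="$3" tmp
  touch "$file"
  tmp="$(mktemp)"
  awk -v k="$key" -v v="$value" '
    index($0, k "=") == 1 { print k "=" v; found = 1; next }
    { print }
    END { if (!found) print k "=" v }
  ' "$file" > "$tmp"
  cat "$tmp" > "$file"   # rewrite in place to keep the file's owner and mode
  rm -f "$tmp"
}

# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "would run: $*"
  else
    "$@"
  fi
}

# Synchronize the cluster (distributed deployments), then stop and start Caspida.
apply_changes() {
  run /opt/caspida/bin/Caspida sync-cluster /etc/caspida/local/conf
  run /opt/caspida/bin/Caspida stop
  run /opt/caspida/bin/Caspida start
}

# Example usage (commented out so the sketch has no side effects):
# set_uba_property "$UBA_SITE" splunk.live.micro.batching.delay.seconds 180
# apply_changes
```

Keeping `DRY_RUN=1` as the default lets you review the exact commands before setting `DRY_RUN=0` on a real deployment.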

| Property | Description |

|---|---|

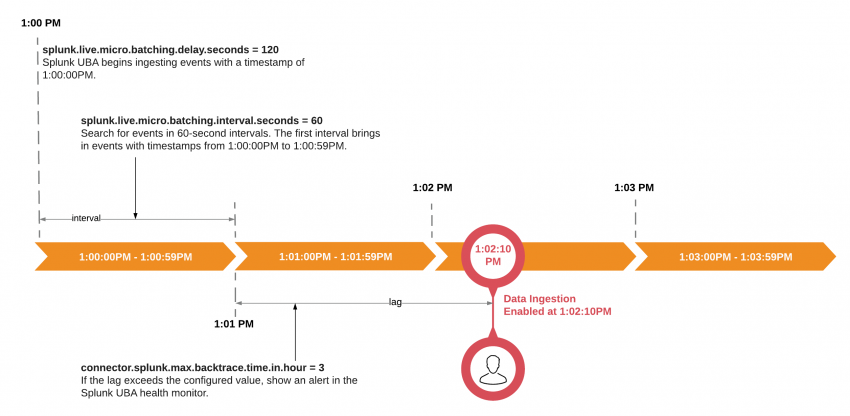

splunk.live.micro.batching.delay.seconds

|

The point in time when Splunk UBA begins data ingestion. The default is 180 seconds (3 minutes) earlier than the start of the current minute. For example, if data ingestion is enabled at 10 seconds past 1:02 PM, then the beginning of the minute is 1:02 PM. Specifying a delay of 120 seconds means that the first batch query begins processing events with a timestamp of 1:00 PM. |

splunk.live.micro.batching.interval.seconds

|

The length of the time in seconds for each batch query.

Do not configure the interval to exceed 4 minutes. |

connector.splunk.max.backtrace.time.in.hour

|

The window of time that determines when to begin data ingestion after a data source is stopped and then restarted. The default backtrace time is 4 hours.

|

The following image shows how the properties are related to each other and used together:
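To make the splunk.live.micro.batching.delay.seconds example concrete, the following Bash sketch computes the timestamp at which the first batch query begins. The function names `first_batch_start` and `hhmmss` are hypothetical. Times are assumed to be whole seconds on a UTC clock, such as seconds since midnight or Unix epoch seconds.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: where does the first batch query begin?
# Start of the current minute minus splunk.live.micro.batching.delay.seconds.

first_batch_start() {
  local enabled_at="$1" delay_seconds="${2:-180}"   # 180 s is the documented default
  local minute_start=$(( enabled_at / 60 * 60 ))   # truncate to the start of the minute
  echo $(( minute_start - delay_seconds ))
}

# Format seconds since midnight as HH:MM:SS (24-hour clock).
hhmmss() {
  local t="$1"
  printf '%02d:%02d:%02d\n' $(( t / 3600 % 24 )) $(( t / 60 % 60 )) $(( t % 60 ))
}

# Ingestion enabled at 1:02:10 PM (13:02:10), expressed as seconds since midnight.
enabled=$(( 13 * 3600 + 2 * 60 + 10 ))
hhmmss "$(first_batch_start "$enabled" 120)"   # delay of 120 s -> 13:00:00
hhmmss "$(first_batch_start "$enabled")"       # default 180 s  -> 12:59:00
```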

Splunk UBA expands the search windows of its micro-batch queries to ingest more events and compensate for lags in data ingestion. Searches run every minute, and for each search that completes in less than 60 seconds, the next search window is increased by 3 minutes. This lets Splunk UBA gradually overcome a data ingestion lag until ingestion is back to the configured initial delay.

If a search takes more than 60 seconds to complete, the next search window is reduced by 3 minutes, and the next search is issued immediately when the previous search finishes. This continues until a search again completes in less than 60 seconds.

Consider the timeline in the following example, where a data source is stopped at 12:00AM and then restarted at 1:00AM.

| Search Start Time | Search Duration | Search Time Window | Description of data ingestion |
|---|---|---|---|
| 1:00:00AM | 4 seconds | 1 minute | Ingest events occurring between 12:00AM - 12:01AM. Splunk UBA detects that there is a lag in the data ingestion. Since this search takes less than 60 seconds to complete, the next search window is increased by 3 minutes. |
| 1:01:00AM | 6 seconds | 4 minutes | Ingest events occurring between 12:01AM - 12:05AM. This search takes less than 60 seconds to complete, so the next search window is increased by 3 minutes. |
| 1:02:00AM | 22 seconds | 7 minutes | Ingest events occurring between 12:05AM - 12:12AM. This search takes less than 60 seconds to complete, so the next search window is increased by 3 minutes. |
| 1:03:00AM | 67 seconds | 10 minutes | Ingest events occurring between 12:12AM - 12:22AM. This search takes longer than 60 seconds to complete, so the next search window is reduced by 3 minutes and the next search is issued immediately, at 1:04:07AM. |
| 1:04:07AM | 61 seconds | 7 minutes | Ingest events occurring between 12:22AM - 12:29AM. This search takes longer than 60 seconds to complete, so the next search window is reduced by 3 minutes and the next search is issued immediately, at 1:05:08AM. |
| 1:05:08AM | 26 seconds | 4 minutes | Ingest events occurring between 12:29AM - 12:33AM. This search takes less than 60 seconds to complete, so the next search window is increased by 3 minutes and the next search runs one minute after this one started, at 1:06:08AM. |
| 1:06:08AM | 31 seconds | 7 minutes | Ingest events occurring between 12:33AM - 12:40AM. |

This process continues until there is no more lag in the data ingestion, at which point the search window is returned to the default interval of 1 minute.
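The example timeline can be reproduced with a short simulation of the window-adjustment rules. This is a Bash sketch under stated assumptions, not Splunk UBA's implementation: search durations are supplied as input rather than measured, a search taking 60 seconds or more is treated as slow, the window is assumed never to drop below 1 minute, and the final return to the 1-minute interval once the lag is cleared is not modeled. `simulate_windows` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical simulation of the adaptive micro-batch search window.
# Input: the duration in seconds of each successive search.
# Output: when each search starts (seconds after restart) and which event
# range it covers (minutes after the point where ingestion resumes).

simulate_windows() {
  local start=0      # search start time, seconds after the data source restarts
  local from=0       # start of the event range, minutes after the resume point
  local window=1     # current search window in minutes (default interval)
  local duration
  for duration in "$@"; do
    printf 'search +%ds: events %d-%dm (%dm window), took %ds\n' \
      "$start" "$from" "$(( from + window ))" "$window" "$duration"
    from=$(( from + window ))
    if (( duration < 60 )); then
      window=$(( window + 3 ))        # fast search: widen the next window by 3 minutes
      start=$(( start + 60 ))         # next search on the regular 1-minute schedule
    else
      window=$(( window - 3 ))        # slow search: narrow the next window by 3 minutes
      if (( window < 1 )); then window=1; fi   # assumed floor of 1 minute
      start=$(( start + duration ))   # next search starts as soon as this one ends
    fi
  done
}

# Durations from the example table: 4, 6, 22, 67, 61, 26, and 31 seconds.
simulate_windows 4 6 22 67 61 26 31
```

In the output, `search +247s: events 22-29m (7m window), took 61s` corresponds to the row that starts at 1:04:07AM and ingests events between 12:22AM and 12:29AM.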

If a data source is stopped for longer than the configured connector.splunk.max.backtrace.time.in.hour interval, some events are lost. For example, if a data source is stopped at 12:00AM and not restarted until 6:00AM, and connector.splunk.max.backtrace.time.in.hour is 4 hours, Splunk UBA ingests events starting from 2:00AM. The events between 12:00AM and 2:00AM cannot be recovered.
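The backtrace rule in the previous paragraph reduces to simple arithmetic: ingestion resumes at whichever is later, the stop time or the restart time minus the backtrace window. The following Bash sketch assumes all times are whole seconds on a common clock (here, seconds since midnight); `resume_point` and `lost_seconds` are hypothetical names.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: where does ingestion resume after a restart, and how
# much data is lost? The third argument is connector.splunk.max.backtrace.time.in.hour.

resume_point() {
  local stopped_at="$1" restarted_at="$2" backtrace_hours="${3:-4}"  # 4 h documented default
  local earliest=$(( restarted_at - backtrace_hours * 3600 ))
  if (( earliest > stopped_at )); then
    echo "$earliest"     # outage is longer than the backtrace window
  else
    echo "$stopped_at"   # the whole outage can be backfilled
  fi
}

# Seconds of events that cannot be recovered.
lost_seconds() {
  local resume
  resume="$(resume_point "$@")"
  echo $(( resume - $1 ))
}

# Stopped at 12:00AM (0), restarted at 6:00AM (21600), 4-hour backtrace.
echo "resume at $(( $(resume_point 0 21600 4) / 3600 )):00, lost $(( $(lost_seconds 0 21600 4) / 3600 )) h"
# -> resume at 2:00, lost 2 h
```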

Real-time search

Splunk UBA can perform real-time indexed queries against the Splunk platform to pull in events.

Use this method if you are experiencing problems with the default method of time-based data ingestion.

- Set the following property and value in the /etc/caspida/local/conf/uba-site.properties file:

splunk.live.micro.batching=false

- Synchronize the cluster in distributed deployments:

/opt/caspida/bin/Caspida sync-cluster /etc/caspida/local/conf

This method does not provide any monitoring services for your data ingestion. Only the default time-based search provides data ingestion health monitoring via the health monitor and Splunk UBA Monitoring app.
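Before or after making this change, you can check which ingestion method a properties file selects. The following Bash sketch is hypothetical (`ingestion_mode` is not a Splunk UBA command) and assumes plain `key=value` lines with no spaces around `=`, that the last assignment of a key wins, and that a missing key means the default time-based search.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: report which ingestion method uba-site.properties selects.
# Assumptions: key=value lines, last assignment wins, missing key = time-based default.

ingestion_mode() {
  local file="${1:-/etc/caspida/local/conf/uba-site.properties}" value
  # Match only the exact key, not splunk.live.micro.batching.delay.seconds and so on.
  value="$(grep -E '^splunk\.live\.micro\.batching=' "$file" 2>/dev/null \
    | tail -n 1 | cut -d= -f2- | tr -d '[:space:]')"
  if [ "$value" = "false" ]; then
    echo "real-time search"
  else
    echo "time-based search"
  fi
}

ingestion_mode "$@"
```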

Direct to Kafka

Use this method to push data from the Splunk platform to Splunk UBA when a single data source exceeds 10,000 events per second (EPS).

See Send data from the Splunk platform directly to Kafka in the Splunk UBA Kafka Ingestion App manual.
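To judge whether a data source crosses the 10,000 EPS guideline, you can estimate its average rate from an event count over a known time span. The following Bash sketch is an assumption-laden illustration: `average_eps` and `suggest_method` are hypothetical names, and an average over a long span hides peaks, so treat the result as a lower bound on the real rate.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: estimate average events per second (EPS) for one data
# source and compare it with the 10,000 EPS guideline for Direct to Kafka.

average_eps() {
  local events="$1" seconds="$2"
  echo $(( events / seconds ))   # integer division, rounds down
}

suggest_method() {
  local eps="$1"
  if (( eps > 10000 )); then
    echo "consider Direct to Kafka"
  else
    echo "time-based search (default)"
  fi
}

# One billion events per day (86,400 seconds) averages about 11,574 EPS.
eps=$(average_eps 1000000000 86400)
echo "$eps EPS: $(suggest_method "$eps")"
```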

How Splunk UBA handles data from different time zones

Splunk UBA uses the _time field as the timestamp for all events ingested from the Splunk platform. By default, the Splunk platform stores the UTC epoch time of the event in the _time field. See How timestamp assignment works in the Splunk Enterprise Getting Data In manual.

If the Splunk platform does not store the UTC epoch time of events in the _time field, you might see anomalies and threats generated later than expected.

For information about how Splunk UBA handles time zones for file-based data sources, see Add file-based data sources to Splunk UBA.


This documentation applies to the following versions of Splunk® User Behavior Analytics: 5.0.0, 5.0.1, 5.0.2, 5.0.3, 5.0.4, 5.0.4.1
