Aggregate records in a pipeline

Use the stats function to perform one or more aggregation calculations on your streaming data. For each aggregation calculation that you want to perform, specify the aggregation functions, the subset of data to perform the calculation on (fields to group by), the timestamp field for windowing, and the output fields for the results.

After the given window time has passed, the aggregation function outputs the records in your data stream with the user-defined output fields, the fields to group by, and the window length that the aggregations occurred in. The aggregation function drops all other fields from the event schema.

The stats function has no concept of wall clock time; the passage of time is based on the timestamps of incoming records. The stats function tracks the latest timestamp it has received in the stream as the "current" time, and it determines the start and end of windows using this timestamp. Once the difference between the current timestamp and the start timestamp of the current window is greater than the window length, that window is closed and a new window starts. However, because events can arrive out of order, the grace period argument allows the previous window W to remain "open" for a period G after its closing timestamp T. Until the function receives a record with a timestamp C where C > T + G, any incoming events with a timestamp less than T are counted towards the previous window W.
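The windowing and grace period behavior described above can be illustrated with a small simulation. This is a minimal sketch, not the Stats function's actual implementation: the class name `EventTimeCounter`, its methods, and the choice to align windows to multiples of the window length are all assumptions made for illustration, and timestamps are assumed to be integers in milliseconds.

```python
from collections import defaultdict


class EventTimeCounter:
    """Hypothetical model of event-time windows with a grace period."""

    def __init__(self, window_ms, grace_ms):
        self.window_ms = window_ms
        self.grace_ms = grace_ms
        self.current_time = None              # latest timestamp seen so far
        self.open_windows = defaultdict(int)  # window start -> event count
        self.dropped = 0                      # events that arrived too late

    def _is_closed(self, window_start):
        # A window with closing timestamp T is closed once a record with
        # timestamp C > T + G has been seen.
        window_end = window_start + self.window_ms
        return self.current_time > window_end + self.grace_ms

    def add(self, timestamp_ms):
        """Process one event; return the (start, count) of any windows it closes."""
        # "Current" time only moves forward, driven by record timestamps.
        if self.current_time is None or timestamp_ms > self.current_time:
            self.current_time = timestamp_ms

        # Assumption: windows are aligned to multiples of the window length.
        window_start = timestamp_ms - timestamp_ms % self.window_ms
        if self._is_closed(window_start):
            self.dropped += 1
        else:
            self.open_windows[window_start] += 1

        closed = sorted(s for s in self.open_windows if self._is_closed(s))
        return [(s, self.open_windows.pop(s)) for s in closed]


counter = EventTimeCounter(window_ms=60_000, grace_ms=10_000)
emitted = []
for ts in [5_000, 30_000, 61_000, 59_000, 71_000, 58_000]:
    emitted.extend(counter.add(ts))
print(emitted)          # [(0, 3)]
print(counter.dropped)  # 1
```

In this run, the event at 59,000 arrives after the event at 61,000 but is still counted in the first window, because no record later than T + G = 70,000 has been seen yet. The event at 71,000 closes the first window, so the event at 58,000 that follows is dropped in this model.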

List of aggregation functions

You can use the following aggregation functions within the Stats streaming function:

- average: Calculates the average in a time window.

- count: Counts the number of non-null values in a time window.

- max: Returns the max value in a time window.

- min: Returns the min value in a time window.

- sum: Returns the sum of values in a time window.
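As a rough illustration of what each aggregation computes over the values collected in one window, consider the following sketch. The function name `aggregate_window` is hypothetical, and skipping null values for average, max, min, and sum is an assumption; the list above only states that count ignores nulls.

```python
def aggregate_window(values):
    """Compute the five aggregations over one window's values.

    Assumption: None (null) values are skipped by every aggregation.
    """
    present = [v for v in values if v is not None]
    if not present:
        return {"average": None, "count": 0, "max": None, "min": None, "sum": None}
    return {
        "average": sum(present) / len(present),
        "count": len(present),  # number of non-null values
        "max": max(present),
        "min": min(present),
        "sum": sum(present),
    }


result = aggregate_window([2, None, 4, 6])
print(result)
# {'average': 4.0, 'count': 3, 'max': 6, 'min': 2, 'sum': 12}
```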

Count the number of times a source appeared per host

Suppose you wanted to count the number of times a source appeared in a given time window per host. This example does the following:

- Uses the count stats function and outputs the result to num_events_with_source_field.

- Groups the outputted fields by host.

- Executes the aggregations in a time-window of 60 seconds based on the timestamp of your record.

- From the Data Pipelines Canvas view, click on the + icon and add the Stats function to your pipeline.

- In the Stats function, add a new Group By. In Field/Expression, type host, and type host in Output Field. Click OK.

- In the Timestamp field, enter timestamp.

- Click on the New Aggregations dropdown, and click count.

- Type source in Field/Expression, and type num_events_with_source_field in Output Field.

- Click Validate. After validating, you can see what your record schema will look like after your data passes through the Stats function.

- Click Start Preview, and then click the Stats function to verify that your data is being aggregated. In this example, we are using the default time-window of 60 seconds, so your preview data for Stats shows up after 60 seconds have passed between the timestamps of your records.
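To make the effect of this configuration concrete, the following sketch approximates it outside the product: it counts non-null source values per host in 60-second windows and keeps only the group-by field, the output field, and window information. It is an approximation under stated assumptions, not the Stats function itself. The function name `count_source_per_host`, the `window_start` and `window_length_ms` field names, the sample records, and the use of integer millisecond timestamps are hypothetical, and out-of-order handling is left out.

```python
from collections import Counter

WINDOW_MS = 60_000  # 60-second window, as in the example


def count_source_per_host(records):
    """Count non-null `source` values per host in each 60-second window."""
    counts = Counter()
    for record in records:
        ts = record["timestamp"]
        key = (ts - ts % WINDOW_MS, record["host"])
        # count only increments for non-null values of the field
        counts[key] += 1 if record.get("source") is not None else 0

    # Only the group-by field, the output field, and window information
    # are kept; all other fields are dropped.
    return [
        {
            "host": host,
            "num_events_with_source_field": n,
            "window_start": window_start,    # hypothetical field name
            "window_length_ms": WINDOW_MS,   # hypothetical field name
        }
        for (window_start, host), n in sorted(counts.items())
    ]


sample = [
    {"timestamp": 1_000, "host": "a", "source": "x", "body": "..."},
    {"timestamp": 2_000, "host": "a", "source": "y", "body": "..."},
    {"timestamp": 3_000, "host": "b", "source": "x", "body": "..."},
    {"timestamp": 4_000, "host": "a", "source": None, "body": "..."},
    {"timestamp": 61_000, "host": "a", "source": "x", "body": "..."},
]
for row in count_source_per_host(sample):
    print(row)
```

With this sample, host a has two events with a source in the first window and one in the second, and host b has one in the first window.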

Example output

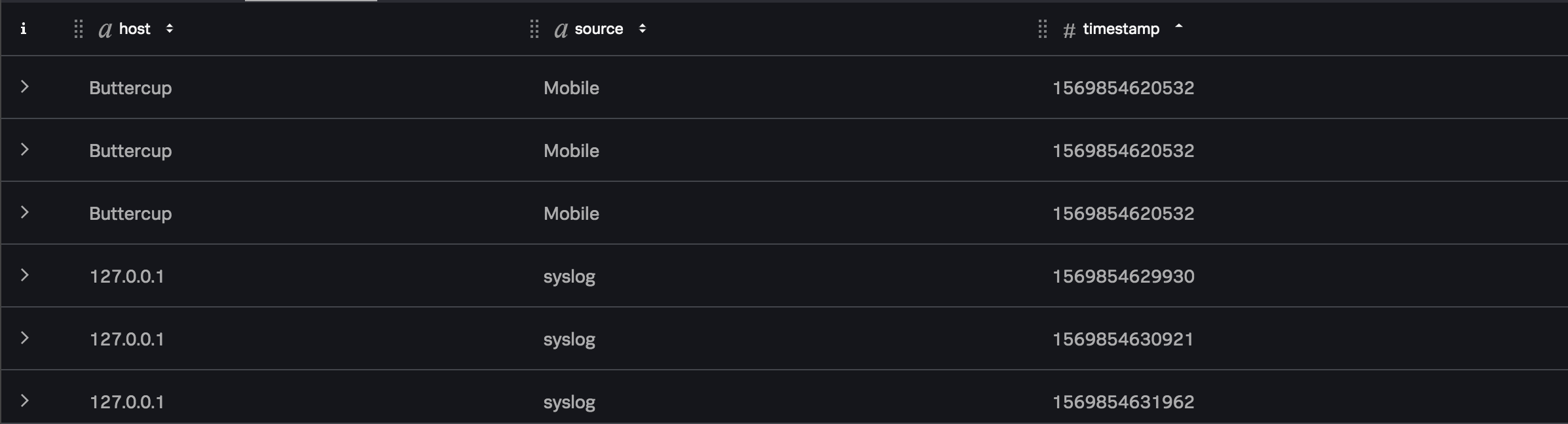

If your data stream contained the following data:

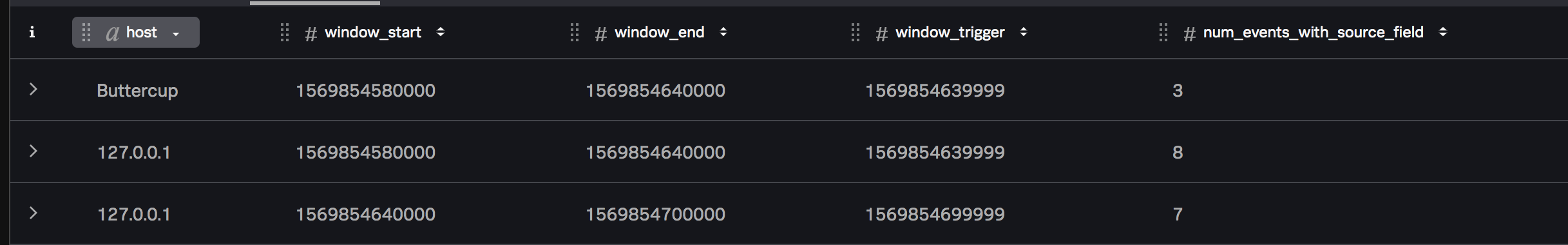

The Stats function outputs records that span the given window size, and writes the results of the aggregate calculations to the num_events_with_source_field field in each record as follows:


This documentation applies to the following versions of Splunk® Data Stream Processor: 1.1.0
