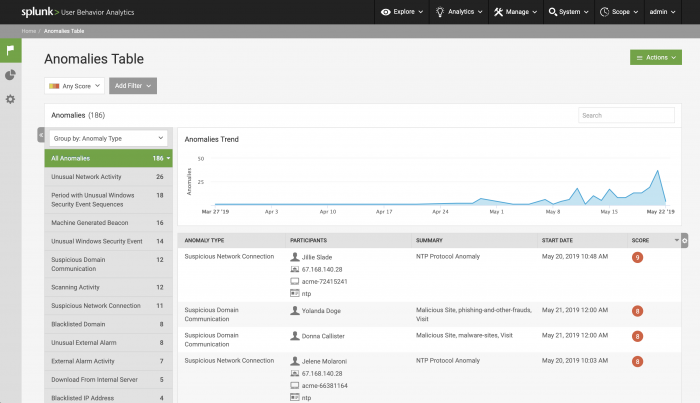

Review anomalies on the anomalies table

The anomalies table provides a view of all current anomalies in your environment. Select Explore > Anomalies to view the table.

About anomalies

Data science models and anomaly rules can generate anomalies. Anomalies are created from facts identified in your events and represent suspicious activity. Anomalies are not confirmed evidence of malicious activity. For example:

- The user device exfiltration model looks for events that reveal the data sent out by an application, the data received by an application, the data received by a source zone, and the data sent out to a destination zone. Based on the patterns in those events and a baseline identified in the model, the model creates an anomaly if one of those behaviors is anomalous.

- Anomaly rules look for a specific series of events. For example, one rule-based anomaly looks for a specific number of DLP alarms triggered by a user over a period of time.
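The DLP example above is a count over a time window. The following minimal sketch shows that idea under stated assumptions: the `DlpAlarm` record, the `users_with_dlp_bursts` function, and the `threshold` and `window_seconds` parameters are all hypothetical illustrations, not the actual Splunk UBA rule definition or its default values.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DlpAlarm:
    # Hypothetical event record: which user triggered the alarm and when.
    user: str
    timestamp: float  # seconds since epoch


def users_with_dlp_bursts(alarms, threshold, window_seconds):
    """Return users with at least `threshold` DLP alarms inside any window of `window_seconds`."""
    by_user = defaultdict(list)
    for alarm in alarms:
        by_user[alarm.user].append(alarm.timestamp)

    flagged = set()
    for user, times in by_user.items():
        times.sort()
        start = 0
        for end, t in enumerate(times):
            # Advance the left edge until the window spans at most window_seconds.
            while t - times[start] > window_seconds:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(user)
                break
    return flagged


alarms = [
    DlpAlarm("alice", 0), DlpAlarm("alice", 60), DlpAlarm("alice", 120),
    DlpAlarm("bob", 0), DlpAlarm("bob", 5000),
]
print(users_with_dlp_bursts(alarms, threshold=3, window_seconds=300))
```

A sliding window is used so that a burst is detected regardless of where it falls relative to fixed clock boundaries.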

Take action on anomalies

When viewing anomalies on the anomalies table, you can take action on the anomalies shown. Use the Actions button to delete, change the watchlists, or change the score of any anomaly listed in the table. See Delete anomalies in Splunk UBA for more information about deleting anomalies.

You can also click the Anomaly Rules icon. From the Anomaly Rules page, you can configure anomaly action rules and anomaly scoring rules.

Add anomalies to a watchlist

You can add the anomalies shown in the table to a watchlist. For example, add all the anomalies that currently exist with a type of Unusual Machine Access and that are associated with users in the security department to a False Positive watchlist.

- Filter the table to show only the anomalies you want to take action on. For example, select an anomaly type of Unusual Machine Access, then click Add Filter, select Department, and select Security.

- Click Actions and select the action you want to take. For example, select Change Watchlists of Selected.

- Fill out the fields as needed. For example, add the selected anomalies to the False Positive watchlist, because it is typical for employees in the security department to log in to unusual machines.

- Click OK to save.

- Review the Anomalies by Watchlist panel on the Anomalies dashboard to confirm that the anomalies were added to the watchlist.
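The steps above amount to "select by type and department, then tag the selection." This minimal sketch models that selection in plain Python; the `Anomaly` fields and the `add_to_watchlist` helper are hypothetical and do not correspond to a Splunk UBA API. In the product, you perform these steps in the UI.

```python
from dataclasses import dataclass, field


@dataclass
class Anomaly:
    # Hypothetical fields standing in for the table columns used as filters.
    anomaly_id: int
    anomaly_type: str
    department: str
    watchlists: set = field(default_factory=set)


def add_to_watchlist(anomalies, anomaly_type, department, watchlist):
    """Add every anomaly matching both filters to `watchlist`; return the matched IDs."""
    selected = [
        a for a in anomalies
        if a.anomaly_type == anomaly_type and a.department == department
    ]
    for a in selected:
        a.watchlists.add(watchlist)
    return [a.anomaly_id for a in selected]


anomalies = [
    Anomaly(1, "Unusual Machine Access", "Security"),
    Anomaly(2, "Unusual Machine Access", "Finance"),
    Anomaly(3, "Machine Generated Beacon", "Security"),
]
print(add_to_watchlist(anomalies, "Unusual Machine Access", "Security", "False Positive"))
```

Both filters must match, which mirrors how adding a second filter in the table narrows the rows shown.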

See Investigate Splunk UBA entities using watchlists for more information about watchlists.

Create anomaly action rules

Create anomaly action rules to take an action automatically in the future. For example, you can affect the future risk scores for all anomalies of a particular type, create rules that delete certain anomalies, or add anomalies to a watchlist. See Take action on anomalies with anomaly action rules for more about anomaly action rules.
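Conceptually, an action rule pairs a match condition with an action that is applied to anomalies generated later. The sketch below illustrates that pairing under loud assumptions: the `ActionRule` and `IncomingAnomaly` classes, the `"delete"`, `"watchlist"`, and `"score"` action names, and the match-by-type condition are all hypothetical simplifications, not the Splunk UBA rule format.

```python
from dataclasses import dataclass, field


@dataclass
class IncomingAnomaly:
    # Hypothetical newly generated anomaly.
    anomaly_type: str
    score: int
    watchlists: set = field(default_factory=set)


@dataclass
class ActionRule:
    anomaly_type: str      # hypothetical match condition: exact anomaly type
    action: str            # hypothetical actions: "delete", "watchlist", or "score"
    value: object = None   # watchlist name or new score, depending on the action


def apply_rules(anomaly, rules):
    """Apply every matching rule in order; return None if a rule deletes the anomaly."""
    for rule in rules:
        if rule.anomaly_type != anomaly.anomaly_type:
            continue
        if rule.action == "delete":
            return None
        if rule.action == "watchlist":
            anomaly.watchlists.add(rule.value)
        elif rule.action == "score":
            anomaly.score = rule.value
    return anomaly


rules = [
    ActionRule("Unusual Machine Access", "watchlist", "False Positive"),
    ActionRule("Unusual Machine Access", "score", 3),
    ActionRule("Machine Generated Beacon", "delete"),
]
print(apply_rules(IncomingAnomaly("Unusual Machine Access", 6), rules))
```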

Customize anomaly scoring rules

Customize anomaly scoring rules to influence the anomaly scores in your system and provide input to the internal scoring logic for anomalies. By editing existing rules, you can also see how the score for each anomaly is generated and adjust the rules as needed for your environment to provide the level of consistency you want. See Customize anomaly scoring rules for more about anomaly scoring rules.

Splunk UBA adjusts threats after you take action on anomalies

After you take action on an anomaly, Splunk UBA compares the changed anomalies to existing threats. This means that after you delete existing anomalies, restore deleted anomalies, or adjust the score of existing anomalies, existing threats can be deleted or rescored, and new threats can be created.

For example, after you delete one or more anomalies, Splunk UBA recomputes threats to remove those that the deleted anomalies contributed to. Until threat computation completes, which can take a few minutes, the system can still show threats that are based on the now-deleted anomalies.
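To make "deleted or rescored" concrete, here is a deliberately simplified sketch. It is not how Splunk UBA computes threats; it assumes a hypothetical model where each `Threat` is just a set of contributing anomaly IDs, a threat survives only if at least one contributing anomaly survives, and its score is the highest remaining anomaly score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    # Hypothetical threat: a name plus the IDs of the anomalies it is built from.
    name: str
    anomaly_ids: frozenset


def recompute_threats(threats, anomaly_scores, deleted_ids):
    """Drop deleted anomalies from each threat and rescore what is left.

    Hypothetical rule: a threat with no remaining anomalies is removed;
    otherwise its score becomes the highest remaining anomaly score.
    """
    result = {}
    for threat in threats:
        remaining = threat.anomaly_ids - set(deleted_ids)
        if remaining:
            result[threat.name] = max(anomaly_scores[i] for i in remaining)
    return result


scores = {1: 7, 2: 4, 3: 9}
threats = [Threat("T1", frozenset({1, 2})), Threat("T2", frozenset({3}))]
print(recompute_threats(threats, scores, {1}))
```

In this model, deleting anomaly 1 rescores T1, while deleting anomaly 3 removes T2 entirely, matching the two outcomes the text describes.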

Learn more about a specific anomaly

Click an anomaly on the table to open the Anomaly Details page. See Anomaly Details.

Filter the anomaly table

Filter a table and save your filter settings to speed up hunting activities and improve your investigation capabilities. You can filter the table based on the types of users involved in anomalies, the department, and many other factors.

Filter the anomaly table by anomaly type

For example, suppose a malware investigation shows that a specific type of malware contacts a command and control server at a regular interval of 10 seconds. To identify the servers potentially infected by that malware based on their beaconing activity, filter for machine-generated beacons that match that pattern.

- Open the anomalies table.

- Click Add Filter and select Anomaly Type.

- Select Machine Generated Beacon.

- Click the filter icon for Machine Generated Beacon.

- Select a Period that is equal to 10 seconds.

- Select Yes for Regular Period? to look only for beacons sent on a regular basis.

- Click OK.
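The filter configured in the steps above keeps only anomalies that satisfy all three conditions at once. This minimal sketch expresses the same conjunction; the `BeaconAnomaly` fields (`period_seconds`, `regular_period`) and the `find_beaconing_devices` helper are hypothetical stand-ins for the Period and Regular Period? filter options.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BeaconAnomaly:
    # Hypothetical fields mirroring the filter options used in the steps.
    device: str
    anomaly_type: str
    period_seconds: int
    regular_period: bool


def find_beaconing_devices(anomalies, period_seconds=10):
    """Return devices with regular Machine Generated Beacon anomalies at the given period."""
    return sorted({
        a.device for a in anomalies
        if a.anomaly_type == "Machine Generated Beacon"
        and a.period_seconds == period_seconds
        and a.regular_period
    })


anomalies = [
    BeaconAnomaly("srv-01", "Machine Generated Beacon", 10, True),
    BeaconAnomaly("srv-02", "Machine Generated Beacon", 10, False),
    BeaconAnomaly("srv-03", "Machine Generated Beacon", 60, True),
    BeaconAnomaly("srv-04", "Unusual Machine Access", 10, True),
]
print(find_beaconing_devices(anomalies))
```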

To quickly filter the table by anomaly type, click the filter icon for an anomaly type. To select all values in a filter option, press Shift and click.

Save the filter for future reference and review any anomalies that are currently shown.

Filter the anomaly table by anomaly category

Anomaly categories provide additional context for each anomaly type, such as the anomaly source, scope, and detection type. Instead of filtering for a specific anomaly type, you can filter by category, for example to show all anomalies based on Active Directory data.

Filter anomalies by category by performing the following tasks. For example, to see anomalies tagged with malware-related data:

- Open the anomalies table.

- Click Add Filter and select Anomaly Category.

- Select Malware Activity, Malware Install, and Malware Persistence. Leave the conditional filters set to Includes and Contains Any.
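One way to read the Includes and Contains Any settings in the steps above is as a set-overlap test: an anomaly matches if it carries at least one of the selected categories. The sketch below assumes that interpretation; the dictionary layout and the `contains_any` helper are hypothetical, not the Splunk UBA filter implementation.

```python
def contains_any(anomalies, selected_categories):
    """Keep anomalies tagged with at least one of the selected categories."""
    selected = set(selected_categories)
    return [a for a in anomalies if not selected.isdisjoint(a["categories"])]


anomalies = [
    {"id": 1, "categories": {"Malware Install", "EndPoint Data"}},
    {"id": 2, "categories": {"Active Directory Data"}},
    {"id": 3, "categories": {"Malware Persistence"}},
]
malware = ["Malware Activity", "Malware Install", "Malware Persistence"]
print([a["id"] for a in contains_any(anomalies, malware)])
```

Because each anomaly can carry several categories, an overlap test rather than an equality test is what lets one filter pick up all three malware-related tags.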

| Anomaly Category | Description |
|---|---|
| Anomaly categories that define the anomaly source. | |
| EndPoint Data | Use this category for any anti-virus or other endpoint protection system running on a host. |
| Active Directory Data | Use this category for Microsoft's Active Directory. |
| Firewall Data | Use this category for any network-based firewall or system that provides information about network connections beyond hostnames or IP addresses, such as NetFlow, DNS, and web proxy logs. |
| Application Log | Use this category when the data is from a single application log, such as Apache, JIRA, Firefox, or any other application that is monitored. This category should not include general operating system logs. |
| Network IDS/IPS Data | Use this category for network-based intrusion detection systems (IDS) or intrusion prevention systems (IPS), or for external security logic products in general. |
| Cloud Data | Use this category for data from a cloud provider, such as Amazon, Box, or Office365. |
| Badge Data | Use this category for data from physical access control logs. |
| Printer Data | Use this category for any data based on printer logs. |
| Anomaly categories that define the anomaly scope. | |
| Internal | Use this category for detections containing a network component, where both endpoints are considered internal, such as on-prem entities. |
| External Alarm | Use this category for general alarms or other external activity that does not fall under External Attack or External Scan. |
| External Attack | Use this category for attacks originating outside of your organization. |
| External Scan | Use this category for scanning activity originating outside of your organization. |
| Incoming | Use this category when the behavior described by this detection had a network component with one internal side and one external side, and the direction of the behavior is external to internal. |
| Outgoing | Use this category when the behavior described by this detection had a network component with one internal side and one external side, and the direction of the behavior is internal to external. |
| Local | Use this category when the behavior has no network component, such as local privilege escalation or badge-based detections. |
| Anomaly categories that define the detection type. | |
| Signature | Use this category for anomalies based on a known attack pattern, such as a configured threshold being breached. |
| Behavior | Use this category for anomalies based on a change in behavior relative to previously observed and tracked patterns. |
| Anomaly categories that define a status. | |
| Allowed | Use this category when the targeted behavior was successful. In general, Splunk UBA assumes that anything not marked Blocked is allowed, but there are cases when you may want to explicitly add this category. For example, if a firewall or other content inspection system allowed a transfer or connection that you believe was hostile, you can add Allowed to point out that it got past a gatekeeper. |
| Blocked | Use this category when the targeted behavior was attempted but not successful. |
| Anomaly categories that define a stage in the MITRE ATT&CK phases. | |
| Recon | Use this category for scanning, DNS enumeration, or other observable attempts to gain information about your network. |
| Initial Access | Use this category for any vectors that attackers use to gain an initial foothold within a network. |
| Execution | Use this category for any techniques resulting in the execution of an attacker's code on a local or remote system. This tactic is often used in conjunction with initial access to gain lateral movement and expand access to remote systems on a network. |
| Persistence | Use this category for any access, action, or configuration change to a system that gives an attacker a persistent presence on that system. Attackers often need to maintain access to systems through interruptions such as system restarts, loss of credentials, or other failures that would require a remote access tool to restart in order to regain access. |
| Privilege Escalation | Use this category for actions that allow an attacker to obtain a higher level of permissions on a system or network. Certain tools or actions require a higher level of privilege to work and are likely necessary at many points throughout an operation. Attackers can enter a system with unprivileged access, then take advantage of a system weakness to obtain elevated privileges. |
| Defense Evasion | Use this category for techniques an attacker may use to evade detection or avoid defenses. Sometimes these actions are the same as or variations of techniques in other categories that have the added benefit of subverting a particular defense or mitigation. Defense evasion may be considered a set of attributes the attacker applies to all other phases of the operation. |
| Credential Access | Use this category for techniques resulting in access to or control over system, domain, or service credentials that are used within an enterprise environment. Attackers will likely attempt to obtain legitimate credentials from users or administrator accounts (local system administrators or domain users with administrator access) to use within the network. This allows the attacker to assume the identity of the account, with all of that account's permissions on the system and network, and makes it harder for defenders to detect the attacker. With sufficient access within a network, an attacker can create accounts for later use within the environment. |
| Discovery | Discovery consists of techniques that allow the attacker to gain knowledge about the system and internal network. When attackers gain access to a new system, they must orient themselves to what they now control and what benefits operating from that system give to their current objective or overall goals during the intrusion. The operating system provides many native tools that aid in this post-compromise information-gathering phase. |
| Lateral Movement | Lateral movement consists of techniques that enable an attacker to access and control remote systems on a network and can, but does not necessarily, include execution of tools on remote systems. Lateral movement techniques can allow an attacker to gather information from a system without needing additional tools, such as a remote access tool. |
| Collection | Collection consists of techniques used to identify and gather information, such as sensitive files, from a target network prior to exfiltration. This category also covers locations on a system or network where the attacker may look for information to exfiltrate. |
| Exfiltration | Use this category for techniques and attributes that result or aid in the attacker removing files and information from a target network. This category also covers locations on a system or network where the attacker may look for information to exfiltrate. |
| Command And Control | Use this category for any method used by an attacker to communicate with systems under their control. There are many ways an attacker can establish command and control with various levels of covertness, depending on system configuration and network topology. Due to the wide degree of variation available to the attacker at the network level, only the most common factors were used to describe the differences in command and control. There are still a great many specific techniques within the documented methods, largely due to how easy it is to define new protocols and use existing, legitimate protocols and network services for communication. |
| Anomaly categories that define a consequence as a result of the detected behavior. | |
| Infection | Use this category for installation of malware on one of the included entities. |
| Reduced Visibility | Use this category for reduction in log output or other data used for detection, implying a deliberate attempt to hide an attacker's actions. |
| Data Destruction | Use this category when data is deleted or encrypted. This is distinctly different from Reduced Visibility, because the goal is to destroy the data, not to hide activity. |
| Denial Of Service | Use this category when an asset no longer performs its normal functions. |
| Loss Of Control | Use this category when an asset is no longer under your control. |
| Anomaly categories that define the rarity of an entity. | |
| Rare User | Use this category when a rare user is involved in the detection. This is typically applied to behavioral detections based on other entities such as devices, printers, or services. |
| Rare Process | Use this category for rare processes or applications. This can apply to app detection like PAN or Blue Coat, process names, or process IDs. |
| Rare Device | Use this category when there are rare physical devices or virtual machines. This can be set if you are working with endpoint data, relying on identity resolution, or have a way to identify individual machines or instances. If you are relying on IP/Network or domains for determination, use the more specific Rare Network or Rare Domain category instead. |
| Rare Domain | Use this category when rare domains are involved, such as Active Directory domains, a DNS domain, a Kerberos realm, or a tenant identifier. |
| Rare Network | Use this category for rare networks, based on IP address, network blocks, firewall zones, NetFlow interfaces, or other indicators based on routing packets. |
| Rare Location | Use this category when there was a rare location, such as a GeoLocation based on an IP address or endpoint, or a physical location based on badge data. |
| Anomaly categories that define additional metadata. | |
| Peer Group | Use this category when the behavioral detection has also considered the behavior of the user's peers. |
| Brute Force | Use this category when there is an attempt to gain access to a system by making a high volume of login attempts. |
| Policy Violation | Use this category when there is a violation of company policy. |
| Blacklisted | Use this category when an external source has reported indicators of compromise, threatening locations, or other security threats. |
| External | Use this category to refer to the detection source, not to company boundaries. For example, the detection code may report that another Splunk model or source has marked an entity as Blacklisted. |
| Flight Risk | Use this category for anomalies that indicate a user may plan on leaving the organization. |
| Removable Storage | Use this category for anomalies that detect USB drives or other physical storage devices capable of easy removal. |


This documentation applies to the following versions of Splunk® User Behavior Analytics: 5.0.0, 5.0.1, 5.0.2, 5.0.3, 5.0.4, 5.0.4.1, 5.0.5, 5.0.5.1, 5.1.0, 5.1.0.1
