Migration guide for OpenTelemetry Java 2.x metrics

OpenTelemetry Java Instrumentation 2.x contains a set of breaking changes, introduced as part of recent OpenTelemetry HTTP semantic convention updates. Versions 2.5.0 and higher of the Splunk Distribution of OpenTelemetry Java are fully compatible with the updated semantic conventions.

These migration instructions assume the following:

You’re sending Java application metrics using version 1.x of the Splunk Distribution of OpenTelemetry Java.

You’ve created custom dashboards, detectors, or alerts based on Java application metrics.

Follow the steps in this guide to migrate to 2.x metrics and HTTP semantic conventions, and to convert your custom reporting elements to the new metrics format.

Note

Version 2.x of the Java agent collects metrics and logs by default. This might result in increased data ingest costs.

Caution

The default protocol changed in the Java 2.0 instrumentation from gRPC to http/protobuf. For custom configurations, verify that you’re sending data to http/protobuf endpoints. For troubleshooting guidance, see Telemetry export issues.
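As a rough aid for the check described above, the following sketch classifies an endpoint URL by its port. It relies only on the conventional OTLP ports (4317 for gRPC, 4318 for HTTP), so it is a heuristic: a Collector can be configured to listen elsewhere. The function name `check_otlp_endpoint` is hypothetical and not part of any Splunk or OpenTelemetry tooling.

```python
from urllib.parse import urlsplit

# Conventional OTLP receiver ports (assumption: the Collector uses the defaults).
GRPC_PORT = 4317
HTTP_PORT = 4318


def check_otlp_endpoint(endpoint: str) -> str:
    """Return a short, port-based verdict for an OTLP endpoint URL."""
    parts = urlsplit(endpoint)
    if parts.scheme not in ("http", "https"):
        return "invalid: expected an http:// or https:// URL"
    if parts.port == GRPC_PORT:
        return "suspicious: port 4317 is conventionally the gRPC receiver"
    if parts.port == HTTP_PORT:
        return "ok: port 4318 is conventionally the http/protobuf receiver"
    return "unknown: nonstandard or missing port, verify the receiver manually"


if __name__ == "__main__":
    for url in ("http://localhost:4317", "http://localhost:4318"):
        print(url, "->", check_otlp_endpoint(url))
```

A "suspicious" or "unknown" verdict only means the endpoint deserves a manual look; it does not prove the configuration is wrong.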

Prerequisites

To migrate from OpenTelemetry Java 1.x to OpenTelemetry Java 2.x, you need the following:

Splunk Distribution of OpenTelemetry Collector version 0.98 or higher deployed

Administrator permissions in Splunk Observability Cloud. See Splunk Observability Cloud matrix of roles and capabilities.

If you’re instrumenting your Java services using the Splunk Distribution of OpenTelemetry Java 1.x or the equivalent upstream instrumentation, you can migrate to version 2.5.0 or higher of the Java agent.

Note

AlwaysOn Profiling metrics are not impacted by this change.

Migration best practices

The following best practices can help you when initiating the migration process:

Familiarize yourself with this documentation.

Read the release notes. See Releases on GitHub.

Use a development or test environment.

Migrate production services gradually and grouped by type.

Identify changes in your instrumentation settings.

Validate the data in Splunk Observability Cloud.

Verify the impact of HTTP semantic convention changes. See Migrate APM custom reporting to OpenTelemetry Java Agent 2.0.

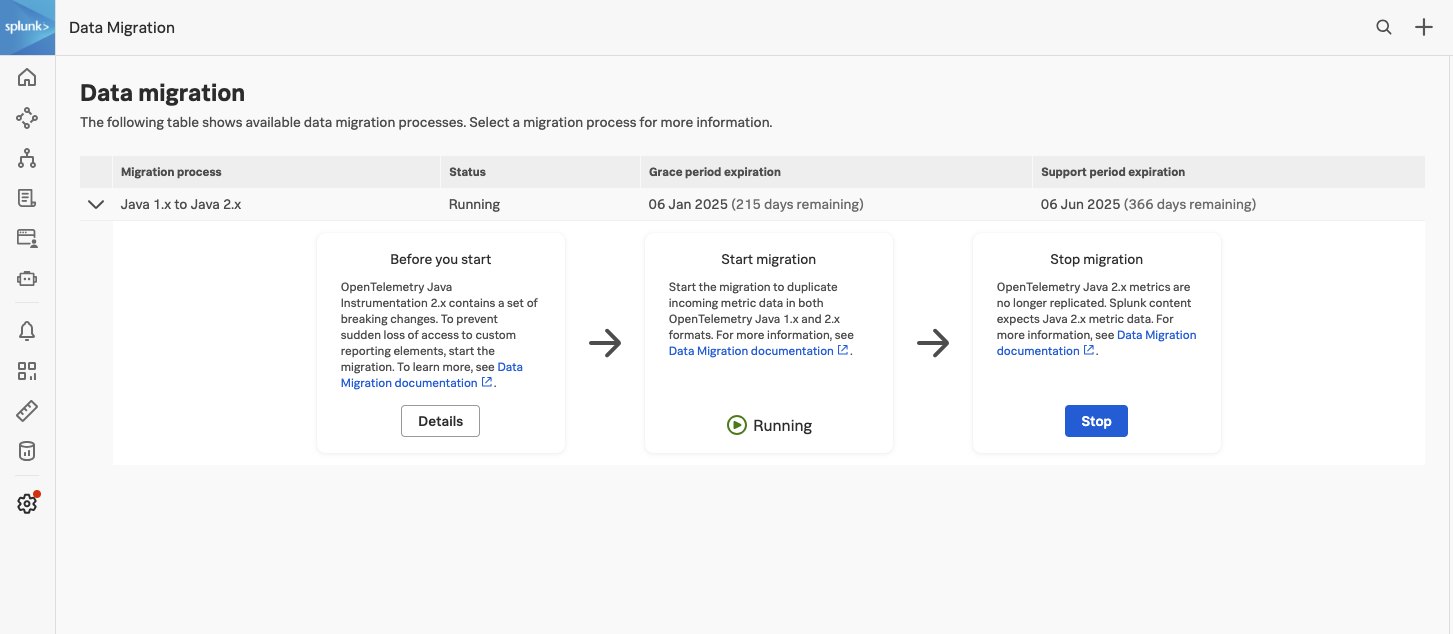

Use the Data Migration tool

Due to the changes in metric names, upgrading to Java OTel 2.x might break existing dashboards, detectors, and other features. To prevent sudden loss of access to custom reporting elements, use the Data Migration tool, which transforms and duplicates metric data from the new 2.x semantic conventions into the legacy 1.x format for a limited period of time at no additional cost.

To access the Data Migration tool:

Go to Settings, Data Configuration.

Select Data Migration.

Inside the Start migration card, select Start.

For each supported process, you can turn on and off the data migration, see the number of Metric Time Series (MTS) migrated, and view the grace period duration. The duplication and double-publishing of metrics follows a set of predefined rules that are activated when you decide to migrate.

Note

Metric rules are treated as system rules and can’t be edited.

Grace period

The grace period for receiving and processing duplicated metrics at no additional cost lasts 6 months, starting with the release of the Java agent version 2.5.0 on June 25, 2024 and ending on January 31, 2025.

Migration support is available for 12 months after the release of version 2.5.0 and will be deprecated after 18 months.

Note

After the grace period, duplicated metric data is billed as custom metric data. Make sure to turn off the Data Migration action after you’ve completed the migration to avoid surcharges.
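To keep track of the dates above, a small date calculation can help. The sketch below uses only the release date and end date stated in this section, and assumes the end date is inclusive, which the text does not say explicitly. The function names are hypothetical.

```python
from datetime import date

# Dates taken from the grace period description in this guide.
RELEASE_DATE = date(2024, 6, 25)      # Java agent 2.5.0 release
GRACE_PERIOD_END = date(2025, 1, 31)  # assumed to be the last free day


def in_grace_period(day: date) -> bool:
    """True if duplicated metrics are still free of charge on the given day."""
    return RELEASE_DATE <= day <= GRACE_PERIOD_END


def days_left(day: date) -> int:
    """Days remaining until the grace period ends, never negative."""
    return max((GRACE_PERIOD_END - day).days, 0)


if __name__ == "__main__":
    today = date.today()
    print("In grace period:", in_grace_period(today))
    print("Days left:", days_left(today))
```

When `days_left` returns 0 and the Data Migration action is still on, the duplicated metrics are the ones that would be billed as custom metrics.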

Migrate to OTel Java 2.x

To migrate your instrumentation to version 2.5.0 or higher of the Java agent, follow these steps:

Turn on the migration of Java metrics in the Data Migration page:

Go to Settings, Data Configuration.

Select Data Migration.

Inside the Start migration card, select Start.

Turn on OTLP histograms in the Splunk Distribution of the OpenTelemetry Collector.

To send histogram data to Splunk Observability Cloud, set the send_otlp_histograms option to true. For example:

    exporters:
      signalfx:
        access_token: "${SPLUNK_ACCESS_TOKEN}"
        api_url: "${SPLUNK_API_URL}"
        ingest_url: "${SPLUNK_INGEST_URL}"
        sync_host_metadata: true
        correlation:
        send_otlp_histograms: true

Make sure version 2.5.0 or higher of the Splunk Distribution of the Java agent is installed. See Upgrade the Splunk Distribution of OpenTelemetry Java.

If you defined a custom Collector endpoint for metrics, make sure to update the port and use the correct property:

    # Legacy property and value: -Dsplunk.metrics.endpoint=http(s)://collector:9943
    # You can also use the OTEL_EXPORTER_OTLP_METRICS_ENDPOINT environment variable
    -Dotel.exporter.otlp.metrics.endpoint=http://localhost:4318/v1/metrics

Review all other settings to check that they’re still applicable to version 2.5.0. See Configure the Java agent for Splunk Observability Cloud.

Migrate your custom reporting elements:

For Splunk APM, see Migrate APM custom reporting to OpenTelemetry Java Agent 2.0.

(Optional) Start using the new Java metrics 2.x built-in dashboards. Built-in dashboards are available for Java service metrics from both versions 1.x and 2.x.

When ready, turn off the migration:

Go to Settings, Data Configuration.

Select Data Migration.

In the Stop migration card, select Stop.

Caution

If you don’t turn off the Data Migration stream for Java metrics after the grace period, the duplicated metrics are billed as custom metrics. See Use the Data Migration tool.
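For the custom metrics endpoint step in the procedure above, the following sketch rewrites a list of JVM arguments so that the legacy `-Dsplunk.metrics.endpoint` property is replaced by `-Dotel.exporter.otlp.metrics.endpoint`. The new endpoint has to be supplied explicitly, because the legacy port cannot be translated automatically. The function name, the jar path, and the host name in the example are hypothetical.

```python
from __future__ import annotations

LEGACY_PREFIX = "-Dsplunk.metrics.endpoint="
NEW_PREFIX = "-Dotel.exporter.otlp.metrics.endpoint="


def migrate_jvm_args(args: list[str], new_endpoint: str) -> list[str]:
    """Return a copy of args with the legacy metrics endpoint property replaced."""
    migrated = []
    for arg in args:
        if arg.startswith(LEGACY_PREFIX):
            # Drop the legacy value and point at the http/protobuf endpoint.
            migrated.append(NEW_PREFIX + new_endpoint)
        else:
            migrated.append(arg)
    return migrated


if __name__ == "__main__":
    old_args = [
        "-javaagent:./splunk-otel-javaagent.jar",            # hypothetical path
        "-Dsplunk.metrics.endpoint=http://collector:9943",   # legacy setting
        "-jar",
        "app.jar",
    ]
    print(migrate_jvm_args(old_args, "http://collector:4318/v1/metrics"))
```

Arguments that don't use the legacy property are returned unchanged, so the helper is safe to run on services that never set a custom endpoint.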

New metric names for version 2.x

The following table shows the metrics produced by default by OpenTelemetry Java 2.0 and higher, together with their legacy equivalent from version 1.x.

| OTel Java 2.0 metric | Legacy metric (1.x) |
|---|---|

* This is a Splunk-specific metric and it’s not present in the upstream semantic conventions.

Note

The previous table contains metrics generated by default. Additional metrics might be emitted by supported metrics instrumentation, for example when instrumenting application servers.

For more information on the HTTP semantic convention changes, see HTTP semantic convention stability migration guide on GitHub.

Metrics no longer reported

Due to changes in the metrics emitted by the Java instrumentation version 2.5.0 and higher, detectors or dashboards that use the following metrics might not work as they did before the migration:

db.pool.connections

executor.tasks.completed

executor.tasks.submitted

executor.threads

executor.threads.active

executor.threads.core

executor.threads.idle

executor.threads.max

runtime.jvm.memory.usage.after.gc

runtime.jvm.gc.memory.promoted

runtime.jvm.gc.overhead

runtime.jvm.threads.peak

runtime.jvm.threads.states
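One way to find affected dashboards and detectors is to search their program text for the metric names listed above. The sketch below does this with a regular expression that matches whole metric names only, so `executor.threads` is not reported when the text contains `executor.threads.active`. How you export the program text from Splunk Observability Cloud is outside the scope of this sketch, and the function name is hypothetical.

```python
from __future__ import annotations

import re

# Metric names listed in this section as no longer reported.
REMOVED_METRICS = (
    "db.pool.connections",
    "executor.tasks.completed",
    "executor.tasks.submitted",
    "executor.threads",
    "executor.threads.active",
    "executor.threads.core",
    "executor.threads.idle",
    "executor.threads.max",
    "runtime.jvm.memory.usage.after.gc",
    "runtime.jvm.gc.memory.promoted",
    "runtime.jvm.gc.overhead",
    "runtime.jvm.threads.peak",
    "runtime.jvm.threads.states",
)


def find_removed_metrics(text: str) -> list[str]:
    """Return the removed metric names that appear in text as whole names."""
    found = []
    for name in REMOVED_METRICS:
        # Reject matches that are part of a longer dotted name on either side.
        pattern = r"(?<![\w.])" + re.escape(name) + r"(?!\.?\w)"
        if re.search(pattern, text):
            found.append(name)
    return found


if __name__ == "__main__":
    program = "A = data('executor.threads.active').publish()"
    print(find_removed_metrics(program))
```

An empty result means none of the listed names appear in the text. It does not guarantee that the chart or detector is unaffected by other renamed metrics.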

Built-in dashboards

Use the following links to access the built-in OTel Java 2.X APM services dashboards:

Service: Service-level indicators from APM tracing data.

Service Endpoint: Endpoint-level indicators from APM tracing data.

Java runtime metrics (OTel 2.X): Metrics from instrumented services.

Optionally, you can navigate to the dashboards on your own:

In the left navigation menu, select Dashboards.

In the Built-in dashboard groups section, scroll down to the APM Java services (OTel 2.X) dashboard group, where the three dashboards are grouped.

Note

To view the built-in dashboards, the jvm.classes.loaded metric must be received by Splunk Observability Cloud.

Troubleshooting

If you are a Splunk Observability Cloud customer and are not able to see your data in Splunk Observability Cloud, you can get help in the following ways.

Available to Splunk Observability Cloud customers

Submit a case in the Splunk Support Portal.

Contact Splunk Support.

Available to prospective customers and free trial users

Ask a question and get answers through community support at Splunk Answers.

Join the Splunk #observability user group Slack channel to communicate with customers, partners, and Splunk employees worldwide. To join, see Chat groups in the Get Started with Splunk Community manual.